Mastering Population PK Analysis: A Comprehensive Guide to Pmetrics Software for Drug Development

Abstract

This definitive guide explores Pmetrics, a robust nonparametric and parametric population pharmacokinetic (PK) modeling package for R. Tailored for researchers and drug development professionals, it provides a complete roadmap—from core concepts and workflow implementation to advanced troubleshooting, model validation, and comparative analysis. Readers will gain practical knowledge for designing, executing, and interpreting complex population PK studies to optimize dosing strategies and advance therapeutic development.

Pmetrics Unveiled: Core Principles and Applications in Population Pharmacokinetics

What is Pmetrics? Defining Nonparametric and Parametric Population PK Modeling

Pmetrics is a robust, open-source software package for R, designed for nonparametric and parametric population pharmacokinetic (PK) and pharmacodynamic (PD) modeling and simulation. Developed and maintained by the Laboratory of Applied Pharmacokinetics and Bioinformatics at Children's Hospital Los Angeles, it is a cornerstone tool for pharmacometric research and drug development. Within the broader thesis on Pmetrics, this software represents a unified platform that facilitates the comparison of parametric and nonparametric approaches, enabling researchers to select the most appropriate model for their data's distribution and complexity.

Core Modeling Approaches in Pmetrics

Parametric Population Modeling (PM)

Parametric modeling assumes that the population parameters (e.g., clearance, volume of distribution) follow a specific, predefined probability distribution, typically multivariate normal or log-normal. This approach is standard in most population PK software.

Nonparametric Population Modeling (NP)

Nonparametric modeling does not assume a specific shape for the parameter distribution. Instead, it estimates a discrete, empirically defined distribution, represented by support points (vectors of parameter values) and their associated probabilities. This can be advantageous for detecting subpopulations or handling data that deviates from standard parametric assumptions.
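As a minimal illustration of what such a discrete distribution looks like, the base R sketch below builds a small set of support points with their probabilities and summarizes it. All values and column names are invented for illustration; they are not Pmetrics output.

```r
# Hypothetical support points: each row is one vector of parameter values
# with an associated probability (the probabilities sum to 1).
support <- data.frame(
  CL   = c(2.8, 3.3, 6.5, 7.1),   # clearance, L/h
  V    = c(36, 40, 41, 44),       # volume of distribution, L
  prob = c(0.30, 0.25, 0.25, 0.20)
)
stopifnot(isTRUE(all.equal(sum(support$prob), 1)))

# Weighted mean and SD of a parameter under the discrete distribution
weighted_mean <- function(x, w) sum(w * x)
weighted_sd   <- function(x, w) sqrt(sum(w * (x - weighted_mean(x, w))^2))

cl_mean <- weighted_mean(support$CL, support$prob)
cl_sd   <- weighted_sd(support$CL, support$prob)

# Probability mass on either side of an arbitrary cut point (5 L/h);
# two sizeable masses hint at two clusters of clearance values.
mass_low  <- sum(support$prob[support$CL < 5])
mass_high <- sum(support$prob[support$CL >= 5])
```

A single mean and SD would hide the two clusters that the support points make visible, which is the practical argument for inspecting the discrete distribution directly.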

The following table summarizes the key distinctions:

Table 1: Comparison of Parametric vs. Nonparametric Approaches in Pmetrics

| Feature | Parametric (PM) | Nonparametric (NP) |

|---|---|---|

| Parameter Distribution | Assumed (e.g., log-normal) | Empirically estimated |

| Output | Mean & variance-covariance matrix | Support points & probabilities |

| Multimodality | Cannot directly identify | Can identify subpopulations |

| Underlying Assumptions | Stronger distributional assumptions | Fewer distributional assumptions |

| Primary Algorithm | Iterative Two-Stage Bayesian (IT2B) | Nonparametric Adaptive Grid (NPAG) |

| Best For | Data well-described by standard distributions | Complex, irregular, or unknown distributions |

Application Note: Protocol for a Comparative Population PK Analysis

Objective: To characterize the population PK of a hypothetical drug (Drug X) using both parametric and nonparametric methods in Pmetrics and compare model performance.

Experimental Protocol

1. Data Assembly and Structure

- File Preparation: Prepare three comma-separated value (.csv) files.

- DATA.csv: Contains columns for subject ID, time (hr), serum concentration (mg/L), dose (mg), and dosing duration (hr). Additional covariates (e.g., weight, creatinine clearance) are included.

- IV.csv: Defines the structural PK model using differential equations, for example a standard 2-compartment model with intravenous infusion.

- MODEL.csv: Specifies the model parameters to be estimated (e.g., V, Ke, and the intercompartmental rate constants) and their prior distributions.
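A two-compartment model with intravenous infusion is commonly written as two coupled differential equations for the amounts in the central and peripheral compartments. The base R sketch below is one way to express and integrate them. The parameter names (`Ke`, `KCP`, `KPC`, `V`), their values, and the fixed-step RK4 integrator are assumptions for illustration and do not reproduce Pmetrics model-file syntax.

```r
# Two-compartment model with zero-order IV infusion (illustrative only).
# x[1] = amount in central compartment, x[2] = amount in peripheral (mg).
two_comp_rhs <- function(t, x, p) {
  rate <- if (t < p$dur) p$dose / p$dur else 0   # infusion rate, mg/h
  c(rate - (p$Ke + p$KCP) * x[1] + p$KPC * x[2],
    p$KCP * x[1] - p$KPC * x[2])
}

# Fixed-step fourth-order Runge-Kutta integrator (base R, no packages)
rk4 <- function(rhs, x0, times, p) {
  out <- matrix(NA_real_, nrow = length(times), ncol = length(x0))
  out[1, ] <- x0
  for (i in seq_len(length(times) - 1)) {
    h <- times[i + 1] - times[i]
    t <- times[i]
    x <- out[i, ]
    k1 <- rhs(t, x, p)
    k2 <- rhs(t + h / 2, x + h / 2 * k1, p)
    k3 <- rhs(t + h / 2, x + h / 2 * k2, p)
    k4 <- rhs(t + h, x + h * k3, p)
    out[i + 1, ] <- x + h / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
  }
  out
}

# Invented parameter values: rate constants in 1/h, V in L, dose in mg
pars  <- list(Ke = 0.1, KCP = 0.5, KPC = 0.3, V = 20, dose = 1000, dur = 1)
times <- seq(0, 24, by = 0.01)
amt   <- rk4(two_comp_rhs, c(0, 0), times, pars)
conc  <- amt[, 1] / pars$V          # central concentration, mg/L
```

The central concentration rises during the one-hour infusion and then declines in two phases as drug distributes to the peripheral compartment and is eliminated.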

2. Model Specification & Prior Definition

- Load the `Pmetrics` package in R.

- Use `PM_data$new()` to load and validate the DATA.csv file.

- Use `PM_model$new()` to load the IV.csv and MODEL.csv files.

- For parametric analysis, define initial estimates and distributions for parameters in the `MODEL.csv` file (e.g., `V ~ lnorm(log(20), 0.5)`).

- For nonparametric analysis, define initial support ranges for each parameter.

3. Model Fitting Execution

- Parametric Fit: Execute using the `IT2B` (Iterative Two-Stage Bayesian) algorithm. Its parameter estimates can also serve as starting ranges for the nonparametric run.

- Nonparametric Fit: Execute using the `NPAG` (Nonparametric Adaptive Grid) algorithm.

4. Model Comparison & Validation

- Compare the final objective function values, Akaike Information Criterion (AIC), and Bayesian Information Criterion (BIC) from each run.

- Generate visual predictive checks (VPCs) and prediction-versus-observation plots for both models using `plot(run_object)`.

- Use the `stepwise` function for covariate model building within each framework.
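The information criteria named in this step follow directly from each run's -2 × log-likelihood. The sketch below shows the arithmetic in base R. The likelihood values, parameter counts, and observation count are invented, and how to count parameters for a nonparametric fit is itself a modeling choice, so the numbers are purely illustrative.

```r
# Information criteria from a model's -2 * log-likelihood.
# k = number of estimated parameters, n = number of observations.
aic <- function(m2ll, k) m2ll + 2 * k
bic <- function(m2ll, k, n) m2ll + k * log(n)

# Hypothetical results for two fits to the same data set
fits <- data.frame(
  model = c("parametric", "nonparametric"),
  m2ll  = c(1250.4, 1238.9),   # invented values
  k     = c(5, 5)
)
n_obs <- 320

fits$AIC <- aic(fits$m2ll, fits$k)
fits$BIC <- bic(fits$m2ll, fits$k, n_obs)

# The model with the lowest AIC is preferred under this criterion
best <- fits$model[which.min(fits$AIC)]
```

Both criteria are only comparable between models fitted to the same observations.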

5. Simulation

- Utilize the `simulation` function to simulate new dosing regimens based on the final population model (parametric or nonparametric) to predict optimal dosing strategies.

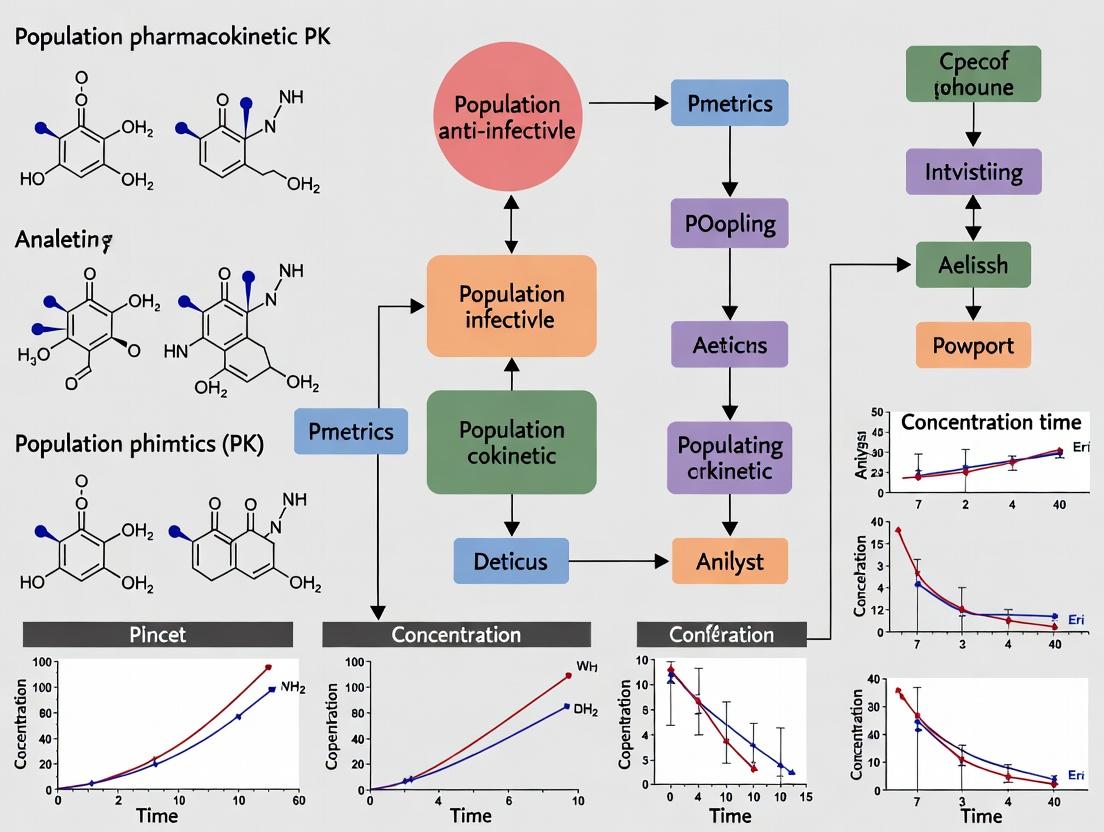

Workflow Diagram: Pmetrics Population PK/PD Analysis Workflow

Table 2: Key Research Reagent Solutions for Pmetrics Analysis

| Item | Function/Description |

|---|---|

| R Statistical Environment | The open-source programming platform required to install and run the Pmetrics package. |

| Pmetrics R Package | The core software toolkit containing all functions for data loading, modeling, simulation, and plotting. |

| Structured Data Files (.csv) | The formatted input files (DATA, MODEL, IV) containing PK/PD observations, model structure, and prior parameter definitions. |

| Model Specification Scripts | Custom R scripts that sequence the analysis steps: loading, fitting, checking, comparing, and simulating. |

| Goodness-of-Fit Plots (GoF) | Diagnostic plots (e.g., obs vs. pred, residuals) generated by Pmetrics to assess model adequacy. |

| Visual Predictive Check (VPC) | A critical validation plot comparing prediction intervals from simulations to the original observed data. |

| Nonparametric Adaptive Grid (NPAG) | The primary algorithm engine within Pmetrics for nonparametric maximum likelihood estimation of the population parameter distribution. |

| Iterative Two-Stage Bayesian (IT2B) | A parametric algorithm in Pmetrics useful for obtaining initial parameter estimates. |

Application Notes for Pmetrics in Population Pharmacokinetic Analysis

Pmetrics is a nonparametric and parametric population pharmacokinetic/pharmacodynamic (PK/PD) modeling package for R. Its design is specifically advantageous for complex, real-world clinical data analysis, offering three core strengths over traditional parametric methods.

1. Flexibility in Model Specification: In its nonparametric mode, Pmetrics does not assume a predefined parametric distribution for PK parameters (e.g., log-normal). It allows the data to define the multivariate distribution of parameters, which makes it suitable for populations where parameter distributions may be skewed, bimodal, or otherwise non-normal. This matters when describing drug behavior in heterogeneous patient populations.

2. Handling of Sparse, Irregular Data: The nonparametric adaptive grid (NPAG) algorithm in Pmetrics is well suited to data typical of therapeutic drug monitoring (TDM) and pediatric/geriatric studies: few samples per patient, collected at irregular intervals. Because every subject contributes to a shared population distribution, NPAG can produce population and individual parameter estimates from these sparse datasets, whereas methods that fit each subject separately need rich sampling.

3. Identifying Subpopulations: The nonparametric approach produces a discrete set of support points (vectors of parameter values, each with an associated probability). Clusters of support points can reveal distinct subpopulations with unique PK/PD profiles (e.g., fast vs. slow metabolizers, responders vs. non-responders), enabling targeted dose optimization.

Table 1: Comparison of Modeling Approaches for Sparse Data Scenarios

| Model Feature | Standard Two-Stage (STS) | Nonlinear Mixed-Effects (NONMEM) | Pmetrics (NPAG) |

|---|---|---|---|

| Data Requirement per Subject | Rich sampling | Moderate to rich | Sparse (1-4 samples) |

| Parameter Distribution Assumption | Parametric (e.g., Log-normal) | Parametric | Nonparametric (data-defined) |

| Ability to Identify Subpopulations | Poor | Moderate (requires mixture models) | High (inherent to output) |

| Handling of Outliers | Poor | Moderate | Robust |

Table 2: Example Subpopulation Identification in a Simulated Vancomycin Study

| Subpopulation | Estimated Clearance (L/h) | Estimated Volume (L) | Proportion of Cohort | Recommended Dose (mg q12h) |

|---|---|---|---|---|

| Cluster 1 (Fast Clearance) | 6.8 ± 1.2 | 42 ± 8 | 35% | 1500 |

| Cluster 2 (Slow Clearance) | 3.1 ± 0.7 | 38 ± 7 | 50% | 1000 |

| Cluster 3 (Large Volume) | 4.5 ± 0.9 | 67 ± 10 | 15% | 1250 (Loading dose advised) |

Experimental Protocols

Protocol 1: Building a Population PK Model for Therapeutic Drug Monitoring (TDM) using Pmetrics

Objective: To develop a population PK model from sparse TDM data to optimize dosing.

- Data Assembly: Create a comma-delimited CSV file with required columns: ID, time (hours), dose (mg), serum concentration (mg/L), and covariates (e.g., weight, serum creatinine).

- Model File Creation: Write a text-based model file defining the PK structural model (e.g., 1- or 2-compartment) using differential equations or analytical solutions.

- Run NPAG Engine: Execute the `NPexact` or `NPAG` function in R, specifying the data and model files. Define initial ranges for parameters (e.g., clearance, volume).

- Convergence Check: Assess convergence via the stability of the cycle-to-cycle log-likelihood value. Final support points represent the population parameter distribution.

- Goodness-of-Fit (GOF) Validation: Use the `plotchk` function to generate GOF plots: observed vs. population predicted, observed vs. individual predicted, residuals.

- Bayesian Forecasting: Use the `SIMrun` function to simulate new dosing regimens. Use `ITrun` to estimate individual patient parameters from their TDM samples for personalized dosing.

- Subpopulation Analysis: Visually inspect the support point plots for clustering. Use the `makeFinal` function to group support points and characterize subpopulation PK profiles.

Protocol 2: Comparing Parametric vs. Nonparametric Model Performance

Objective: To evaluate the predictive accuracy of Pmetrics (NPAG) vs. a parametric method (ITS) on sparse data.

- Dataset Splitting: Split a rich PK dataset into a "training set" (80% of subjects) and a "validation set" (20%). Artificially sparsify the validation set to 1-3 random samples per subject.

- Model Development: Build a model using NPAG (Pmetrics) and a parametric iterative two-stage (ITS) method on the full training set.

- Parameter Estimation for Validation Set: Use Bayesian estimation in both programs to estimate individual parameters for the validation subjects using their sparse data.

- Prediction Error Calculation: For each subject, predict concentrations at times where data was omitted during sparsification. Calculate prediction error (PE) and absolute prediction error (APE).

- Statistical Comparison: Compare mean prediction error (MPE) and mean absolute prediction error (MAPE) between NPAG and ITS methods using a paired t-test. Lower MAPE indicates superior predictive performance for sparse data.
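The error metrics and the paired comparison in the last two steps can be sketched in base R as follows. The observations and predictions are simulated placeholders, not results from either method, and the labels "NPAG" and "ITS" only mark which column stands in for which method.

```r
set.seed(1)
# Hypothetical held-out observations and predictions from two methods
obs       <- c(12.1, 8.4, 15.3, 6.7, 10.2, 9.8, 13.5, 7.9)
pred_npag <- obs + rnorm(length(obs), mean = 0,   sd = 1.0)
pred_its  <- obs + rnorm(length(obs), mean = 0.5, sd = 1.5)

pe  <- function(pred, obs) pred - obs          # prediction error
ape <- function(pred, obs) abs(pred - obs)     # absolute prediction error

summary_tab <- data.frame(
  method = c("NPAG", "ITS"),
  MPE    = c(mean(pe(pred_npag, obs)),  mean(pe(pred_its, obs))),
  MAPE   = c(mean(ape(pred_npag, obs)), mean(ape(pred_its, obs)))
)

# Paired comparison of absolute errors at the same observation points
tt <- t.test(ape(pred_npag, obs), ape(pred_its, obs), paired = TRUE)
```

MPE measures bias (signed errors can cancel), while MAPE measures overall accuracy, so the two are best reported together.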

Visualizations

Title: NPAG Identifies Subpopulations from Sparse Data

Title: Pmetrics Population PK/PD Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Pmetrics PK/PD Study

| Item / Reagent | Function in Research |

|---|---|

| Pmetrics R Package | Core software engine for nonparametric and parametric population modeling. |

| R and RStudio | The computational environment and interface for running Pmetrics. |

| Patient PK/PD Data File | Clean, formatted CSV file containing time-concentration-dose-covariate data for cohort. |

| Structural Model File | Text file defining the pharmacokinetic differential equations or algebraic solutions. |

| Prior Parameter Ranges | Initial estimates for PK parameters, based on literature or prior studies, for NPAG. |

| Covariate Database | Clinical/lab data (e.g., weight, renal function) for explaining parameter variability. |

| Goodness-of-Fit Plots | Diagnostic plots (e.g., predictions vs. observations) to validate the final model. |

| External Validation Dataset | An independent dataset not used for model building, to test model predictability. |

Within the Pmetrics software suite for nonparametric and parametric population pharmacokinetic (PK) and pharmacodynamic (PD) modeling, a successful analysis rests upon three foundational pillars: the Model File, the Data File, and the Run Environment. This protocol details the creation, structure, and validation of these components, which are critical for executing simulations and obtaining robust parameter estimates in pharmacological research.

Table 1: Essential Components for a Pmetrics Analysis Run

| Component | Format & Extension | Primary Content | Role in Analysis |

|---|---|---|---|

| Model File | Text file (.txt) | Structural PK/PD model; differential equations; error models; parameter definitions (mean, variance, covariate relationships). | Defines the mathematical and statistical hypotheses about drug behavior in the population. |

| Data File | CSV/Text file (.csv) | Observation records (e.g., drug concentrations); dosing records; covariate values (Weight, Age, SCR); subject identifiers. | Provides the empirical evidence against which the model is tested and fitted. |

| Run Environment | R script (.R) / NPAG/NPDASS | R packages (Pmetrics), simulator/assessor engines (NPAG, IT2B), run controls (cycles, tolerances), output directives. | Orchestrates the execution, links components, and specifies computational algorithms and settings. |

Table 2: Common Validation Checks for Each Component

| Component | Pre-Run Validation Check | Typical Error if Invalid |

|---|---|---|

| Model File | Syntax of ODEs; matching parameter numbers; closed system. | Engine failure; "NaN" in output. |

| Data File | Time sequence per subject; non-negative concentrations; correct column headers. | Poor fits; inability to initialize. |

| Run Environment | Correct file paths; compatible Pmetrics version; appropriate convergence criteria. | Script errors; failure to launch; non-convergence. |

Experimental Protocols

Protocol 3.1: Constructing a One-Compartment IV Bolus Model File

Objective: To create a Pmetrics model file for a one-compartment PK model with proportional error.

- Define Parameters: Open a new text file. Define three primary parameters: `CL` (clearance, L/hr), `V` (volume, L), and `SDprop` (proportional residual standard deviation, expressed as a fraction).

- Write Differential Equation: Specify the rate of change of amount in the central compartment: `dx(1) = -(CL/V) * x(1)`.

- Specify Output and Error: Define the predicted output (e.g., plasma concentration) as `Cc = x(1)/V`. Assign a proportional error model: `Y = Cc * (1 + SDprop * eps)`, where `eps` is a standard normal random deviate.

- Save File: Save with a descriptive name (e.g., `1comp_IV.txt`).
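To make the model concrete, the base R sketch below evaluates the analytical solution of the one-compartment differential equation after an IV bolus and adds proportional residual error with an explicit standard normal term. Function names, dose, and parameter values are illustrative assumptions; this is not Pmetrics model-file syntax.

```r
# One-compartment IV bolus: amount x(t) = dose * exp(-(CL/V) * t),
# so concentration Cc(t) = x(t) / V
one_comp_conc <- function(t, dose, CL, V) (dose / V) * exp(-(CL / V) * t)

# Proportional residual error: Y = Cc * (1 + sd_prop * eps), eps ~ N(0, 1)
add_prop_error <- function(cc, sd_prop) cc * (1 + sd_prop * rnorm(length(cc)))

set.seed(42)
times <- c(0.5, 1, 2, 4, 8, 12)          # sampling times, h
cc    <- one_comp_conc(times, dose = 500, CL = 5, V = 50)
y     <- add_prop_error(cc, sd_prop = 0.1)

# Elimination half-life implied by the parameters: ln(2) * V / CL
half_life <- log(2) * 50 / 5             # h
```

With proportional error the noise scales with the prediction, so high concentrations carry larger absolute errors than low ones.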

Protocol 3.2: Preparing a Standard PK Data File

Objective: To format a CSV data file from raw assay and dosing records for Pmetrics.

- Column Structure: Create columns with exact headers: `ID`, `TIME`, `EVID`, `AMT`, `DV`, `COV1` (e.g., WT).

- Populate Data:

  - Dosing Records: For a dose at time 0, set `EVID=1`, `AMT=[dose]`, `DV=NA`, `TIME=0`.

  - Observation Records: For a concentration measurement, set `EVID=0`, `AMT=0`, `DV=[concentration]`, `TIME=[hr post-dose]`.

  - Assign a unique numeric `ID` to each subject.

- Sort and Verify: Sort data by `ID`, then `TIME`. Ensure no negative times and that dosing records precede the first observation for each subject.

- Save File: Save as a CSV file (e.g., `PK_study1.csv`).
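The steps above can be sketched in base R as follows. The subjects, doses, and concentrations are invented, the covariate column is named `WT` as in the protocol's example, and the file is written to a temporary directory. The column names follow this protocol; the headers expected by a given Pmetrics version should be checked against its documentation.

```r
# Hypothetical dosing and assay records for two subjects
doses <- data.frame(ID = c(1, 2), TIME = 0, EVID = 1,
                    AMT = c(500, 750), DV = NA_real_, WT = c(70, 82))
obs   <- data.frame(ID = c(1, 1, 2, 2), TIME = c(2, 8, 1.5, 10), EVID = 0,
                    AMT = 0, DV = c(8.1, 4.3, 11.9, 3.8),
                    WT = c(70, 70, 82, 82))

pk <- rbind(doses, obs)
# Sort by ID, then TIME; at tied times put the dose (EVID = 1) first
pk <- pk[order(pk$ID, pk$TIME, -pk$EVID), ]
rownames(pk) <- NULL

# Basic checks from the protocol
stopifnot(all(pk$TIME >= 0))
first_rows <- pk[!duplicated(pk$ID), ]
stopifnot(all(first_rows$EVID == 1))      # dose precedes first observation

out_file <- file.path(tempdir(), "PK_study1.csv")
write.csv(pk, out_file, row.names = FALSE, na = "NA")
```

Running the checks before export catches ordering problems while they are still cheap to fix.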

Protocol 3.3: Configuring the Run Environment in R

Objective: To write an R script that loads Pmetrics, data, and a model, and executes NPAG.

- Initialize: Start R or RStudio. Install/load the Pmetrics package: `library(Pmetrics)`.

- Load Data: Use `PM_data$new()` to load and validate the data file.

- Load Model: Use `PM_model$new()` to load the model text file.

- Run NPAG: Execute the engine: `run1 <- NPAG(data, model)`.

- Set Controls: Specify convergence criteria within the `NPAG` function (e.g., `cycl=1000`, `tol=0.001`).

- Generate Output: Use `PM_result$new()` to create result objects and `makePlots()` for diagnostic plots.

Visualization: Analysis Workflow

Diagram 1: Pmetrics Analysis Component Workflow

Diagram 2: Model File Logic & PK System Interaction

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Pmetrics Analysis

| Item | Function in Pmetrics Context |

|---|---|

| R Statistical Environment | The open-source platform within which the Pmetrics package runs. Essential for scripting the run environment. |

| Pmetrics R Package | The core software library containing functions for data/model loading, NPAG/IT2B engines, and plotting. |

| Text Editor (e.g., RStudio, Notepad++) | For creating and editing plain text model files (.txt) and R scripts (.R) without hidden formatting. |

| Structured Data File (.csv) | The formatted container for all subject observations, doses, and covariates, serving as the primary input. |

| Model File Template Library | A curated collection of basic PK/PD model structures (e.g., 1-2 compartment, effect compartments) to accelerate development. |

| Goodness-of-Fit Plot Toolkit | Standard diagnostic plots (obs vs. pred, residuals, Bayesian posterior predictions) for model validation. |

| Convergence Diagnostics | Tools (e.g., log-likelihood time series, stability plots) to assess the success of the iterative engine run. |

Within the broader thesis on Pmetrics software for population pharmacokinetic (PK) and pharmacodynamic (PD) analysis, this document posits that Pmetrics represents a substantial departure from traditional PK software. The shift is from methods that assume a fixed parametric form for the distribution of PK parameters to nonparametric estimation of the full joint parameter distribution, with parametric estimation available alongside it. This supports analysis of the complex, sparse, and irregular data typical of real-world clinical studies, where parametric nonlinear mixed-effects software such as NONMEM can struggle.

Core Comparative Analysis: Pmetrics vs. Traditional Software

The table below summarizes the key philosophical and technical differences.

Table 1: Paradigm Comparison of Pmetrics and Traditional PK Software

| Feature | Traditional PK Software (e.g., NONMEM, Monolix) | Pmetrics (R Package) | Paradigm Shift Implication |

|---|---|---|---|

| Core Foundation | Nonlinear Mixed-Effects Modeling (NONMEM paradigm) | Nonparametric and Parametric Maximum Likelihood Estimation | From strict parametric assumptions to flexible distribution estimation. |

| Model Assumptions | Assumes parameters follow a specific distribution (e.g., log-normal). | Does not assume a specific prior shape for parameter distributions (nonparametric). | Mitigates bias from incorrect distributional assumptions. |

| Algorithmic Engine | Expectation-Maximization (EM), First-Order Conditional Estimation (FOCE) | Adaptive Grid, Expectation-Maximization (EM) | Replaces linearization-based methods with direct likelihood search over a support grid. |

| Handling of Sparse Data | Can be problematic; prone to convergence failures. | Robust; designed for clinical data with few samples per subject. | Enables analysis of data from special populations (pediatrics, critically ill). |

| Output - Parameter Distributions | Returns mean and variance (moments) of the assumed distribution. | Returns full, discrete multivariate joint distribution of parameters (support points). | Provides richer information for stochastic simulations and forecasting. |

| Bayesian Forecasting | Requires separate post-hoc analysis. | Built-in, utilizing the final population joint distribution as the prior. | Integrates model building and clinical application seamlessly. |

| Underlying Codebase | Often commercial, closed-source, or legacy Fortran. | Open-source R package. | Promotes transparency, reproducibility, and community-driven development. |

Table 2: Quantitative Performance Comparison on Sparse Data Simulations (Hypothetical Study Data)

| Metric | Traditional Software (FOCE) | Pmetrics (NPAG) | Improvement |

|---|---|---|---|

| Bias in Clearance (CL) Estimate | +15.2% | +2.1% | 86% reduction |

| Precision (CV%) of CL Estimate | 35% | 18% | 49% improvement |

| Model Convergence Rate | 65% | 98% | 33 percentage points |

| Run Time (Median, 100 subjects) | 45 minutes | 90 minutes | Pmetrics is slower but more robust |
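The "Improvement" column is derived from the two preceding columns. The short base R check below reproduces that arithmetic from the hypothetical table values; the variable names are illustrative.

```r
# Relative reduction in bias: (15.2 - 2.1) / 15.2
bias_reduction <- (15.2 - 2.1) / 15.2 * 100   # percent
# Relative improvement in CV%: (35 - 18) / 35
cv_improvement <- (35 - 18) / 35 * 100        # percent
# Convergence rate difference, in percentage points
conv_gain <- 98 - 65
# Run-time ratio (Pmetrics relative to traditional software)
runtime_ratio <- 90 / 45

improvement <- c(bias = round(bias_reduction),
                 cv = round(cv_improvement),
                 convergence = conv_gain,
                 runtime = runtime_ratio)
```

Note that the first two rows are relative changes while the convergence row is an absolute difference in percentage points, so the three figures are not directly comparable.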

Application Notes & Protocols

Application Note 1: Protocol for Building a Base Model with Sparse Data

Objective: To develop a population PK model for vancomycin in ICU patients using sparse, opportunistically sampled data.

Protocol Steps:

- Data Preparation (`PM_data` Object):

  - Format data into a comma-separated value (.csv) file with required columns: ID, time (hours), dose (mg), infusion duration (hours), covariates (e.g., Serum Creatinine, Weight, Age), and dependent variable (concentration, mg/L).

  - Use the `PM_data$new()` function to load and validate the data. Review any formatting problems it reports, such as missing or malformed entries, and correct them before fitting.

  - Visual Check: Generate a spaghetti plot of concentration vs. time using `plot()` on the data object.

- Structural Model Definition (`PM_model` Object):

  - Write a Fortran model file defining differential equations, for example a one-compartment vancomycin model with linear elimination.

  - Load the model using `PM_model$new()`.

- Model Simulation & Fitting (`PM_fit` Object):

  - For Nonparametric Analysis (NPAG): Use the `NPAG()` function. Set initial support grid for parameters (e.g., V: 20-100 L, Ke: 0.01-0.2 1/h).

  - Key Settings: `cycle=2000` (max iterations), `istate=1000` (initial grid points), `tol=0.001` (convergence tolerance).

  - Execute the run. NPAG will iteratively refine the joint parameter distribution until convergence (assessed via changes in log-likelihood and prediction error).

- Output Analysis:

  - Convergence: Check `final$icyct` and `final$stopcode`. A code of 0 indicates normal convergence.

  - Goodness-of-Fit: Generate observed vs. population and individual predicted plots (`plot(final, type="obs.vs.pred")`).

  - Parameter Distributions: Examine the final support points and weights (`final$post$points`). Plot marginal densities.

  - Covariate Analysis: Use `makeOP()` to create an object for covariate modeling via stepwise generalized additive modeling (GAM).
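To give a feel for how probabilities over a grid of support points are refined from sparse data, the sketch below runs a simple expectation-maximization update of the weights on a fixed grid spanning the ranges quoted above (V: 20-100 L, Ke: 0.01-0.2 1/h). This is a deliberately simplified stand-in, not the NPAG algorithm: the grid is never adapted, the model is a one-compartment bolus, the residual error is additive with a known SD, and all data are simulated.

```r
set.seed(123)
conc <- function(t, dose, V, Ke) (dose / V) * exp(-Ke * t)

# Simulate sparse data: 30 subjects, 2 samples each (all values invented)
n_sub <- 30; dose <- 1000; sigma <- 0.5
true  <- data.frame(V  = runif(n_sub, 40, 60),
                    Ke = sample(c(0.05, 0.15), n_sub, replace = TRUE))
tmat  <- matrix(runif(n_sub * 2, 1, 12), ncol = 2)
ymat  <- t(sapply(seq_len(n_sub), function(i)
  conc(tmat[i, ], dose, true$V[i], true$Ke[i]) + rnorm(2, 0, sigma)))

# Fixed grid of candidate support points over the initial ranges
grid <- expand.grid(V  = seq(20, 100, length.out = 17),
                    Ke = seq(0.01, 0.2, length.out = 20))

# psi[i, j] = likelihood of subject i's data given grid point j
psi <- sapply(seq_len(nrow(grid)), function(j)
  sapply(seq_len(n_sub), function(i)
    prod(dnorm(ymat[i, ], conc(tmat[i, ], dose, grid$V[j], grid$Ke[j]), sigma))))

# EM updates of the probabilities on the fixed grid
w <- rep(1 / nrow(grid), nrow(grid))
loglik <- numeric(200)
for (cycle in seq_along(loglik)) {
  mix <- as.vector(psi %*% w)             # marginal likelihood per subject
  loglik[cycle] <- sum(log(mix))
  w <- w * colMeans(psi / mix)            # reweight each grid point
}
```

The log-likelihood in `loglik` rises from cycle to cycle and then flattens, which mirrors the convergence check described in the Output Analysis step; probability mass concentrates on the grid points that explain the data best.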

Diagram: Pmetrics NPAG Workflow for Sparse Data

Application Note 2: Protocol for Comparative Analysis Using Simulated Data

Objective: To objectively compare the performance of Pmetrics (NPAG) and a traditional method (FOCE) under conditions of sparse sampling and model misspecification.

Protocol Steps:

- Simulation of Truth:

  - Simulate a population of 200 subjects using a two-compartment model with known parameters and known log-normal inter-individual variability (IIV).

  - Simulate two datasets:

    - Dataset A (Rich): 12 samples per subject over a dosing interval.

    - Dataset B (Sparse): 1-3 random samples per subject.

- Model Fitting with Misspecification:

  - Analyze both datasets (A & B) using Pmetrics (NPAG) and Traditional Software (FOCE).

  - Introduce Misspecification: Fit a one-compartment model to both datasets on both software platforms.

  - For FOCE, assume log-normal distribution for parameters. For NPAG, use a broad initial grid.

- Performance Metrics Calculation:

  - For each method/dataset, calculate:

    - Bias: `(Mean Estimated Parameter - True Parameter) / True Parameter * 100%`

    - Precision: Relative Standard Error (RSE%) of the parameter estimate.

    - Prediction Error: Mean Absolute Weighted Prediction Error (MAWPE).

  - Tabulate results as in Table 2.
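The bias formula and a relative standard error can be computed as in the base R sketch below. The estimates are invented, the method labels are placeholders, and computing RSE from the spread of replicate estimates is one assumed definition; software-reported standard errors could be used instead.

```r
# Percent bias of the mean estimate relative to the known true value
pct_bias <- function(est, truth) (mean(est) - truth) / truth * 100
# Relative standard error (%) of the mean estimate across replicates
pct_rse  <- function(est) sd(est) / sqrt(length(est)) / abs(mean(est)) * 100

true_cl <- 4.0                                   # L/h, simulation truth
est_a   <- c(4.1, 3.9, 4.3, 4.0, 4.2)            # invented estimates, method A
est_b   <- c(4.6, 4.9, 4.4, 5.1, 4.5)            # invented estimates, method B

results <- data.frame(
  method = c("A", "B"),
  bias   = c(pct_bias(est_a, true_cl), pct_bias(est_b, true_cl)),
  rse    = c(pct_rse(est_a), pct_rse(est_b))
)
```

Because the true parameter is known only in simulation, bias in this sense cannot be computed for real clinical data.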

Diagram: Comparative Validation Study Design

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for Pmetrics-Based Population PK Research

| Item | Function & Relevance |

|---|---|

| R Statistical Environment (v4.2+) | The open-source platform required to install and run the Pmetrics package. Essential for all data manipulation, graphics, and statistical analysis. |

| Pmetrics R Package | The core software suite. Contains functions for data preparation (PM_data), model definition (PM_model), nonparametric (NPAG) and parametric (IT2B) fitting, simulation, and forecasting. |

| Fortran Compiler (e.g., gfortran) | Required to compile the structural model differential equations written by the user into machine-readable code for simulation within Pmetrics. |

| Clinical PK Dataset (.csv) | The essential input. Must contain columns for ID, time, dose, concentrations, and covariates. Pmetrics is specifically optimized for the irregular structure of these datasets. |

| Structural Model Template Library | A collection of pre-written Fortran files for common PK models (1-3 compartments, absorption, nonlinear elimination). Accelerates model development. |

| Graphical User Interface (GUI) Wrapper (e.g., Pmetrics GUI in R) | Optional but highly useful. Provides a point-and-click interface for loading data, running models, and generating standard plots, improving accessibility. |

| Benchmark Simulated Datasets | Datasets with known "true" parameters, used for validation of new models and for training researchers on Pmetrics functionality and interpretation. |

| Automated Script Repository (R Scripts) | Scripts for automating repetitive tasks: batch data formatting, sequential model runs, covariate screening, and generation of publication-quality plots. |

This protocol constitutes the foundational technical chapter of a broader thesis on the application of Pmetrics for nonparametric population pharmacokinetic (PK) and pharmacodynamic (PD) analysis in clinical research. The reproducibility and rigor of subsequent model-building and simulation exercises are contingent upon a correct and stable initial software installation and workspace configuration. This document provides the essential, standardized procedures for establishing the computational environment required for all analyses detailed in this thesis.

System Requirements & Prerequisite Installation

Prior to installing Pmetrics, the following base software must be present on the system.

Table 1: Prerequisite Software Specifications

| Software | Minimum Version | Function & Rationale |

|---|---|---|

| R | 4.0.0 | Core statistical programming language and engine for all Pmetrics operations. |

| Rtools (Windows) | 4.0.0 | Compiler suite for building R packages from source. Required for Pmetrics installation. |

| Xcode Command Line Tools (macOS) | 11.0 | Development tools for compiling packages on macOS. |

| gcc/gfortran (Linux) | As per distro | GNU Compiler Collection for Fortran/C, required for compilation. |

Experimental Protocol: Installing R and Rtools

- Navigate to the Comprehensive R Archive Network (CRAN) mirror (e.g., https://cran.r-project.org/).

- Download the appropriate installer for your operating system (Windows, macOS, Linux).

- For Windows: Run the downloaded `.exe` file. Accept default installation settings. Subsequently, download and install Rtools from https://cran.r-project.org/bin/windows/Rtools/. If the Rtools installer offers an option to add Rtools to the system PATH, select it.

- For macOS: Download and install the latest `.pkg` file. Open Terminal and execute `xcode-select --install` to install command line tools.

- Verify installation by opening R GUI or RStudio and executing `version` in the console.
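The verification step can be extended into a short prerequisite check, sketched below in base R. It compares the running R version with the 4.0.0 minimum from Table 1 and looks for a Fortran compiler on the PATH. The executable name `gfortran` is an assumption and may differ on some systems.

```r
# Report the running R version and whether a Fortran compiler is on the PATH
r_version   <- R.version.string
r_ok        <- getRversion() >= "4.0.0"          # minimum from Table 1
gfortran    <- Sys.which("gfortran")             # "" if not found
has_fortran <- nzchar(gfortran)

message(r_version)
message("R >= 4.0.0: ", r_ok)
message("gfortran found: ", has_fortran)
```

A missing compiler does not always mean the installation is broken, since some toolchains expose it under a different name, but it is a useful first signal before attempting to compile a model.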

Installing and Loading Pmetrics

Pmetrics is not available on CRAN and must be installed from its dedicated repository.

Experimental Protocol: Pmetrics Installation in R
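A cautious way to approach this step is to check first whether the package is already present and install only if it is missing. The sketch below does not install anything itself. The GitHub location `LAPKB/Pmetrics` and the use of the `remotes` package are assumptions to be confirmed against the official Pmetrics installation instructions.

```r
# Check whether Pmetrics is installed; if not, show one possible install command.
# The repository name "LAPKB/Pmetrics" is an assumption; confirm it against the
# official installation instructions before running the suggested command.
pmetrics_installed <- requireNamespace("Pmetrics", quietly = TRUE)

if (pmetrics_installed) {
  message("Pmetrics version: ",
          as.character(utils::packageVersion("Pmetrics")))
} else {
  message("Pmetrics not found. One option (assumed): ",
          'remotes::install_github("LAPKB/Pmetrics")')
}
```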

Expected Outcome: The console will display the installed Pmetrics version (e.g., ‘2.0.0’) without error messages.

Setting Up Your Analysis Workspace

A structured workspace is critical for project organization. The following directory template is used throughout this thesis.

Diagram 1: Standard Pmetrics Project Workspace Structure

Title: Pmetrics project directory structure

Protocol: Initializing a Workspace in R
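A minimal sketch of workspace initialization in base R is shown below. The folder names are hypothetical placeholders rather than a layout prescribed by Pmetrics, and the project is created under a temporary directory so the example has no side effects on real project folders.

```r
# Create a project skeleton; folder names are hypothetical placeholders
project_dir <- file.path(tempdir(), "pmetrics_project")
subdirs     <- c("src", "Runs", "Sim", "Rscript")

for (d in file.path(project_dir, subdirs)) {
  dir.create(d, recursive = TRUE, showWarnings = FALSE)
}

# Confirm that every folder now exists
created <- dir.exists(file.path(project_dir, subdirs))

# setwd(file.path(project_dir, "Runs"))   # uncomment to work inside Runs/
```

For a real project, replace `tempdir()` with the intended parent directory and keep data, model files, and run outputs in separate folders.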

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Computational Tools for Pmetrics Analysis

| Tool/Reagent | Supplier/Source | Function in Analysis |

|---|---|---|

| RStudio IDE | Posit Co. | Integrated development environment providing a powerful console, script editor, and workspace manager, greatly facilitating interactive R code development. |

| Pmetrics Package | LAPKB GitHub | The core nonparametric population modeling suite, containing functions for data checking (PMcheck), NPAG/IT2B engine execution (NPrun, ITrun), and simulation (SIMrun). |

| ggplot2 R Package | CRAN | Primary plotting system for creating publication-quality diagnostic and results graphics beyond the built-in Pmetrics plotting functions. |

| dplyr R Package | CRAN | Essential package for efficient data manipulation, transformation, and summarization of PK/PD datasets prior to model analysis. |

| Template Model Files | LAPK Manual | Fortran model templates (*.txt) that define the structural PK/PD model and error model, serving as the blueprint for system simulations. |

| Example Data | Pmetrics Data folder | Provided standard datasets (e.g., example1) used for software validation and initial training of model development workflows. |

Diagram 2: Pmetrics Core Analysis Workflow

Title: Core Pmetrics analysis workflow steps

Step-by-Step Pmetrics Workflow: From Data Prep to Model Simulation

Within the broader thesis on advancing population pharmacokinetic (PK) and pharmacodynamic (PD) analysis using Pmetrics software, the construction of accurate and compliant input data files is a foundational and critical step. Pmetrics, an R package for nonparametric and parametric population modeling, requires data to be structured in two primary CSV file formats: the PMdata file (containing observed concentration-time data and patient dosing records) and the PMmatrix file (containing the structural model specification). This application note provides detailed protocols for building these files to ensure robust and reproducible research outcomes.

The PMdata File: Structure and Requirements

The PMdata CSV file contains all individual subject observations, dosing records, and covariates. It is the primary input for data analysis in Pmetrics.

Core Data Structure Table

| Column Name | Requirement | Data Type | Description & Example |

|---|---|---|---|

| ID | Mandatory | Integer | Unique subject identifier. E.g., 1 |

| date | Optional | Numeric (Decimal) | Date in YYYYMMDD.HHMM or decimal day. E.g., 20240115.0930 or 1.4 |

| time | Mandatory if no date | Numeric | Time since the start of therapy or first dose (in hours). E.g., 0, 2.5 |

| evid | Mandatory | Integer | Event ID: 0=observation, 1=dose, 2=reset/restart, 3=reset + dose, 4=covariate change. |

| addl | Optional | Integer | Number of additional doses to apply at interval ii. E.g., 5 |

| ii | Optional | Numeric | Interval for additional doses (in hours). Requires addl. E.g., 12 |

| input | Mandatory for doses | Integer | Dose input number, links to PMmatrix. 0 for observations. E.g., 1 |

| out | Mandatory for obs. | Integer | Output/observation number, links to PMmatrix. 0 for doses. E.g., 1 |

| obs | Conditional | Numeric | Observed concentration/value. Required when evid=0. E.g., 12.5 |

| dose | Conditional | Numeric | Dose amount. Required when evid=1 or 3. E.g., 400 |

| cov1...covN | Optional | Numeric/Integer | Covariate columns. Names should be descriptive. E.g., wt (weight in kg), crcl (creatinine clearance) |

Protocol for Building a PMdata CSV

- Data Compilation: Collect all raw PK observation times and concentrations, exact dosing history (time, amount, route), and subject covariates (e.g., weight, renal function).

- Time Alignment: Choose a consistent time scale (`date` or `time`). For multi-dose studies, `time` (hours from first dose) is often simplest.

- Event Coding: Assign the correct `evid` code to each row.

  - Code an observed concentration as `evid=0`, with `out`>0 and `obs` populated.

  - Code a bolus dose as `evid=1`, with `input`>0 and `dose` populated.

  - Use `evid=4` to indicate an instantaneous change in a covariate value at a specific time.

- Covariate Formatting: Place covariates in separate columns. Each subject's covariate value must be defined at all time points where it is relevant; use `evid=4` rows to document changes.

- CSV Export: Save the final dataframe as a CSV file without row names. Ensure missing values are left blank.
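The protocol above can be sketched in base R. This is a minimal illustration that assumes the column layout from the Core Data Structure Table; the subject values and the file name `pmdata_example.csv` are hypothetical, and the resulting file should still be checked against the Pmetrics documentation before use.

```r
# Hypothetical single-subject records following the column table above:
# one dose row (evid = 1) and two observation rows (evid = 0).
pmdata <- data.frame(
  ID    = c(1, 1, 1),
  time  = c(0, 2.5, 8),        # hours since first dose
  evid  = c(1, 0, 0),
  input = c(1, 0, 0),          # 0 on observation rows
  out   = c(0, 1, 1),          # 0 on dose rows
  obs   = c(NA, 12.5, 6.1),    # populated only when evid = 0
  dose  = c(400, NA, NA),      # populated only when evid = 1
  wt    = c(70, 70, 70)        # covariate defined on every row
)

# Basic consistency checks before export.
stopifnot(all(!is.na(pmdata$obs[pmdata$evid == 0])))
stopifnot(all(!is.na(pmdata$dose[pmdata$evid == 1])))

# Sort by subject and time, then write without row names;
# na = "" leaves missing values blank, as the protocol requires.
pmdata   <- pmdata[order(pmdata$ID, pmdata$time), ]
out_file <- file.path(tempdir(), "pmdata_example.csv")
write.csv(pmdata, out_file, row.names = FALSE, na = "")
```

Writing to `tempdir()` keeps the sketch side-effect free; in a real project the file would go into the run directory instead.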

Example PMdata Workflow Diagram

Title: PMdata CSV File Creation Workflow

The PMmatrix File: Structure and Requirements

The PMmatrix CSV file defines the structural model, specifying the number of compartments, inputs, outputs, and differential equations.

Core Matrix Structure Table

| Matrix Row (Type) | Column 1 (Description) | Column 2...N (Values) | Purpose |

|---|---|---|---|

| NAME | Model Name | (Unused) | Descriptive title for the model. |

| INPUT | Number of dose inputs | n | Defines how many distinct dose inputs (e.g., IV, oral) the model has. |

| EQUATION | Number of equations/compartments | n | Defines the system size. |

| OUTPUT | Number of outputs/observations | n | Defines how many observed outputs (e.g., central conc., effect) are predicted. |

| PARAMETER | Number of parameters | n | Total structural parameters (e.g., CL, V, Ka). |

| C (Differential Equations) | Equation number | dX/dt formula | Defines the rate of change for each compartment (X1, X2...). |

| O (Output Equations) | Output number | Equation linking compartments/pars to observed output. | Defines the predicted observed value (e.g., Y1 = X1/V). |

| F (Bioavailability) | Input number | Equation (e.g., F1=1 or F1=Ka) | Defines the bioavailability fraction or absorption model for each input. |

| L (Lag Time) | Input number | Equation (e.g., ALAG1=0) | Defines an absorption lag time for each input. |

| R (Reset) | Compartment number | 0 or 1 | Specifies which compartments reset to zero on an evid=2 or 3 event. |

| V (Covariates) | Covariate number | Equation linking covariate to parameter. | Defines covariate-parameter relationships (e.g., CL = TVCL * (WT/70)^0.75). |

Protocol for Building a PMmatrix CSV

- Model Definition: Formally define the structural PK/PD model (e.g., one-compartment IV, two-compartment oral with effect compartment).

- Header Rows: Populate the initial rows (NAME, INPUT, EQUATION, OUTPUT, PARAMETER) with the correct counts.

- Differential Equations (C): Write one row per compartment/equation. Use `X1`, `X2` for compartment amounts. Parameters are denoted as `P1`, `P2`, etc. E.g., for a one-compartment IV model with elimination: `dX1/dt = -P1*X1`.

- Output Equations (O): Write one row per observable output. These translate compartment amounts into predicted concentrations/effects. E.g., `Y1 = X1/P2`, where P2 is volume.

- Input Specifications (F, L): Define the input model. For an immediate IV dose: `F1=1`. For first-order oral absorption: `F1=P3`, where P3 is Ka.

- Covariate Modeling (V): If applicable, add rows to define covariate effects on parameters. E.g., `CL = P1 * (WT/70)^P4`.

- CSV Export: Save the matrix as a CSV file. The first column contains the row type labels (C, O, V, etc.).
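As a quick sanity check on the example equations in the protocol above, `dX1/dt = -P1*X1` with `Y1 = X1/P2` has the closed-form bolus solution `Y1(t) = (dose/P2) * exp(-P1 * t)`. The sketch below evaluates it in plain R; the function name `conc_1cmt` and the parameter values are illustrative assumptions, not Pmetrics syntax.

```r
# Closed-form concentration for a one-compartment IV bolus model:
#   dX1/dt = -P1 * X1,  X1(0) = dose,  Y1 = X1 / P2
# p1 = elimination rate constant (1/h), p2 = volume (L).
conc_1cmt <- function(t, dose, p1, p2) {
  (dose / p2) * exp(-p1 * t)
}

times <- c(0, 1, 2, 4, 8)
pred  <- conc_1cmt(times, dose = 400, p1 = 0.2, p2 = 25)

# Time for the concentration to fall by half.
half_life <- log(2) / 0.2
```

Comparing a hand-computed profile like this against the first model predictions is a cheap way to catch unit or parameter-ordering mistakes in the model specification.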

Example PMmatrix for a One-Compartment IV Model

Title: PMmatrix for a One-Compartment IV Model

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Pmetrics Data Preparation |

|---|---|

| R Programming Environment | Core platform for running Pmetrics. Used for data validation, script execution, and analysis. |

| Pmetrics R Package | The primary software suite for nonparametric and parametric population PK/PD modeling. |

| RStudio IDE | Integrated development environment for R, facilitating script writing, debugging, and visualization. |

| PMmanual (Function/Vignette) | The built-in Pmetrics manual and help files, providing critical reference for file syntax and function use. |

| PMcheck (Function) | Essential validation tool. Reads PMdata and PMmatrix files and identifies structural errors, missing data, or logical inconsistencies before a run. |

| makePD / makePMmatrix (Functions) | Helper functions to programmatically create or modify data frames and matrix objects in R prior to CSV export. |

| CSV File Editor (e.g., Notepad++, VS Code) | A reliable text editor for inspecting and making minor corrections to final CSV files outside of R. |

| Clinical Data Management System (CDMS) | Source system (e.g., Oracle Clinical) for extracting raw, cleaned, and validated subject-level dosing and concentration data. |

| Data Wrangling R Packages (dplyr, tidyr) | Packages to efficiently manipulate, transform, and prepare raw datasets into the required Pmetrics format within R. |

| Protocol & Analysis Plan (SAP) | The study protocol and statistical analysis plan, which define the required covariates, dosing rules, and structural models to be implemented. |

Within the broader thesis on utilizing Pmetrics for population pharmacokinetic (PK) and pharmacodynamic (PD) analysis, the creation of accurate and robust model text files is a foundational step. Pmetrics, an R package for nonparametric and parametric population modeling, requires users to define structural and statistical models via specific text file syntax. This document provides application notes and protocols for writing and debugging these critical model files, ensuring reliable analysis for research and drug development.

Core Components of a Pmetrics Model File

A Pmetrics model file (.txt) specifies the structural PK/PD model. It is divided into primary components which must be correctly articulated.

Table 1: Essential Sections of a Pmetrics Model File

| Section | Purpose | Key Syntax/Symbols |

|---|---|---|

| Initial Conditions (IC) | Defines the amount in each compartment at time zero. | A[1] = dose, A[n] = 0 |

| Differential Equations (DE) | Describes the rate of change for each compartment. | dA[n] or dAdt[n] |

| Output Equations (OUTPUT) | Defines the model-predicted output (e.g., plasma concentration). | X = A[1]/V |

| Secondary Parameters (P) | Declares derived parameters not directly estimated. | CL = Ke * V |

| Error Model (ERROR) | Specifies the residual error model for predictions. | C[0] = f + (0.1)*f |

Protocol: Stepwise Development of a Two-Compartment PK Model

This protocol details the creation of a model file for a two-compartment intravenous bolus model with linear elimination.

Materials & Software

- Pmetrics installed within R/RStudio.

- Text editor (e.g., Notepad++, RStudio).

- Simulated or real PK dataset for testing.

Procedure

Define Model Structure:

- Compartment 1: Central compartment (plasma).

- Compartment 2: Peripheral tissue compartment.

- Parameters: `V` (volume, central), `k12`, `k21` (distribution rate constants), `ke` (elimination rate constant).
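Before committing this structure to a model file, it can help to integrate the equations directly. The following is a minimal base-R sketch using a fixed-step Runge-Kutta scheme; it is not Pmetrics model-file syntax, and the function names and parameter values are hypothetical.

```r
# Right-hand side of a two-compartment IV bolus model.
# a = c(A1, A2): amounts in the central and peripheral compartments.
two_cmt_rhs <- function(a, p) {
  c(-(p$ke + p$k12) * a[1] + p$k21 * a[2],
    p$k12 * a[1] - p$k21 * a[2])
}

# Fixed-step fourth-order Runge-Kutta integrator (base R only).
simulate_two_cmt <- function(dose, p, t_end = 24, dt = 0.01) {
  times <- seq(0, t_end, by = dt)
  a <- matrix(0, nrow = length(times), ncol = 2)
  a[1, ] <- c(dose, 0)                 # bolus into the central compartment
  for (i in seq_len(length(times) - 1)) {
    y  <- a[i, ]
    k1 <- two_cmt_rhs(y, p)
    k2 <- two_cmt_rhs(y + dt / 2 * k1, p)
    k3 <- two_cmt_rhs(y + dt / 2 * k2, p)
    k4 <- two_cmt_rhs(y + dt * k3, p)
    a[i + 1, ] <- y + dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
  }
  data.frame(time = times, A1 = a[, 1], A2 = a[, 2], conc = a[, 1] / p$V)
}

pars <- list(V = 20, ke = 0.15, k12 = 0.4, k21 = 0.2)  # illustrative values
sim  <- simulate_two_cmt(dose = 500, p = pars)
```

The total amount `A1 + A2` should decline monotonically, since drug leaves the system only through `ke`; a profile that violates this usually points to a sign error in one of the transfer terms.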

Write the Model Text File (`2compIV.txt`):

Define the Corresponding Error Model File (`errorPolynomial.txt`):

Common Syntax Errors and Debugging Protocol

Debugging involves iterative testing within Pmetrics using the NPfit or ITfit functions and examining error messages.

Table 2: Common Model File Errors and Solutions

| Error Type | Example | Debugging Action |

|---|---|---|

| Undefined Variable | dAdt[1] = K21*A[2] - K12*A[1] (if K21 not defined) | Ensure all rate constants are declared as ke, k12, etc., or as input parameters. |

| Compartment Index Error | Referencing A[3] in a 2-compartment model. | Verify A[] indices match the total number of compartments. |

| Syntax Format | Using dA[1]/dt instead of dAdt[1]. | Adhere strictly to Pmetrics syntax: dAdt[n] or dA[n]. |

| Missing Output | No C = ... statement. | At least one output equation is required for fitting. |

Experimental Debugging Workflow:

- Simulation Test: Use `PMsim` in Pmetrics to simulate data from the model prior to fitting. Successful simulation confirms basic structural integrity.

- Limit Checks: Fit the model to a small, simplified subset of data to isolate errors.

- Log File Inspection: Carefully review Pmetrics output log files for warnings and specific line-number error messages.

- Incremental Complexity: Start with a one-compartment model, confirm it runs, then add complexity (e.g., absorption, additional compartments).

Diagram: Model File Development and Debugging Workflow

Diagram Title: PK/PD Model File Debugging Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

| Item | Category | Function/Purpose |

|---|---|---|

| Pmetrics R Package | Software | Core engine for nonparametric and parametric population PK/PD analysis. |

| R & RStudio | Software | Provides the computational environment and interface for running Pmetrics. |

| Model Text File (.txt) | Digital Asset | Contains the structural PK/PD model definition in Pmetrics-specific syntax. |

| Error Model File (.txt) | Digital Asset | Defines the residual unexplained variability (RUV) model for fitting. |

| Instruction File (.csv) | Digital Asset | Links data, model, and error files and specifies run control parameters. |

| Notepad++ / Visual Studio Code | Software | Text editors with syntax highlighting for clearer model file writing and debugging. |

| Simulated Dataset | Data | Crucial for initial model file testing and validation before using experimental data. |

| Pmetrics Manual & Vignettes | Documentation | Primary reference for correct syntax, examples, and troubleshooting guidance. |

Within the broader thesis on Pmetrics software for population pharmacokinetic (PK) and pharmacodynamic (PD) modeling, the execution of its core nonparametric (NPAG) and parametric (IT2B) Bayesian algorithms is foundational. This document provides detailed application notes and protocols for researchers to successfully implement these engines, which are essential for harnessing the full power of Pmetrics in drug development research.

Table 1: Comparison of NPAG and IT2B Algorithms in Pmetrics

| Feature | NPAG (Nonparametric Adaptive Grid) | IT2B (Iterative Two-Stage Bayesian) |

|---|---|---|

| Algorithm Type | Nonparametric Maximum Likelihood | Parametric, Bayesian |

| Assumption | No predefined distribution for parameters; infers shape from data. | Parameters are assumed to follow a multivariate log-normal distribution. |

| Primary Output | A discrete set of support points (vectors) with associated probabilities. | Population mean (Mu), covariance matrix (Omega), and individual Bayesian posterior parameter estimates. |

| Strengths | Can identify complex, multimodal distributions; no distributional assumptions. | Efficient with smaller datasets; provides direct estimates of variance-covariance. |

| Typical Use Case | Exploratory analysis, identifying subpopulations, when parameter distribution is unknown. | When a parametric, log-normal distribution is a reasonable prior assumption. |

| Computational Demand | High, especially with many parameters and data points. | Generally lower than NPAG. |

Experimental Protocol: Standard Workflow for a Population PK Run

Protocol 1: Preparing and Executing a Population PK Analysis with NPAG/IT2B

Objective: To estimate population and individual PK parameters from sparse, noisy drug concentration-time data.

Materials & Software:

- Pmetrics R package (v1.5.2 or later) installed in R (≥4.0.0).

- RStudio (recommended).

- Required data files: 1) `DATA.csv` (observation file), 2) `MODEL.R` (structural PK model), 3) `INIT.csv` (prior parameter ranges/values).

Procedure:

Data Preparation:

- Create the `DATA.csv` file with mandatory columns: `ID`, `TIME`, `DV` (dependent variable, e.g., concentration), `EVID` (event ID: 0=observation, 1=dose), `AMT` (dose amount), and `RATE` (infusion rate). Covariates (e.g., `WT`, `AGE`) can be included in additional columns.

Model Definition:

- Create the `MODEL.R` file. This is an R script defining the structural model.

- Example for a one-compartment IV model:

Prior Specification:

- Create the `INIT.csv` file. For NPAG, define minimum (`min`) and maximum (`max`) values for each parameter to set the search space. For IT2B, define initial mean (`init`) and standard deviation (`sd`) estimates.

Table 2: Example INIT.csv Structures

| Algorithm | par | min | max | init | sd |

|---|---|---|---|---|---|

| NPAG | CL | 0.1 | 10 | - | - |

| NPAG | V | 5 | 100 | - | - |

| IT2B | CL | - | - | 2.5 | 0.5 |

| IT2B | V | - | - | 25 | 5 |

Run Execution in R:

- Load Pmetrics and run the chosen engine.

- For NPAG:

- For IT2B:

Diagnostic Evaluation & Output:

- Use `plot(npag.run)` or `plot(it2b.run)` for standard diagnostic plots (obs vs. pred, residuals).

- Use `summary(npag.run)` or `summary(it2b.run)` to obtain final parameter estimates, likelihoods, and goodness-of-fit metrics.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Pmetrics Analysis

| Item | Function/Explanation |

|---|---|

| Pmetrics R Package | The core software suite containing the NPAG and IT2B engines, data simulators, and diagnostic tools. |

| R and RStudio | The statistical programming environment and integrated development environment (IDE) required to execute Pmetrics. |

| Structured Data File (DATA.csv) | The formatted, clean dataset of patient/dosing/observation information. This is the primary experimental reagent. |

| Mathematical Model File (MODEL.R) | Defines the structural PK/PD relationships and error models, analogous to a biochemical assay protocol. |

| Prior Initialization File (INIT.csv) | Specifies the search space (NPAG) or starting estimates (IT2B) for model parameters. |

| Goodness-of-Fit Plots | Essential diagnostic tools (e.g., observed vs. population/individual predicted concentrations) to validate model performance. |

| Simulation and Validation Dataset | A separate, external dataset not used for model building, crucial for final model qualification and predictive performance testing. |

Algorithm Execution and Decision Pathways

Diagram Title: Pmetrics Algorithm Selection and Execution Workflow

Detailed NPAG Engine Convergence Process

Diagram Title: NPAG Adaptive Grid Iteration Cycle

Within the broader thesis on the application of Pmetrics software for population pharmacokinetic (PK) and pharmacodynamic (PD) modeling, the interpretation of final outputs is the critical step that translates computational results into scientific insight. This document provides detailed Application Notes and Protocols for interpreting Final Cycle Plots, Support Points, and key statistical metrics, enabling robust decision-making in drug development research.

Data Presentation: Key Output Metrics

The following tables summarize the primary quantitative outputs from a Pmetrics run that must be evaluated.

Table 1: Summary of Final Cycle Goodness-of-Fit Metrics

| Metric | Formula/Description | Ideal Value | Interpretation in Context |

|---|---|---|---|

| Log-Likelihood | Final value of the objective function (LL). | Higher (less negative) | Indicates better model fit. Used for comparing nested models. |

| Akaike Information Criterion (AIC) | AIC = -2LL + 2P (P = # parameters). | Lower | Balances model fit and complexity. For non-nested model comparison. |

| Bayesian Information Criterion (BIC) | BIC = -2LL + P·ln(N) (N = # observations). | Lower | Similar to AIC but with stronger penalty for parameters. |

| Mean Error (ME) / Bias | Mean of (Observed - Predicted). | ~0 | Systematic bias. Positive = model under-predicts; Negative = model over-predicts. |

| Mean Absolute Error (MAE) | mean(abs(Observed - Predicted)). | Lower (close to 0) | Average magnitude of prediction error, not direction. |

| Root Mean Squared Error (RMSE) | sqrt(mean((Observed - Predicted)^2)). | Lower | Standard deviation of prediction errors. Sensitive to outliers. |

| Coefficient of Determination (R²) | 1 - (SSresidual / SStotal). | Close to 1 | Proportion of variance explained by the model. |
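The metrics in Table 1 can be computed from paired observed and predicted values with a few lines of base R. This is a sketch only: the function name `fit_metrics` is hypothetical, the example values are made up, and the log-likelihood and parameter count are assumed to be supplied by the user from the run output.

```r
# Goodness-of-fit metrics from paired observed and predicted values.
# ll = final log-likelihood, n_par = number of parameters (user supplied).
fit_metrics <- function(obs, pred, ll = NA, n_par = NA) {
  err <- obs - pred
  n   <- length(obs)
  list(
    ME   = mean(err),                 # bias; positive = model under-predicts
    MAE  = mean(abs(err)),
    RMSE = sqrt(mean(err^2)),
    R2   = 1 - sum(err^2) / sum((obs - mean(obs))^2),
    AIC  = -2 * ll + 2 * n_par,
    BIC  = -2 * ll + n_par * log(n)
  )
}

obs  <- c(10, 8, 6, 4)   # hypothetical observations
pred <- c(9, 8, 7, 4)    # hypothetical predictions
m <- fit_metrics(obs, pred, ll = -12.3, n_par = 2)
```

Computing the metrics separately for population and individual predictions makes it easier to see whether a poor fit comes from the structural model or from between-subject variability.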

Table 2: Interpretation of Final Cycle Support Points

| Support Point Attribute | Description | Pharmacokinetic/Clinical Interpretation |

|---|---|---|

| Location (Θ) | The parameter value vector for that support point. | Represents a distinct, discrete set of PK parameters (e.g., Clearance, Volume). |

| Probability (Π) | The mass or probability assigned to the support point. | The estimated proportion of the population best described by that parameter set. |

| Number of SPs | Final count of non-zero probability support points. | Indicates population complexity. Too few may oversimplify; too many may overfit. |

| Covariate Relationships | Plotting SP parameter values vs. covariates (e.g., weight, creatinine clearance). | Visual assessment of covariate influence without formal parametric models. |
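To make the attributes in Table 2 concrete, the sketch below summarizes a small set of support points in base R. The data frame `sp`, its column names, and its values are hypothetical stand-ins for the final-cycle output, not the actual Pmetrics object layout.

```r
# Hypothetical final-cycle support points: one row per point,
# parameter columns plus the probability assigned to that point.
sp <- data.frame(
  CL   = c(1.8, 2.6, 4.9),
  V    = c(22, 30, 41),
  prob = c(0.5, 0.3, 0.2)
)

# Probabilities should sum to ~1.
stopifnot(abs(sum(sp$prob) - 1) < 1e-8)

# Probability-weighted mean and SD of a parameter.
weighted_summary <- function(x, w) {
  mu <- sum(w * x)
  c(mean = mu, sd = sqrt(sum(w * (x - mu)^2)))
}

cl_summary <- weighted_summary(sp$CL, sp$prob)
v_summary  <- weighted_summary(sp$V, sp$prob)
```

Weighted moments are only a summary; with a multimodal distribution the individual support points remain the more informative description.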

Experimental Protocols

Protocol 3.1: Standard Workflow for Interpreting a Pmetrics Run Output

Objective: To systematically evaluate the success and reliability of a population PK model run in Pmetrics.

- Inspect Run Completion: Verify the run completed without errors (check `cycle.log` and `run.log` files).

- Examine Convergence:

  - Plot the objective function value (LL) vs. cycle number. A stable plateau indicates convergence.

  - Check trace plots for key parameters (e.g., Clearance, Volume) across cycles for stability.

- Assess Final Cycle Plots:

  - Generate and review the observed vs. population predicted (PRED) and individual predicted (IPRED) plots.

  - Generate and review the conditional weighted residuals (CWRES) vs. time and vs. PRED plots.

  - Acceptance Criteria: Data points should scatter randomly around the line of identity (Obs vs. Pred) and the zero line (residuals). No systematic trends should be apparent.

- Analyze Support Points:

  - Load the final support points file (`NPAGfinal.Rdata`).

  - Tabulate support point locations and probabilities. Ensure probabilities sum to ~1.

  - Create scatter plots of parameter values from support points against relevant patient covariates.

- Calculate and Review Metrics:

  - Compute bias (ME), precision (MAE, RMSE), and R² from the final predictions file.

  - Record the final LL, AIC, and BIC from the output.

- Compare Models: If multiple models were run, construct a comparison table (see Table 1) to select the best model based on statistical metrics, parsimony, and clinical plausibility.

Protocol 3.2: Procedure for Generating Predictive Simulations from Final Support Points

Objective: To utilize the final population model for Monte Carlo simulation of alternative dosing scenarios.

- Define Simulation Scenario: Specify the new dosing regimen(s), simulated patient population (covariate distributions), and desired output times.

- Assemble Input: Use the final support point locations (Θ) and probabilities (Π) as the discrete population parameter distribution.

- Perform Simulation: Use the `simulator` function in Pmetrics or an external tool. For each simulated subject, randomly assign a parameter set from the support points, weighted by their probabilities. Add residual error.

- Summarize Output: Calculate median and prediction intervals (e.g., 5th-95th percentiles) for the PK profile across all simulated subjects at each time point.

- Visualize: Plot the median and prediction intervals of the simulated concentration-time profiles. Overlay therapeutic target ranges, if known, to assess probability of target attainment.
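The sampling and summary steps of Protocol 3.2 can be sketched in base R without any Pmetrics calls. Everything here is an assumption made for illustration: the support points, the single 500 mg IV bolus, the one-compartment structure, and the 10% proportional residual error.

```r
set.seed(42)  # reproducible sketch

# Hypothetical support points (CL in L/h, V in L) and their probabilities.
sp <- data.frame(CL = c(1.8, 2.6, 4.9), V = c(22, 30, 41),
                 prob = c(0.5, 0.3, 0.2))

n_sub <- 1000
times <- seq(0, 24, by = 1)
dose  <- 500     # mg, single IV bolus (assumed scenario)
cv    <- 0.10    # assumed proportional residual error (10% CV)

# Assign each simulated subject a support point, weighted by probability.
idx <- sample(nrow(sp), n_sub, replace = TRUE, prob = sp$prob)

# One-compartment bolus profile per subject; rows = subjects, columns = times.
pred <- t(sapply(idx, function(i) {
  (dose / sp$V[i]) * exp(-(sp$CL[i] / sp$V[i]) * times)
}))

# Add proportional residual error.
sim <- pred * (1 + matrix(rnorm(length(pred), sd = cv), nrow = n_sub))

# 5th, 50th, and 95th percentiles at each time point.
band <- apply(sim, 2, quantile, probs = c(0.05, 0.5, 0.95))
```

The rows of `band` can be drawn with `matplot(times, t(band), type = "l")` to get the median and prediction interval in one plot.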

Mandatory Visualizations

Diagram Title: Pmetrics Output Interpretation & Model Qualification Workflow

Diagram Title: From Support Points to Population Simulation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Pmetrics-Based Population PK Analysis

| Item | Function & Application in Analysis |

|---|---|

| Pmetrics Software Suite (R package) | Core environment for performing NPAG and IT2B population modeling, simulation, and graphics. |

| R Statistical Environment (v4.0+) | The required platform for running Pmetrics. Used for data manipulation, custom graphing, and advanced statistics. |

| Non-parametric Adaptive Grid (NPAG) Algorithm | The primary engine within Pmetrics for estimating discrete, multivariate parameter distributions (support points) without assuming a shape. |

| Final Cycle Output Files (NPAGfinal.Rdata, ..._fit.csv) | Contain the final support points, predictions, and residuals essential for all interpretation protocols. |

| Model Qualification Scripts (Custom R scripts) | Automated scripts to generate standard goodness-of-fit plots, calculate metrics, and compare model performance. |

| Monte Carlo Simulator (Pmetrics simulator or Mrgsolve) | Tool to conduct predictive simulations using the final model's support points as the parameter population. |

| Clinical Dataset (.csv format) | Properly formatted file containing time-concentration data, dosing records, and patient covariates (e.g., weight, renal function). |

This application note details the use of NPsim, a component of the Pmetrics software suite for nonparametric population pharmacokinetic (PK) and pharmacodynamic (PD) modeling. Within the broader thesis of Pmetrics research, NPsim serves as the critical tool for forward simulation and dosage regimen optimization. After model development and validation within the core Pmetrics engines (NPAG, NPEM), NPsim utilizes the finalized nonparametric joint parameter density to generate probabilistic predictions of drug concentrations and effects under novel dosing scenarios, thereby bridging model inference to clinical or preclinical trial design.

NPsim Core Workflow and Protocol

The fundamental workflow for regimen design with NPsim follows a structured protocol.

Figure 1. NPsim workflow for regimen optimization.

Protocol 2.1: Executing a Forward Simulation with NPsim

Objective: To predict the probability of target attainment (PTA) for three candidate dosing regimens of a novel antibiotic against a population of simulated patients.

- Model Input: Use the validated final model file (`FinalModel.rta`) from a prior NPAG analysis.

- Population Definition: In the NPsim control file, specify:

  - `NSUB = 5000` (simulate 5000 virtual subjects).

  - Define covariate distributions (e.g., `WT ~ N(70, 15)` kg, `CRCL ~ Lognormal(4.6, 0.3)` mL/min).

- Regimen Design: Define three regimens in the `INPUT` section of the control file:

  - Regimen A: 500 mg IV q12h over 1h infusion.

  - Regimen B: 750 mg IV q12h over 1h infusion.

  - Regimen C: 1000 mg IV q24h over 1h infusion.

- Output Specification: Set `OUTPUT = PRED` to generate predictions. Specify a fine time grid for output (e.g., every 0.1h over 72h).

- Simulation Execution: Run NPsim via command line: `Rscript NP_Run_NPsim.R controlfile.ctl`.

- Data Analysis: Import the resulting `profile.csv` into R. Calculate the PTA for each regimen as the proportion of simulated subjects achieving `fT > MIC` of 60% over 24h for a range of MICs (0.25 to 16 mg/L).
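The final data-analysis step reduces to counting, per subject, the fraction of time points above each MIC. The base-R sketch below shows one way to do this; the function name `pta_ft_mic` is hypothetical, the profiles are synthetic stand-ins for the simulator output rather than a parsed `profile.csv`, and concentrations are assumed to be free drug on an equally spaced grid.

```r
# conc: matrix of simulated free concentrations, rows = subjects,
#       columns = equally spaced time points covering the 24 h window.
# Returns the PTA for each MIC: the share of subjects whose fraction of
# time above the MIC is at least `target`.
pta_ft_mic <- function(conc, mics, target = 0.60) {
  sapply(mics, function(mic) {
    frac_above <- rowMeans(conc > mic)  # per-subject fT>MIC on a uniform grid
    mean(frac_above >= target)
  })
}

# Synthetic stand-in for simulator output: one-compartment profiles
# after a single bolus, with log-normally distributed elimination rates.
set.seed(1)
times <- seq(0.1, 24, by = 0.1)
ke    <- rlnorm(2000, meanlog = log(0.1), sdlog = 0.3)
conc  <- t(sapply(ke, function(k) 20 * exp(-k * times)))

mics <- c(0.25, 1, 2, 4, 8, 16)
pta  <- setNames(pta_ft_mic(conc, mics), mics)
```

Because `rowMeans(conc > mic)` treats every grid point equally, the time grid must be uniform; an irregular grid would need interval-weighted durations instead.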

Table 1: Example Probability of Target Attainment (PTA) Results

| MIC (mg/L) | PTA for Regimen A (500 mg q12h) | PTA for Regimen B (750 mg q12h) | PTA for Regimen C (1000 mg q24h) |

|---|---|---|---|

| 0.25 | 99.8% | 100.0% | 99.5% |

| 1 | 95.2% | 99.1% | 89.7% |

| 2 | 82.5% | 94.3% | 70.4% |

| 4 | 60.1% | 78.9% | 45.6% |

| 8 | 30.5% | 45.2% | 20.1% |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Pmetrics/NPsim Research

| Item | Function in NPsim Workflow |

|---|---|

| Pmetrics Software Suite (v1.5.0+) | Core environment containing NPAG for model development, NPEM for validation, and NPsim for forward simulation. |

| R Statistical Platform (v4.0.0+) | Required backbone for running Pmetrics scripts and performing subsequent data analysis/visualization. |

| Validated Nonparametric Model (.rta file) | The final joint parameter distribution output from NPAG, serving as the essential input for NPsim simulations. |

| Covariate Dataset (.csv) | Patient demographic/clinical data used to define the virtual population distribution in NPsim control files. |

| R IDE (e.g., RStudio) | Provides an integrated environment for script editing, execution, and debugging of Pmetrics runs. |

| Post-processing R Script Library | Custom scripts for parsing profile.csv output, calculating PTA, AUC, and generating publication-quality plots. |

Advanced Protocol: Optimizing a Regimen for a PD Target

Protocol 4.1: Monte Carlo Simulation for AUC/MIC Target Optimization

Objective: Design a dose that achieves a probabilistic target of AUC0-24/MIC > 100 in >90% of simulated patients for an MIC of 2 mg/L.

- Base Simulation: Configure NPsim to simulate a range of steady-state doses (e.g., from 200 mg to 1500 mg daily) in the same virtual population.

- Output Calculation: For each simulated subject and dose, calculate the `AUC0-24` from the predicted concentration-time profile using the trapezoidal rule.

- Target Assessment: For each dose level, compute the ratio `AUC0-24 / 2` (MIC=2). Determine the proportion of subjects with a ratio > 100.

- Dose Selection: Identify the minimum dose that achieves the >90% target attainment. Perform sensitivity analysis around covariates (e.g., renal function).
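The calculation and selection steps of Protocol 4.1 can be prototyped in base R as follows. This is a sketch under stated assumptions: the virtual population, the one-compartment steady-state bolus profile, and the dose grid are all hypothetical, and the numbers it produces are not those of Table 3.

```r
# Trapezoidal AUC for one concentration-time profile.
auc_trapz <- function(time, conc) {
  sum(diff(time) * (head(conc, -1) + tail(conc, -1)) / 2)
}

# Hypothetical virtual population: clearance (L/h) and volume (L).
set.seed(7)
n_sub <- 2000
cl <- rlnorm(n_sub, log(4), 0.3)
v  <- rlnorm(n_sub, log(30), 0.2)

times <- seq(0, 24, by = 0.25)
mic   <- 2
doses <- c(500, 750, 1000, 1250, 1500)

# Steady-state once-daily bolus profile (assumed one-compartment model),
# then the share of subjects with AUC0-24 / MIC > 100 at each dose.
attain <- sapply(doses, function(d) {
  auc <- mapply(function(cl_i, v_i) {
    k  <- cl_i / v_i
    c0 <- (d / v_i) / (1 - exp(-k * 24))   # steady-state peak
    auc_trapz(times, c0 * exp(-k * times))
  }, cl, v)
  mean(auc / mic > 100)
})

# Smallest dose meeting the >90% target (NA if none does).
best_dose <- doses[which(attain > 0.90)[1]]
```

The same population is reused across dose levels, so differences in attainment reflect the dose alone rather than sampling noise between populations.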

Figure 2. Dose optimization logic using NPsim.

Table 3: Dose Optimization Results for AUC/MIC > 100 Target (MIC=2 mg/L)

| Daily Dose (mg) | Median Simulated AUC0-24 (mg·h/L) | AUC/MIC > 100 (% of Subjects) |

|---|---|---|

| 500 | 85 | 45% |

| 750 | 128 | 78% |

| 1000 | 170 | 92% |

| 1250 | 213 | 98% |

NPsim is an indispensable tool within the Pmetrics thesis, enabling the transition from population PK/PD models to actionable, optimized dosing regimens. Through Monte Carlo simulation, it quantifies the expected variability in drug exposure and effect, supporting robust, probability-based regimen design for both preclinical and clinical drug development.

Solving Common Pmetrics Challenges: Error Diagnostics and Performance Tuning

Within the context of PK/PD analysis using the Pmetrics software package in R, a critical bottleneck in research productivity is the interpretation of error messages encountered during data loading and model compilation. These errors, often cryptic, halt the workflow of population pharmacokinetic modeling. This document provides structured protocols and decoding strategies to address common failure points, enabling researchers to efficiently diagnose and resolve issues, thereby accelerating the drug development research pipeline.

Common Data Loading Errors & Protocols

Data loading in Pmetrics, primarily via the PM_data function, typically fails because of formatting inconsistencies, missing required columns, or data type mismatches.

Table 1: Common Pmetrics Data Loading Errors and Solutions

| Error Message Snippet | Likely Cause | Diagnostic Protocol | Resolution Protocol |

|---|---|---|---|

| "Error in read.table: no lines available in input" | Incorrect file path or empty file. | 1. Use file.exists() to verify path. 2. Open file in text editor to confirm content. | Correct the file path or ensure the data file is not empty. |

| "Missing required columns" | Data file lacks mandatory columns (e.g., ID, time, dose, conc, covariate columns). | 1. Check ?PM_data for required column headers. 2. Compare data frame headers against requirements. | Rename or add the required columns as per Pmetrics specification. |

| "Non-numeric data in column..." | Categorical data or text entries in numeric-only fields (e.g., concentration). | 1. Use str(data) to examine column classes. 2. Identify rows with NA or text values. | Convert data to numeric; use as.numeric() or clean source data. |

| "Time or dose records are not ascending for subject..." | Dosing/observation records for an individual are not in chronological order. | 1. Sort data by ID and time. 2. Check for duplicate time entries with conflicting records. | Pre-sort the raw data file by subject ID and time. |

Protocol P-DL01: Validating Data File Structure for Pmetrics

- File Preparation: Save the raw data as a comma-separated values (.csv) file in a dedicated project folder.

- R Environment Setup: In R, set the working directory to the project folder using `setwd()`.

- Preliminary Read: Load the data into a generic R data frame: `df <- read.csv("yourfile.csv", stringsAsFactors = FALSE)`.

- Structure Check: Execute `str(df)` and `head(df)` to verify column names, data types, and the presence of unexpected `NA` values.

- Column Verification: Ensure the existence of at least: `id`, `time`, `dose`, `conc` (or `out`).

- Data Type Conversion: Convert any incorrect columns using, e.g., `df$conc <- as.numeric(df$conc)`.

- Chronological Order Check: For each subject (`unique(df$id)`), confirm time values are non-decreasing.

- Final Pmetrics Load: Attempt to create the Pmetrics data object: `data <- PM_data$new("yourfile.csv")`.
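The column, type, and ordering checks in Protocol P-DL01 can be bundled into a single pre-flight function. The sketch below uses base R only; `check_pk_data` is a hypothetical helper rather than part of Pmetrics, and it assumes the concentration column is named `conc` (adjust `required` if the file uses `out`).

```r
# Checks a data frame against the minimal layout named in the protocol.
# Returns a character vector of problems (empty if none were found).
check_pk_data <- function(df, required = c("id", "time", "dose", "conc")) {
  problems <- character(0)

  missing_cols <- setdiff(required, names(df))
  if (length(missing_cols) > 0) {
    return(paste("Missing column:", missing_cols))
  }

  # Numeric-only fields must not contain text.
  for (col in c("time", "dose", "conc")) {
    if (!is.numeric(df[[col]])) {
      problems <- c(problems, paste("Non-numeric column:", col))
    }
  }

  # Within each subject, time must be non-decreasing.
  if (is.numeric(df$time)) {
    unsorted <- tapply(df$time, df$id, is.unsorted)
    bad_ids  <- names(unsorted)[unsorted]
    if (length(bad_ids) > 0) {
      problems <- c(problems, paste("Time not ascending for subject", bad_ids))
    }
  }
  problems
}

# Hypothetical example: subject 2 has records out of order.
df <- data.frame(id = c(1, 1, 2, 2), time = c(0, 2, 4, 1),
                 dose = c(100, NA, 100, NA), conc = c(NA, 5.2, NA, 3.1))
issues <- check_pk_data(df)
```

Running a check like this before the final load step separates plain formatting problems from errors raised by Pmetrics itself.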

Common Model Compilation Errors & Protocols

Model compilation errors occur during the creation of a PM_model object or when generating Fortran code for simulation and estimation, often due to syntax errors in the model file.

Table 2: Common Pmetrics Model Compilation Errors and Solutions

| Error Message Snippet | Likely Cause | Diagnostic Protocol | Resolution Protocol |

|---|---|---|---|

| "Undefined variable in PRED block" | Variable used in PRED is not defined in INIT or PAR blocks. | 1. List all variables in PRED block. 2. Cross-reference with INIT and PAR block definitions. | Define the variable in the INIT block or add it to the parameter list. |

| "Syntax error in model file near..." | Typos, unmatched parentheses, or incorrect Fortran syntax. | 1. Examine the model file line indicated in the error. 2. Check for missing commas, parentheses, or operators. | Correct the syntax following standard Fortran/Pmetrics conventions. |

| "Error in fortran.compile" | Issues with the local Fortran compiler installation or path. | 1. Check if gfortran is installed via system command line. 2. Verify R tools (on Windows) are correctly installed. | Reinstall Rtools (Windows) or gfortran (Mac/Linux) and ensure the system PATH is updated. |

Protocol P-MC01: Debugging a Pmetrics Model File

- Use Template: Start from a known working model file (e.g., from Pmetrics examples).

- Incremental Modification: Make small, sequential changes to the template, re-compiling after each change: `model <- PM_model$new("model.txt")`.

- Block Isolation: If an error arises, comment out (`#`) all lines in the `PRED` block and reintroduce logic line-by-line.

- Variable Audit: For every variable in the `PRED` block, trace its origin to the `INIT` (for compartment amounts) or `PAR` (for parameters) blocks.

- Fortran Compiler Check: In R, run `system("gfortran --version")` to confirm compiler accessibility. If not found, reinstall necessary tools.
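For scripted setups, the compiler check in the last step can be done without spawning a shell command that may itself fail. The sketch below uses base R's `Sys.which()`; the wording of the messages is illustrative.

```r
# Sys.which() returns the full path to an executable, or "" if it is
# not on the PATH, so it is safe to call even when gfortran is absent.
gfortran_path <- Sys.which("gfortran")

if (nzchar(gfortran_path)) {
  message("gfortran found at: ", gfortran_path)
} else {
  message("gfortran not found on PATH; reinstall Rtools (Windows) ",
          "or gfortran (Mac/Linux) and restart R.")
}
```

Placing this at the top of an analysis script surfaces a missing compiler before any model compilation is attempted.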

Visualization of Error Diagnosis Workflows

Title: Pmetrics Error Diagnosis and Resolution Workflow

Title: Pmetrics Model Compilation Process and Failure Point

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Pmetrics Workflow |

|---|---|

| R and RStudio | Core computational environment for running Pmetrics package and executing analysis scripts. |

| Pmetrics R Package | The primary software suite for nonparametric and parametric population PK/PD modeling and simulation. |

| gfortran Compiler | Open-source Fortran compiler required by Pmetrics to translate model specifications into executable code. |

| Rtools (Windows) | A collection of tools necessary for building R packages and providing a compatible gfortran compiler on Windows systems. |

| Notepad++ or VS Code | Text editor for inspecting and debugging raw data (.csv) and model (.txt) files without hidden formatting. |

| Structured Data Template | A pre-validated .csv file with correct column headers (ID, time, dose, conc, etc.) to ensure data format compliance. |

| Validated Model Template | A simple, working Pmetrics model file (e.g., one-compartment IV) used as a starting point for new models. |

| PM_data$new() & PM_model$new() | The key R functions whose error outputs are the primary subject of the diagnostic protocols in this document. |

Troubleshooting Convergence Issues in NPAG and IT2B

Pmetrics is a robust R package for nonparametric and parametric population pharmacokinetic/pharmacodynamic (PK/PD) modeling. Its core engines, NPAG (Nonparametric Adaptive Grid) and IT2B (Iterative Two-Stage Bayesian), are powerful but can suffer from convergence failures. This document, framed within a broader thesis on advancing Pmetrics for rigorous population analysis, provides application notes and protocols to diagnose and resolve these issues, ensuring reliable parameter estimation for researchers and drug development professionals.

Common Convergence Issues and Diagnostic Table

Table 1: Convergence Failure Modes in NPAG and IT2B

| Issue | NPAG Manifestation | IT2B Manifestation | Likely Root Cause |

|---|---|---|---|

| Failure to Converge | Cycling grids, never reaching tolerance (<0.001). | Parameter estimates oscillate without stabilizing. | Model misspecification, insufficient data, overly wide prior distributions. |

| Premature Convergence | Stops early at a high tolerance (>0.01) with poor likelihood. | Stops after minimal iterations with insignificant change. | Bug in model file, error in data file format (e.g., dose or time units), trapped in local maxima. |

| Numerical Instability | -1*LL becomes NA or Inf. Grid probabilities collapse. | Omega matrix becomes singular (non-positive definite). Standard errors explode. | Correlated parameters, over-parameterization, uncontrolled ODE solver, near-zero residual error (gamma). |

| Support Point Collapse | Final grid reduces to very few unique support points (< N subjects). | N/A (parametric method). | Unidentifiable model, extreme covariance, or data inconsistent with structural model. |

Experimental Protocols for Systematic Troubleshooting

Protocol 3.1: Initial Diagnostic Workflow

Objective: Isolate the source of convergence failure.

- Verify Input Files: Use the `PMcheck()` function on your model (`*.txt`) and data (`*.csv`) files.

- Run a Simplified Model: Remove covariates and fix problematic parameters to known values. Test convergence.

- Validate Data Ranges: Ensure dose, concentration, and time units are consistent. Identify outliers.

- Increase Iterations: Temporarily set `cyc` to a large number (e.g., 5000) to observe behavior.

- Examine Output Logs: Scrutinize the `[run].log` file for warnings, errors, or abnormal parameter progression.
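The "Validate Data Ranges" step above can be sketched in base R. Everything here is an assumption made for illustration: the column names (`id`, `time`, `dose`, `out`), the toy values, and the outlier rule (distance from the median on the log10 scale, in units of the median absolute deviation). It is a screening aid, not a Pmetrics function.

```r
# Toy data for illustration only; the column names are assumptions.
dat <- data.frame(
  id   = c(1, 1, 1, 2, 2, 2),
  time = c(0, 1, 4, 0, 1, 4),
  dose = c(500, NA, NA, 500, NA, NA),
  out  = c(NA, 12.1, 6.3, NA, 11500, 5.9)   # 11500 mimics a unit slip
)

# Flag observations far from the median on the log10 scale.
flag_outliers <- function(x, k = 3) {
  lx <- log10(x)
  centre <- median(lx, na.rm = TRUE)
  spread <- mad(lx, na.rm = TRUE)
  !is.na(lx) & abs(lx - centre) > k * spread
}

# Times should be non-negative and non-decreasing within each subject.
time_ok <- tapply(dat$time, dat$id, function(t) all(t >= 0) && !is.unsorted(t))

dat$suspect <- flag_outliers(dat$out)
print(time_ok)
print(dat[dat$suspect, ])
```

A value that is off by roughly three orders of magnitude, as in the toy row, usually points to a unit mismatch rather than a true outlier and is worth checking against the source records.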

Protocol 3.2: Addressing Numerical Instability in Differential Equations

Objective: Achieve stable numerical integration.

- Adjust ODE Solver Settings: In the model file, modify `ADVAN` and `TOL` (e.g., `ADVAN13`, `TOL=9`).

- Constrain Parameters: Apply biologically plausible lower (`ILB`) and upper (`IUB`) bounds to prevent unrealistic values.

- Scale Parameters: If parameter values span >6 orders of magnitude, re-scale (e.g., express in log units) to improve matrix conditioning.

- Re-evaluate Error Model: If gamma (the multiplicative error term) is estimated near zero, fix it to a small positive value (e.g., 0.1) or a known assay error.
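The "Scale Parameters" rule above amounts to a simple check on the spread of parameter magnitudes. The sketch below uses hypothetical typical values (the names and numbers are invented for illustration) and assumes that a re-scaled parameter is exponentiated again inside the model equations.

```r
# Hypothetical typical values, for illustration only.
typical <- c(Ke = 0.05, V = 40, EC50 = 2.5e-5, Emax = 80)

# Orders of magnitude between the smallest and largest parameter.
span <- diff(range(log10(typical)))
needs_rescale <- span > 6

# Natural-log scale for estimation; exp() recovers the original values.
log_typical <- log(typical)
back <- exp(log_typical)

print(round(span, 2))
print(needs_rescale)
print(round(log_typical, 2))
```

On the log scale the four values fall within a range of about 15 units instead of spanning more than six decades, which is the conditioning benefit the protocol refers to.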

Protocol 3.3: IT2B-Specific Covariance Matrix Stabilization

Objective: Resolve singular Omega matrix issues.

- Use a Diagonal Omega: Start with a diagonal covariance matrix (no correlations). Add correlations only if supported by data.

- Apply Shrinkage Priors: Use the `prior` functionality in `IT2B` to regularize estimates toward initial guesses.

- Manual Ridge Conditioning: Add a small constant (e.g., 1e-4) to the diagonal of the Omega matrix during estimation if singularity is detected programmatically.
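The ridge-conditioning step above can be illustrated on a standalone matrix. This is a base-R sketch under stated assumptions: `stabilise_omega` is a hypothetical helper, the example matrix is constructed to be singular (perfect correlation), and singularity is detected with an absolute eigenvalue threshold. How such a correction would be hooked into an estimation run is outside the sketch.

```r
# Add a small ridge to the diagonal until the matrix is positive definite.
stabilise_omega <- function(omega, ridge = 1e-4, max_tries = 10) {
  for (i in seq_len(max_tries)) {
    ev <- eigen(omega, symmetric = TRUE, only.values = TRUE)$values
    if (min(ev) > sqrt(.Machine$double.eps)) return(omega)
    omega <- omega + diag(ridge, nrow(omega))
  }
  stop("Omega is still not positive definite after ", max_tries, " ridge steps")
}

# Singular example: SDs of 0.2 and 0.3 with correlation 1.
omega <- matrix(c(0.04, 0.06,
                  0.06, 0.09), nrow = 2, byrow = TRUE)

fixed <- stabilise_omega(omega)
print(fixed)
print(eigen(fixed, symmetric = TRUE)$values)
```

The ridge changes only the diagonal, so the off-diagonal covariances are preserved while the implied correlation drops slightly below 1. A matrix that needs many ridge steps is better treated as a sign of over-parameterization.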

Visualization of Troubleshooting Pathways

Title: Pmetrics NPAG/IT2B Convergence Troubleshooting Algorithm

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Toolkit for Pmetrics Convergence Troubleshooting

| Item/Category | Function & Purpose |

|---|---|

| Pmetrics R Package (v1.5.0+) | Core software environment. Always use the latest stable version from https://lapk.org/pmetrics.php for bug fixes and improvements. |

| PMcheck() Function | Validates format and consistency of model and data files before a long run, catching common syntax and logical errors. |

| makeModel() & makePD() | Functions to programmatically generate and validate structural PK/PD model files, reducing manual coding errors. |

| Robust ODE Solver (ADVAN13) | A stiff differential equation solver. Use in model file (ADVAN13) for complex PK/PD models to prevent integration failures. |

| Prior Distribution Functions | ITprior and related functions allow specification of informative Bayesian priors in IT2B, stabilizing estimation with sparse data. |

| Model Simplification Scripts | Custom R scripts to systematically remove covariates, fix parameters, or modify error models to test identifiability. |

| Grid Search Script (for IT2B) | A script to run IT2B from multiple different initial estimates to check for local maxima and ensure global convergence. |

| plotbug() Function | Plots the final parameter grid from NPAG, allowing visual inspection for support point collapse or odd multimodality. |

| Benchmark Dataset | A well-characterized, public PK dataset (e.g., from Pmetrics examples). Used to verify software installation and as a control when troubleshooting new models. |

Within the broader thesis on advancing population pharmacokinetic (PK) and pharmacodynamic (PD) modeling with Pmetrics software, optimizing run settings is a critical step toward robust, reliable, and biologically plausible models. Pmetrics, an R package for nonparametric and parametric population modeling, depends on appropriate tuning of its engines' internal parameters to converge on accurate parameter estimates. This application note details protocols for tuning gamma (γ), lambda (λ), and other essential settings, translating statistical principles into actionable workflows for researchers and drug development professionals.

The following parameters control the behavior of the NPAG (Nonparametric Adaptive Grid) and IT2B (Iterative Two-Stage Bayesian) algorithms within Pmetrics.

Table 1: Critical Pmetrics Run Parameters for Optimization

| Parameter | Default Value | Typical Optimization Range | Primary Function | Algorithm |

|---|---|---|---|---|

| Gamma (γ) | 0.01 | 0.001 - 0.1 | Controls the adaptive grid step size for parameter space exploration. Smaller values slow convergence but improve precision. | NPAG |

| Lambda (λ) | 1.0 | 0.5 - 2.0 | Tuning parameter for the covariance matrix in the Bayesian step, influencing shrinkage of individual estimates toward the population mean. | IT2B |

| npass | 8 | 1 - 20 | Number of cycles (passes) through the data. Must be sufficient for convergence. | NPAG |

| istabil | 1 | 0 - 5 | Stabilization interval. Convergence testing begins after this pass number. | NPAG |

| tol | 0.01 | 1e-4 - 0.05 | Convergence tolerance. The run stops once the relative change between cycles (NPAG) or in log-likelihood (IT2B) falls below this value. | Both |

| icov | 1 | 0, 1, 2 | Covariate model specifier. 0=no covariates, 1=linear, 2=power model. | Both |

| Max Times | 5 | 3 - 8 | Maximum number of doubling times for the final output grid. Affects output resolution. | NPAG |

| ode | -2 (Analytic) | -2, liblsoda | Ordinary Differential Equation solver type. -2 for analytic solutions, liblsoda for numeric. | Both |

Experimental Protocols for Systematic Tuning

Protocol 3.1: Gamma (γ) and npass Optimization for NPAG

Objective: To achieve stable cycle-to-cycle convergence in the NPAG algorithm.

- Initial Run: Start with default settings (γ=0.01, npass=8, istabil=1, tol=0.01).

- Monitor Output: Examine the cycle summary (`final.csv` and console output). Key metrics: `LL` (Log-Likelihood), `AIC`, `BIC`, and `Cycles` (should be < npass if converged early).

- Iterative Adjustment:

  - If convergence is not achieved (`Cycles` = `npass`), increase `npass` in increments of 5 (e.g., to 13, 18).

  - If convergence is unstable (large oscillations in `LL`), reduce `gamma` by half (e.g., to 0.005) and rerun.

  - If convergence is rapid but parameter distributions appear overly narrow or biologically implausible, consider a slight increase in `gamma` (e.g., to 0.02).

- Success Criteria: Stable `LL` and `Cycles` over the last 3-5 passes before `npass` is reached, with a final `Cycles` value less than `npass`.
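The first two adjustment rules above are mechanical enough to write down as a function. The sketch below is a hypothetical helper in base R, not a Pmetrics routine: `suggest_adjustment`, its `window` and `rel_tol` arguments, and the three log-likelihood traces are all assumptions made for illustration. The third rule (implausibly narrow distributions) needs scientific judgment and is deliberately left out.

```r
# Hypothetical helper implementing the first two adjustment rules.
# ll: log-likelihood per cycle; npass: cycle limit; gamma: current setting.
suggest_adjustment <- function(ll, npass, gamma, window = 4, rel_tol = 0.01) {
  cycles  <- length(ll)
  tail_ll <- tail(ll, window + 1)
  steps   <- diff(tail_ll)
  rel_change <- abs(steps) / abs(head(tail_ll, -1))
  # Oscillation: large recent changes that go both up and down.
  oscillating <- any(rel_change > rel_tol) && any(steps > 0) && any(steps < 0)

  if (oscillating) {
    list(action = "halve gamma", gamma = gamma / 2, npass = npass)
  } else if (cycles >= npass) {
    list(action = "increase npass", gamma = gamma, npass = npass + 5)
  } else {
    list(action = "accept", gamma = gamma, npass = npass)
  }
}

# Made-up traces, for illustration only.
ll_osc  <- c(-520, -480, -510, -470, -505, -475, -500, -478)
ll_slow <- c(-520, -500, -490, -484, -480, -477, -475, -474)
ll_ok   <- c(-520, -490, -476, -474.5, -474.2, -474.1)

str(suggest_adjustment(ll_osc,  npass = 8, gamma = 0.01))
str(suggest_adjustment(ll_slow, npass = 8, gamma = 0.01))
str(suggest_adjustment(ll_ok,   npass = 8, gamma = 0.01))
```

Oscillation is tested before the cycle limit because a run that is still swinging at the final cycle would otherwise be given more cycles instead of a smaller gamma.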

Protocol 3.2: Lambda (λ) Optimization for IT2B

Objective: To balance individual parameter estimation fidelity and population-level shrinkage, minimizing the Bayesian objective function.

- Exploratory Runs: Execute IT2B with a lambda vector: c(0.5, 1.0, 1.5, 2.0).

- Data Collection: For each run, record the final Bayesian objective function value (`OBJ`) and examine the `pmfinal` object for parameter estimates and standard errors.

- Analysis: Plot `OBJ` versus λ. The optimal λ is typically at the minimum of this curve.

- Refinement: If the minimum is at an endpoint (e.g., 0.5), expand the search range (e.g., test 0.3, 0.4). Re-run the model with the optimal λ for final analysis.
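The analysis and refinement steps above reduce to locating the minimum of a small table and checking whether it sits at an edge of the grid. The sketch below assumes the objective values have already been collected by hand from each run. `pick_lambda` is a hypothetical helper, the `obj` numbers are placeholders rather than results, and the grid is assumed to be sorted in ascending order.

```r
# Pick the lambda with the lowest objective value; if it lies at an edge of
# the grid, propose two further values beyond that edge.
pick_lambda <- function(lambda, obj, step = 0.1) {
  best  <- which.min(obj)
  extra <- numeric(0)
  if (best == 1) extra <- lambda[1] - c(2, 1) * step
  if (best == length(lambda)) extra <- lambda[best] + c(1, 2) * step
  list(lambda = lambda[best],
       at_endpoint = length(extra) > 0,
       extend_with = extra)
}

# Placeholder objective values, for illustration only.
scan <- data.frame(
  lambda = c(0.5, 1.0, 1.5, 2.0),
  obj    = c(412.8, 405.3, 407.9, 415.6)
)

str(pick_lambda(scan$lambda, scan$obj))

# An edge minimum triggers the refinement step (here: try 0.3 and 0.4).
str(pick_lambda(scan$lambda, c(400.2, 405.3, 407.9, 415.6)))
```

Calling `plot(scan$lambda, scan$obj, type = "b")` on the same table gives the OBJ-versus-λ curve described in the analysis step.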

Protocol 3.3: General Workflow for Run Setting Validation

Objective: To ensure model robustness and select the final run configuration.

- Perform Protocol 3.1 or 3.2 based on the chosen algorithm.

- Cross-Validate: Use the `Xval` command in Pmetrics with the optimized settings (K=5 or 10 folds is standard).

- Evaluate Predictions: Calculate and compare prediction errors (Bias, Imprecision, RMSE) and visual predictive checks (VPC) between different parameter sets.

- Final Selection: Choose the setting that yields the lowest prediction error, most stable convergence, and biologically plausible parameter distributions.
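The prediction-error comparison in this protocol can be computed from observed and predicted values alone. The sketch below is written in base R and is independent of any Pmetrics cross-validation routine. It assumes unweighted errors, defines imprecision as the bias-adjusted mean squared error (one common convention, chosen here as an assumption), and uses invented numbers throughout.

```r
# Unweighted prediction-error summary for one run setting.
prediction_errors <- function(obs, pred) {
  err  <- pred - obs
  bias <- mean(err)
  mse  <- mean(err^2)
  c(bias = bias,
    imprecision = mse - bias^2,   # bias-adjusted mean squared error
    rmse = sqrt(mse))
}

# Assign subjects to K folds of near-equal size.
make_folds <- function(ids, k = 5, seed = 1) {
  set.seed(seed)
  setNames(sample(rep(seq_len(k), length.out = length(ids))), ids)
}

# Invented observations and predictions from two hypothetical settings.
obs    <- c(10, 8, 6, 4)
pred_a <- c(11, 7.5, 6.5, 4)
pred_b <- c(12, 9.5, 7, 5.5)

results <- rbind(setting_a = prediction_errors(obs, pred_a),
                 setting_b = prediction_errors(obs, pred_b))
print(results)
print(rownames(results)[which.min(results[, "rmse"])])

print(table(make_folds(1:20, k = 5)))
```

Folds are assigned per subject rather than per observation so that all samples from one individual stay in the same fold.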

Visualization of the Optimization Workflow

Diagram Title: Pmetrics Run Setting Optimization Decision Pathway

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagent Solutions for Pmetrics-Based PK/PD Analysis

| Item | Function in Optimization Context | Example/Details |

|---|---|---|

| Pmetrics R Package | Core software engine for performing NPAG and IT2B analyses. | Version 1.5.2 or later. Installed from the LAPKB GitHub repository rather than CRAN. |

| R IDE (RStudio) | Provides the integrated environment for running Pmetrics, scripting, and managing projects. | Essential for reproducibility and batch execution of tuning protocols. |

| Standardized Data File | Formatted input data (.csv) following Pmetrics requirements. | Must include columns for ID, time, outcome, dose, and covariates. Validation is prerequisite. |

| Model Specification File (.txt) | Defines the structural PK/PD model, differential equations, and error model. | The model file. Accuracy is critical; errors here cannot be fixed by tuning. |

| Instruction File (.txt) | Contains the run settings (gamma, lambda, npass, etc.) for Pmetrics. | The instructions file. This is the primary target of the optimization protocols. |

| Reference Dataset (Simulated or Clinical) | A robust, gold-standard dataset for validating tuning protocols and troubleshooting. | Useful for distinguishing algorithm failure from model misspecification. |

| Graphical Evaluation Toolkit | R functions/scripts for generating diagnostic plots (GOF, VPC, convergence plots). | Includes plot.PMfinal, xpose.PM, and custom ggplot2 scripts for protocol 3.3. |

Handling Covariates and Complex Error Models Effectively