From Data to Drugs: How AI and Machine Learning Are Revolutionizing Antimicrobial Discovery

Abstract

This article provides a comprehensive overview for researchers, scientists, and drug development professionals on the application of artificial intelligence (AI) and machine learning (ML) in predicting novel antimicrobial compounds. It explores the foundational principles driving this convergence, details the current methodologies and tools in use, addresses critical challenges in model development and data handling, and examines validation frameworks and comparative performance against traditional discovery pipelines. The synthesis offers a roadmap for integrating computational intelligence into the urgent fight against antimicrobial resistance (AMR).

The AI Arms Race Against Superbugs: Foundations of Computational Antimicrobial Discovery

The global antimicrobial resistance (AMR) crisis demands a paradigm shift in drug discovery. With traditional pipelines dwindling, AI and machine learning (ML) offer a transformative approach to prioritize novel compounds and decipher complex resistance mechanisms. This document provides application notes and protocols for integrating AI-driven prediction into antimicrobial research workflows.

Table 1: Global Burden and Discovery Pipeline Metrics (Current Estimates)

| Metric | Value | Source/Year | Implication |
|---|---|---|---|
| Deaths associated with bacterial AMR (2019) | ~4.95 million (~1.27 million directly attributable) | (IHME, 2022) | Attributable deaths alone exceed annual mortality from HIV/AIDS or malaria. |
| Drug-resistant infections (US, annual) | >2.8 million | (CDC, 2019) | Significant healthcare burden and cost. |
| Average cost to develop a new antibiotic | $1.5 billion | (Innovative Genomics Institute, 2023) | High financial disincentive for traditional development. |
| Clinical success rate (Phase I to Approval) | ~16.3% | (Biotechnology Innovation Org, 2021) | High attrition underscores need for better lead prioritization. |
| Time from discovery to market | 10-15 years | (WHO, 2023) | Too slow to address rapidly evolving resistance. |
| Novel antibiotic classes approved (2000-2022) | 12 | (Pew Trusts, 2023) | Critically insufficient innovation rate. |

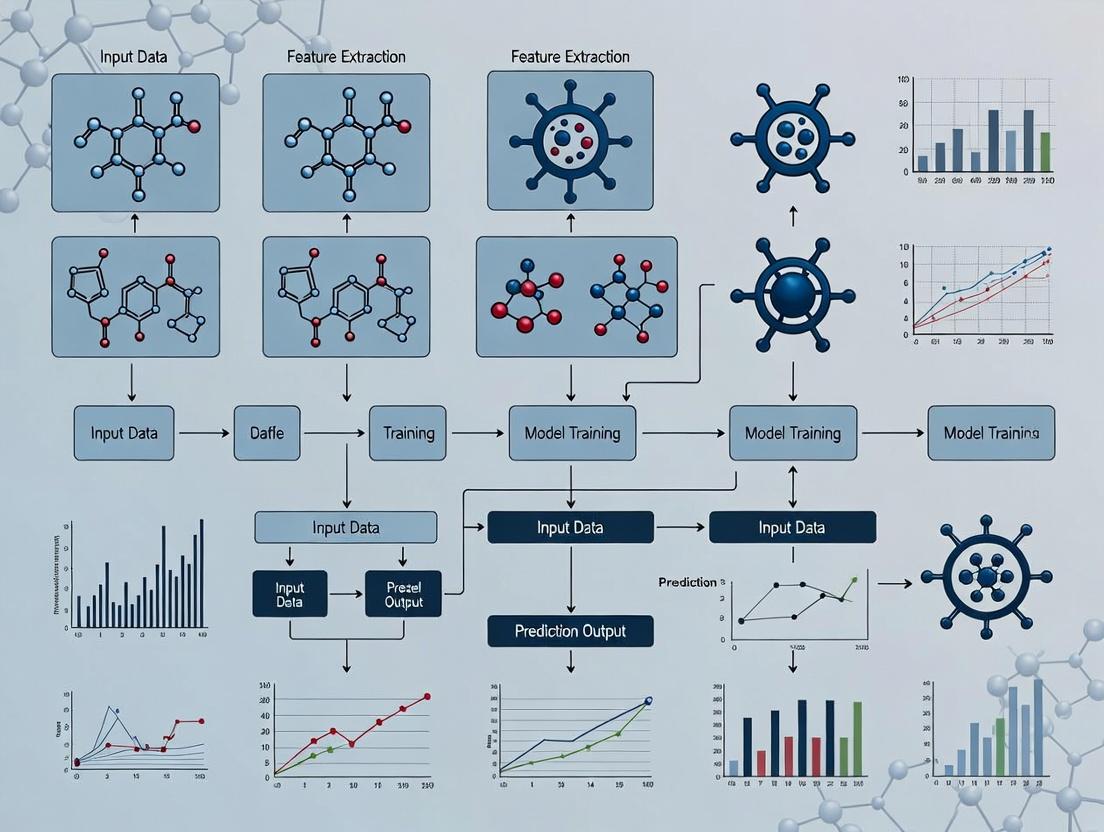

AI-Enhanced Workflow for Compound Prioritization

Protocol 1: In Silico Screening & Prioritization of Antimicrobial Compounds

Objective: To employ ML models for predicting antibacterial activity and cytotoxicity from chemical structures, reducing the initial experimental screening burden.

Materials & Reagents:

- Chemical Libraries: PubChem, ChEMBL, or proprietary small-molecule collections in SMILES or SDF format.

- AI/ML Platform: Access to platforms like DeepChem, Chemprop, or commercial equivalents (e.g., Atomwise, BenevolentAI).

- Computational Environment: High-performance computing cluster or cloud instance (e.g., AWS, GCP) with GPU acceleration.

- Training Data: Curated datasets of compounds with associated MIC (Minimum Inhibitory Concentration) values and mammalian cell cytotoxicity data (e.g., from ChEMBL or PubChem AID).

Procedure:

- Data Curation: Assemble a training dataset of known antimicrobials (actives) and inactive compounds. Clean data by removing duplicates, standardizing chemical representations (canonical SMILES), and applying a threshold (e.g., MIC < 10 µM) for "active" classification.

- Feature Representation: Convert SMILES strings into numerical features suitable for ML. Use molecular fingerprints (e.g., ECFP4, MACCS keys) or graph-based representations where atoms are nodes and bonds are edges.

- Model Training: Split data into training (~80%), validation (~10%), and hold-out test (~10%) sets. Train a graph neural network (GNN) (e.g., with Chemprop) to predict antibacterial activity (binary classification) and cytotoxicity (regression), either as a single multitask model or, if the framework supports only one task type per model, as two separate models. Use the validation set for hyperparameter tuning.

- Virtual Screening: Apply the trained model to a large, diverse virtual library of compounds. Generate predictions for activity and cytotoxicity.

- Prioritization: Rank compounds by a combined score favoring high predicted activity and low predicted cytotoxicity. Apply chemical diversity filters and "drug-likeness" rules (e.g., Lipinski's Rule of Five) to select a manageable hit list (e.g., 50-100 compounds) for in vitro validation.
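A minimal, dependency-free sketch of the prioritization step follows. It assumes the model outputs a probability of activity and a cytotoxicity score already rescaled to 0-1, and that Rule-of-Five descriptors were precomputed (for example with RDKit). The `Prediction` fields, the weighting `alpha`, and the one-violation allowance are hypothetical choices, not settings of any particular platform.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    compound_id: str
    p_active: float   # predicted probability of antibacterial activity (0-1)
    tox_score: float  # predicted cytotoxicity rescaled to 0-1, 1 = most toxic (assumption)
    mol_wt: float     # descriptors assumed precomputed, e.g. with RDKit
    clogp: float
    h_donors: int
    h_acceptors: int


def lipinski_violations(p: Prediction) -> int:
    """Count Rule-of-Five violations: MW > 500, cLogP > 5, HBD > 5, HBA > 10."""
    return sum([p.mol_wt > 500, p.clogp > 5, p.h_donors > 5, p.h_acceptors > 10])


def prioritize(preds, alpha=0.5, max_violations=1, top_n=100):
    """Drop Ro5 failures, then rank by p_active - alpha * tox_score (higher is better)."""
    passing = [p for p in preds if lipinski_violations(p) <= max_violations]
    ranked = sorted(passing, key=lambda p: p.p_active - alpha * p.tox_score, reverse=True)
    return [p.compound_id for p in ranked[:top_n]]
```

Raising `alpha` penalizes predicted cytotoxicity more heavily; diversity filtering would then be applied to the ranked list before the final 50-100 compounds are chosen.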

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Experimental Validation of AI-Predicted Hits

| Item | Function | Example/Supplier |
|---|---|---|
| Cation-Adjusted Mueller Hinton Broth (CAMHB) | Standardized medium for broth microdilution susceptibility testing against ESKAPE pathogens. | Hardy Diagnostics, BD BBL |
| Resazurin Sodium Salt | Cell viability indicator used in broth microdilution; color change from blue (non-fluorescent) to pink/fluorescent signals bacterial growth. | Sigma-Aldrich, Thermo Fisher |
| Human Hepatocyte Cell Line (e.g., HepG2) | In vitro model for primary cytotoxicity screening of hit compounds. | ATCC |
| CellTiter-Glo Luminescent Assay | Homogeneous method to determine cell viability based on quantitation of ATP, indicating metabolically active cells. | Promega |
| Galleria mellonella Larvae | In vivo model for preliminary toxicity and efficacy testing, bridging the gap between in vitro and mammalian studies. | BioSystems Technology |
| Membrane Permeabilization Assay Kit | Fluorescence-based kit to determine whether a compound's mechanism involves bacterial membrane disruption. | e.g., BacLight (Invitrogen) |
| β-lactamase Nitrocefin Hydrolysis Assay | Chromogenic test to identify compounds that inhibit β-lactamase enzymes, a key resistance mechanism. | MilliporeSigma |

Mechanistic Studies on Resistance & Compound Action

Protocol 2: Elucidating Mechanisms of Action (MoA) via Transcriptomics

Objective: To profile bacterial transcriptional responses to AI-predicted hits, inferring potential MoA and resistance pathways.

Procedure:

- Treatment: Grow a target bacterium (e.g., Acinetobacter baumannii) to mid-log phase. Treat with sub-inhibitory (¼ MIC) and inhibitory (1x MIC) concentrations of the AI-predicted compound. Include a DMSO/solvent control. Incubate for 30-60 minutes.

- RNA Extraction & Sequencing: Harvest cells, stabilize RNA (RNAlater), and extract total RNA. Deplete ribosomal RNA (bacterial mRNA lacks poly(A) tails, so poly(A) selection is not applicable), prepare cDNA libraries, and sequence on an Illumina platform.

- Bioinformatic Analysis: Map reads to the reference genome. Perform differential gene expression analysis (using DESeq2 or edgeR). Analyze genes with significant up- or down-regulation for enrichment in KEGG pathways or Gene Ontology terms.

- AI-Enhanced MoA Prediction: Input the differential expression signature into a pre-trained ML model (e.g., a classifier trained on transcriptomic profiles of compounds with known MoA) to predict the most likely mechanistic class of the novel hit.
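The two computational steps above can be sketched without external dependencies. DESeq2 and edgeR already report Benjamini-Hochberg-adjusted p-values (for example, DESeq2's `padj` column), so `bh_adjust` only reproduces that adjustment to make the significance filter explicit. The MoA step is shown as a nearest-reference rule based on Pearson correlation between log2 fold-change signatures, a simpler substitute for the pre-trained classifier described above. The thresholds, gene names, and reference signatures are hypothetical, and the signature and each reference are assumed to be ordered over the same gene list.

```python
import math


def bh_adjust(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def significant_genes(genes, log2fc, pvalues, fdr=0.05, min_abs_lfc=1.0):
    """Genes passing both an FDR cutoff and a minimum absolute log2 fold change."""
    padj = bh_adjust(pvalues)
    return [g for g, lfc, q in zip(genes, log2fc, padj)
            if q < fdr and abs(lfc) >= min_abs_lfc]


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector has zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0


def predict_moa(signature, references):
    """Return the reference MoA whose log2FC signature (same gene order) correlates best."""
    return max(references, key=lambda moa: pearson(signature, references[moa]))
```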

Diagram 1: Transcriptomic MoA Analysis Workflow

Diagram 2: Key AMR Signaling Pathways in Gram-Negatives

Integrating AI for predictive modeling and mechanistic deconvolution creates a powerful, accelerated discovery engine. The protocols outlined here provide a tangible roadmap for researchers to leverage these tools, moving from in silico prediction to validated lead candidates with greater speed and reduced cost, which is essential to outpace the AMR crisis.

Core AI/ML Concepts for Antimicrobial Compound Prediction

The application of AI in antimicrobial discovery hinges on several foundational machine learning paradigms. Quantitative performance metrics from recent key studies are summarized below.

Table 1: Performance Metrics of ML Models in Antimicrobial Discovery

| Model Type | Dataset (Example) | Key Metric | Reported Value | Primary Use Case |
|---|---|---|---|---|
| Graph Neural Network (GNN) | 2,335 molecules (Stokes et al., 2020, Cell) | ROC-AUC (vs. E. coli) | 0.897 | Predicting growth inhibition from molecular structure |
| Random Forest | 2,335 compounds (MIC data) | Mean Squared Error (MSE) | 0.85 (log(MIC)) | Quantitative Structure-Activity Relationship (QSAR) |
| Convolutional Neural Network (CNN) | 10,000+ peptide sequences | Accuracy (Binary Classification) | 94.2% | Antimicrobial peptide (AMP) identification |
| Recurrent Neural Network (RNN) | SMILES strings of 1M+ compounds | Top-100 Hit Rate (Virtual Screen) | 12.7% | De novo molecule generation with desired properties |
| Transformer (e.g., BERT-like) | PubChem & ChEMBL entries | Precision@50 (Lead Compound ID) | 0.68 | Multi-property optimization & lead candidate ranking |

Application Notes & Detailed Protocols

Protocol 2.1: Implementing a GNN for Molecule Property Prediction

Objective: To train a Graph Neural Network for predicting Minimum Inhibitory Concentration (MIC) from molecular graph representation.

Research Reagent Solutions (Software/Tools):

| Item | Function | Example/Version |
|---|---|---|
| Deep Graph Library (DGL) or PyTorch Geometric | Framework for building and training GNNs on graph-structured data. | DGL 1.0+, PyG 2.0+ |
| RDKit | Cheminformatics toolkit for converting SMILES to molecular graphs (node/edge features). | RDKit 2022.09+ |
| PubChemPy or ChEMBL API | Programmatic access to chemical structure and bioactivity data for training. | N/A |
| scikit-learn | For data preprocessing, splitting, and baseline model comparison. | scikit-learn 1.2+ |
| TensorBoard or Weights & Biases | Experiment tracking and visualization of training metrics. | N/A |

Methodology:

- Data Curation: Query the ChEMBL database for compounds with reported MIC values against a target pathogen (e.g., Staphylococcus aureus). Filter for high-confidence data, resulting in a dataset of ~15,000 molecules. Represent each molecule as a graph: atoms are nodes (features: atom type, degree, hybridization), bonds are edges (features: bond type).

- Model Architecture: Implement a 4-layer Graph Convolutional Network (GCN) or Message Passing Neural Network (MPNN). Use global mean pooling to generate a graph-level embedding. Follow with two fully connected layers (ReLU activation, Dropout=0.3) to produce a single continuous output (predicted log(MIC)).

- Training: Use Mean Squared Error (MSE) loss and the Adam optimizer (lr=0.001). Employ 5-fold cross-validation. Implement early stopping based on validation loss. Typical training requires 200-300 epochs.

- Validation: Compare predicted vs. experimental log(MIC) on a held-out test set. Calculate key metrics: R², MSE, and Mean Absolute Error (MAE).
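The three regression metrics have standard definitions: MSE and MAE average the squared and absolute residuals, and R² = 1 - SS_res/SS_tot. A minimal sketch, assuming paired lists of experimental and predicted log10(MIC) values for the held-out test set:

```python
def regression_metrics(y_true, y_pred):
    """Return MSE, MAE and R^2 for paired experimental/predicted log(MIC) values."""
    n = len(y_true)
    if n == 0 or n != len(y_pred):
        raise ValueError("need two non-empty lists of equal length")
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(r * r for r in residuals) / n
    mae = sum(abs(r) for r in residuals) / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    ss_res = sum(r * r for r in residuals)
    # R^2 is undefined when all experimental values are identical.
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return {"MSE": mse, "MAE": mae, "R2": r2}
```

In practice, `mean_squared_error`, `mean_absolute_error`, and `r2_score` in `sklearn.metrics` compute the same quantities.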

Protocol 2.2: High-Throughput Virtual Screening with a CNN on Molecular Images

Objective: To screen large chemical libraries (>1M compounds) using a CNN trained on rendered 2D structure images, enabling rapid prioritization of potential antimicrobials.

Methodology:

- Data Preparation: Generate 2D molecular structures from SMILES strings using RDKit. Render each structure into a standardized 224x224 pixel RGB image. Label images as "active" or "inactive" based on a MIC threshold (e.g., ≤ 32 µg/mL).

- Model Training: Utilize a pre-trained CNN (e.g., ResNet-50) and perform transfer learning. Replace the final classification layer. Fine-tune the model on a dataset of ~50,000 labeled molecular images.

- Screening Pipeline: Process the entire library through the trained CNN. Rank compounds by the model's confidence score (probability of being "active"). The top 0.1% of candidates (i.e., ~1000 from 1M) are selected for in vitro validation.
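Selecting the top 0.1% by model confidence does not require sorting the whole library in memory. A sketch using a bounded heap (`heapq.nlargest` from the standard library) is shown below; it assumes scores arrive as (compound_id, probability) pairs, for example streamed from batched CNN inference, and that the library size is known in advance.

```python
import heapq


def select_top_fraction(scored, library_size, fraction=0.001):
    """Return the top `fraction` of (compound_id, p_active) pairs, highest score first.

    heapq.nlargest keeps only k items in memory, so `scored` can be a generator
    streaming predictions from batched inference.
    """
    k = max(1, round(library_size * fraction))
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])
```

For a 1M-compound library this returns 1,000 candidates, matching the 0.1% cut described above.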

Visualizations

GNN for Molecular Property Prediction

Virtual Screening with CNN on Molecular Images

AI/ML Thesis Context in Antimicrobial Discovery

Application Notes: Integration of Multi-Modal Data for AI-Driven Antimicrobial Discovery

The predictive power of machine learning (ML) models in antimicrobial research is critically dependent on the quality, representation, and integration of three core data types. These modalities provide complementary views of the complex chemical-biological interaction landscape.

Chemical Structures define a compound's identity and physicochemical properties. Modern AI approaches use Simplified Molecular-Input Line-Entry System (SMILES) strings, molecular fingerprints (e.g., ECFP4), or graph-based representations (atom-bond graphs) as model inputs. These representations support prediction of target engagement and of absorption, distribution, metabolism, excretion, and toxicity (ADMET) profiles.

Genomic Sequences of both pathogen and host are essential. For pathogens, sequences identify essential genes, potential drug targets, and resistance mechanisms (e.g., beta-lactamase genes). For the host, they help predict potential off-target effects and cytotoxicity. Whole-genome sequencing data is used to train models that predict strain-specific vulnerability.

Biological Assays provide the ground-truth functional data. This includes minimum inhibitory concentration (MIC) values, time-kill curves, cytotoxicity measures (e.g., CC50), and biofilm disruption assays. These quantitative readouts serve as training labels for supervised ML models.

The integrative AI pipeline maps chemical features and genomic contexts to assay outcomes, enabling the in silico prioritization of novel compounds with predicted high efficacy and low resistance potential.

Table 1: Core Data Types and Their AI-Ready Representations

| Data Type | Primary Formats | Key Features for ML | Common Predictive Use |
|---|---|---|---|
| Chemical Structure | SMILES, SDF, InChI, Molecular Graph | ECFP Fingerprints, 3D Conformers, Quantum Chemical Descriptors | Activity Prediction, ADMET, Synthesis Planning |
| Genomic Sequence | FASTA, FASTQ, GFF, VCF | k-mers, Gene Ontology Terms, SNP/Resistance Gene Presence | Target Identification, Resistance Prediction, Host Toxicity |
| Biological Assay | MIC (µg/mL), IC50 (nM), % Inhibition, Time-Kill Data | Dose-Response Curves, High-Content Imaging Features | Model Training & Validation, Potency & Selectivity Scoring |

Protocols

Protocol 2.1: Generating an AI-Ready Dataset from Public Repositories

This protocol details the compilation of a standardized dataset for training antimicrobial activity prediction models.

Materials:

- Computer with internet access and Python/R environment.

- Access to public databases: ChEMBL, PubChem, PATRIC, NCBI GenBank.

Procedure:

- Compound Curation:

- Query ChEMBL for compounds with recorded MIC values against a target organism (e.g., Staphylococcus aureus ATCC 29213).

- Filter for entries with exact MIC values (not ">" or "<") and a defined standard type (e.g., "MIC").

- Download associated SMILES strings and standardize them using RDKit (Python) or ChemAxon tools (neutralize, remove salts, generate canonical tautomer).

- Genomic Context Addition:

- Retrieve the genome assembly (FASTA) for the assay organism from PATRIC or GenBank using the reported strain identifier.

- Annotate the genome using RASTtk or Prokka to identify essential genes and known resistance determinants.

- Encode the presence/absence of a pre-defined set of resistance genes (e.g., mecA, blaZ) as a binary feature vector for each compound assay record.

- Assay Data Integration:

- Merge the compound data with MIC values. Convert MIC to a binary label (e.g., "Active": MIC ≤ 8 µg/mL; "Inactive": MIC > 8 µg/mL) for classification tasks, or use log-transformed MIC for regression.

- For each compound-strain pair, create a final data row containing: i) the standardized SMILES, ii) ECFP4 fingerprint (2048 bits), iii) genomic feature vector, iv) MIC label/value.

- Dataset Splitting:

- Perform scaffold splitting using the Bemis-Murcko framework to separate compounds with distinct core structures into training (70%), validation (15%), and test (15%) sets. This assesses model generalizability to novel chemotypes.
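The filtering, labeling, genomic encoding, and splitting logic of this protocol can be sketched in plain Python. Records are plain dicts whose keys mimic ChEMBL activity fields (`standard_type`, `standard_relation`, `standard_value`, `standard_units`); the `'ug.mL-1'` unit string and the precomputed `scaffold` key (for example, from RDKit's `MurckoScaffold` module) are assumptions. The split assigns whole scaffold groups, largest first, to training, then validation, then test: one common deterministic heuristic, not the only valid scheme.

```python
import math
from collections import defaultdict

ACTIVE_CUTOFF_UG_ML = 8.0            # "Active": MIC <= 8 ug/mL, as in the protocol
RESISTANCE_PANEL = ("mecA", "blaZ")  # extend with the full pre-defined gene set


def keep_record(rec):
    """Keep exact ('=') MIC values in ug/mL; the unit string 'ug.mL-1' is an assumption."""
    value = rec.get("standard_value")
    return (rec.get("standard_type") == "MIC"
            and rec.get("standard_relation") == "="
            and rec.get("standard_units") == "ug.mL-1"
            and value is not None
            and float(value) > 0)


def label(rec):
    """Binary label for classification and log10(MIC) for regression."""
    mic = float(rec["standard_value"])
    return {"active": int(mic <= ACTIVE_CUTOFF_UG_ML), "log10_mic": math.log10(mic)}


def genomic_vector(annotated_genes):
    """Presence/absence of each panel gene in the strain annotation (a set of gene names)."""
    return [int(gene in annotated_genes) for gene in RESISTANCE_PANEL]


def scaffold_split(records, frac_train=0.70, frac_valid=0.15):
    """Assign whole Bemis-Murcko scaffold groups to train/valid/test, largest groups first.

    Each record needs a precomputed 'scaffold' string; no scaffold spans two sets.
    """
    groups = defaultdict(list)
    for i, rec in enumerate(records):
        groups[rec["scaffold"]].append(i)
    n = len(records)
    train, valid, test = [], [], []
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= frac_train * n:
            train.extend(members)
        elif len(valid) + len(members) <= frac_valid * n:
            valid.extend(members)
        else:
            test.extend(members)
    return train, valid, test
```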

Protocol 2.2: Training a Graph Neural Network for Dual-Input Activity Prediction

This protocol describes training a model that directly operates on molecular graphs and genomic features.

Materials:

- Hardware: GPU (e.g., NVIDIA V100) recommended.

- Software: Python with PyTorch or TensorFlow, Deep Graph Library (DGL) or PyTorch Geometric.

Procedure:

- Data Preparation:

- Load the dataset from Protocol 2.1.

- For each compound, convert the SMILES to a molecular graph object where atoms are nodes (featurized by atomic number, degree, etc.) and bonds are edges (featurized by bond type).

- Normalize the genomic binary feature vector.

- Package (Graph, Genomic Vector, Label) as a data object.

- Model Architecture:

- Implement a Message Passing Neural Network (MPNN) with 3-5 layers to learn molecular representations.

- After the final graph convolution layer, perform a global mean pooling to generate a fixed-size molecular embedding vector.

- Concatenate this molecular vector with the genomic feature vector.

- Pass the concatenated vector through a final multi-layer perceptron (MLP) with a single output node (sigmoid activation for classification).

- Training:

- Use binary cross-entropy loss and the Adam optimizer.

- Train for up to a maximum number of epochs (e.g., 200), evaluating loss and accuracy on the validation set after each epoch.

- Apply early stopping if validation loss does not improve for 20 consecutive epochs.

- Save the model with the best validation performance.

- Evaluation:

- Apply the saved model to the held-out scaffold-split test set.

- Calculate metrics: Area Under the Receiver Operating Characteristic Curve (AUROC), Area Under the Precision-Recall Curve (AUPRC), accuracy, and F1-score.

- Compare performance against a baseline model (e.g., Random Forest on ECFP fingerprints only).
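Three parts of this protocol are easy to get subtly wrong and can be sketched without a deep learning framework: the late-fusion head (concatenate, MLP, sigmoid), the patience-based early-stopping rule, and the classification metrics. The weights passed to `fusion_head` are placeholders standing in for trained parameters, and the molecular embedding is assumed to be the MPNN's mean-pooled output; in a real pipeline these would be PyTorch Geometric or DGL modules.

```python
import math


def fusion_head(mol_embedding, genomic_vec, w_hidden, b_hidden, w_out, b_out):
    """Late fusion: concatenate, one ReLU hidden layer, sigmoid output probability."""
    x = list(mol_embedding) + list(genomic_vec)
    hidden = [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w_hidden, b_hidden)]
    logit = sum(w * h for w, h in zip(w_out, hidden)) + b_out
    if logit >= 0:  # numerically stable sigmoid
        return 1.0 / (1.0 + math.exp(-logit))
    z = math.exp(logit)
    return z / (1.0 + z)


def early_stopping_epochs(val_losses, patience=20):
    """Return (best_epoch, stop_epoch) for patience-based early stopping on validation loss."""
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch  # `patience` consecutive epochs without improvement
    return best_epoch, len(val_losses) - 1


def auroc(labels, scores):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def f1_score_at(labels, scores, threshold=0.5):
    """F1 score after thresholding predicted probabilities."""
    preds = [int(s >= threshold) for s in scores]
    tp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(preds, labels) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(preds, labels) if p == 0 and y == 1)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0
```

The pairwise AUROC loop is O(n_pos × n_neg), which is fine for illustration; scikit-learn's `roc_auc_score` and `average_precision_score` (a standard AUPRC estimate) compute these efficiently on full test sets.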

Visualizations

AI-Driven Antimicrobial Discovery Data Workflow

Dual-Input GNN Model Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Enhanced Antimicrobial Research

| Item | Function in Research | Application in AI/ML Context |
|---|---|---|
| RDKit (Open-Source Cheminformatics) | Handles chemical informatics: SMILES parsing, fingerprint generation, molecular descriptor calculation. | Critical for standardizing chemical structure inputs and generating feature representations (e.g., ECFP) for ML models. |
| PyTorch Geometric / Deep Graph Library | Specialized libraries for deep learning on graph-structured data. | Enables building and training Graph Neural Networks (GNNs) that directly process molecular graphs as input, capturing topological information. |
| AutoML Platforms (e.g., H2O, TPOT) | Automated machine learning frameworks that optimize model selection and hyperparameter tuning. | Accelerates the development of baseline predictive models from tabular data (fingerprints + genomic features), saving researcher time. |
| Cation-Adjusted Mueller Hinton Broth (CAMHB) | Standardized growth medium for broth microdilution antimicrobial susceptibility testing (AST). | Generates the ground-truth MIC data required for training and validating supervised learning models. Assay consistency is paramount. |
| Resazurin Sodium Salt (AlamarBlue) | Oxidation-reduction indicator for cell viability; turns from blue to pink/fluorescent upon reduction by metabolically active cells. | Enables high-throughput colorimetric/fluorometric readouts in microtiter plates, generating large-scale dose-response data for ML datasets. |
| Genomic DNA Extraction Kit (e.g., Qiagen DNeasy) | Isolates high-purity genomic DNA from bacterial cultures for sequencing. | Provides the genomic sequence input for resistance gene annotation and feature generation, linking genotype to phenotypic resistance. |
| In Silico ADMET Prediction Tools (e.g., SwissADME, pkCSM) | Web servers that predict pharmacokinetic and toxicity properties from chemical structure. | Used to filter AI-predicted active compounds for desirable drug-like properties before in vitro validation, increasing success rates. |

Major Research Initiatives and Key Players in AI-Driven Antibiotic Discovery

Within the broader thesis on AI and machine learning for antimicrobial compound prediction, this application note details the major research initiatives and key players propelling the field of AI-driven antibiotic discovery. The convergence of high-throughput screening, genomics, and advanced computational models is creating a paradigm shift, addressing the global antimicrobial resistance (AMR) crisis.

Table 1: Major Global Initiatives in AI-Driven Antibiotic Discovery

| Initiative Name | Lead Organization(s) | Key AI/ML Focus | Primary Funding Source | Notable Output (as of 2024) |
|---|---|---|---|---|
| AI-Driven Antibiotic Discovery (AIDD) Project | MIT, Harvard, Broad Institute | Deep learning on chemical structures & genomic data | DARPA, NIH | Halicin, Abaucin |
| Antibiotic Discovery (EBI) Program | EMBL-EBI, Wellcome Sanger Institute | Genome mining & phenotypic screening prediction | Wellcome Trust | ~100 novel microbial gene clusters prioritized |
| CARB-X AI Accelerator | Boston University, multiple biotechs | Lead optimization & toxicity prediction | BARDA, Wellcome Trust, NIAID | 5 portfolio projects utilizing AI platforms |
| REVIVE Initiative | University of Tübingen | Graph neural networks for natural product discovery | German Federal Govt. | Iboxamycin and other candidates identified |
| Collaborative AI for Antibiotic Discovery | Google DeepMind/Isomorphic, Eli Lilly | AlphaFold for target structure, generative chemistry | Corporate R&D | Public release of predicted structures for AMR targets |

Table 2: Key Quantitative Metrics from Recent Initiatives (2022-2024)

| Metric | Halicin Discovery (MIT) | Abaucin Discovery (MIT/McMaster) | Iboxamycin Discovery (Tübingen) |
|---|---|---|---|
| Compounds Screened (in silico) | >107 million | ~7,500 molecules (focused library) | >38,000 natural product fragments |
| Hit Rate (Experimental vs. In Silico) | ~35% (8 of 23 candidates) | ~9% (from 240 candidates) | ~0.8% (from 300 candidates) |
| Time from Prediction to In Vitro Validation | ~3 weeks | ~2 months | ~4 weeks |
| Potency (MIC) vs. Target Pathogen | E. coli: ~2 µg/mL | A. baumannii: ~2 µg/mL | S. aureus: 0.25 µg/mL |
| Mammalian Cell Cytotoxicity (CC50) | >64 µg/mL | >128 µg/mL | >256 µg/mL |

Experimental Protocols

Protocol: In Silico Screening and Hit Identification Using a Graph Neural Network (GNN)

Application: Primary screening of chemical libraries for growth inhibition prediction. Based on: the methodology of Stokes et al., Cell, 2020 (Halicin).

Materials: See "The Scientist's Toolkit" (Table 3).

Procedure:

- Model Training:

- Utilize a dataset of ~2,335 known drug molecules with experimentally determined growth inhibition profiles against E. coli (e.g., from the Drug Repurposing Hub).

- Represent each molecule as a directed graph (atoms as nodes, bonds as edges).

- Train a GNN (e.g., with 3 convolutional layers) to map the molecular graph to a continuous growth inhibition score.

- Validate model on a held-out test set (e.g., 20% of data).

- Library Screening:

- Apply the trained model to a large in silico library (e.g., ZINC15, >100 million compounds).

- Generate predicted inhibition scores for all compounds.

- Hit Selection:

- Filter predictions based on: (a) Score threshold: select the top 1-2% of scoring compounds; (b) Structural clustering: apply Tanimoto similarity clustering (similarity < 0.7 between retained compounds) to ensure chemical diversity; (c) Drug-likeness: enforce rules such as Lipinski's Rule of Five.

- Output a final list of 50-200 candidate molecules for experimental validation.

- Experimental Validation:

- Procure or synthesize the top candidate compounds.

- Proceed to the in vitro validation protocol below.
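The diversity filter in the hit-selection step can be sketched as greedy sphere-exclusion picking: walk the candidates from highest to lowest predicted score and keep a compound only if its Tanimoto similarity to every compound already kept is below 0.7 (70%). Fingerprints are represented as Python sets of "on" bit indices (for example, from a Morgan/ECFP fingerprint computed with RDKit). This is a simple stand-in for the clustering step described above, not the exact procedure of Stokes et al.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    if not a and not b:
        return 0.0
    inter = len(a & b)
    return inter / (len(a) + len(b) - inter)


def diverse_picks(candidates, max_similarity=0.7, max_picks=200):
    """Greedy picking over (compound_id, score, on_bits) tuples, best scores first."""
    picked = []
    for cid, score, bits in sorted(candidates, key=lambda c: c[1], reverse=True):
        # Keep the compound only if it is dissimilar to everything already kept.
        if all(tanimoto(bits, other) < max_similarity for _, _, other in picked):
            picked.append((cid, score, bits))
            if len(picked) == max_picks:
                break
    return [cid for cid, _, _ in picked]
```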

Protocol: In Vitro Validation of AI-Predicted Antibacterial Candidates

Application: Confirm growth inhibition and determine Minimum Inhibitory Concentration (MIC).

Procedure:

- Bacterial Strain Preparation:

- Culture target pathogen (e.g., Acinetobacter baumannii ATCC 19606) overnight in Mueller-Hinton Broth (MHB) at 37°C.

- Dilute the overnight culture to a turbidity of 0.5 McFarland standard (~1-2 x 10^8 CFU/mL) in fresh MHB.

- Further dilute 1:100 in MHB (~1 x 10^6 CFU/mL); mixing this 1:1 with the compound dilutions in the plate gives a final in-well inoculum of ~5 x 10^5 CFU/mL.

- MIC Assay (Broth Microdilution, CLSI M07):

- In a sterile 96-well polypropylene plate, add 100 µL of MHB to all wells.

- In Column 1, add 100 µL of the candidate compound at 200 µg/mL in MHB, diluted from a DMSO stock so that the final DMSO concentration is ≤1%.

- Perform two-fold serial dilutions across Columns 1-10, transferring 100 µL from well to well and discarding 100 µL from Column 10. Column 11 is the sterility control (broth only, no inoculum); Column 12 is the growth control (broth + bacteria + DMSO, no compound).

- Add 100 µL of the prepared bacterial inoculum to all wells except the sterility control (Column 11).

- Seal plate and incubate statically at 37°C for 16-20 hours.

- Analysis:

- Measure optical density at 600 nm (OD600) using a plate reader.

- The MIC is the lowest compound concentration that reduces growth by ≥90% relative to the growth control, measured as OD600 after subtracting the background OD600 of the sterility control.

- Include standard antibiotics (e.g., ciprofloxacin) as positive controls.
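The plate arithmetic and the MIC call can be checked with a short sketch. It assumes the volumes described above (100 µL of 200 µg/mL compound mixed with 100 µL broth in the first well, two-fold serial transfer, then 100 µL of inoculum per well), so the first in-well concentration is 50 µg/mL; the number of dilution wells is a parameter. Background is taken from a no-inoculum well, and the ≥90% cutoff follows the analysis step above.

```python
def in_well_concentrations(stock_ug_ml=200.0, n_wells=10):
    """Final in-well concentrations (ug/mL) across a two-fold dilution series.

    Assumes equal 100 uL volumes: compound stock + broth in the first well (1:2),
    two-fold serial transfer, then inoculum added 1:1, so well 1 ends at stock / 4.
    """
    first = stock_ug_ml / 4.0
    return [first / 2 ** i for i in range(n_wells)]


def call_mic(concs, od600, growth_od, blank_od, min_inhibition=0.90):
    """Lowest concentration with >= min_inhibition growth reduction vs. the growth control.

    Wells are read from highest to lowest concentration and reading stops at the
    first well with growth; None means the MIC exceeds the highest concentration tested.
    """
    net_growth = growth_od - blank_od
    if net_growth <= 0:
        raise ValueError("growth control shows no growth above background")
    mic = None
    for conc, od in sorted(zip(concs, od600), reverse=True):
        inhibition = 1.0 - (od - blank_od) / net_growth
        if inhibition < min_inhibition:
            break
        mic = conc
    return mic
```

Stopping at the first well with growth is a conservative choice: an isolated "skipped" well at a lower concentration does not lower the reported MIC.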

Protocol: Mechanism of Action Prediction via Bacterial Cytological Profiling (BCP)

Application: Generate phenotypic signatures to predict a compound's mechanism of action (MoA). Based on: the bacterial cytological profiling approach of Nonejuie et al., PNAS, 2013.

Procedure:

- Sample Preparation:

- Grow E. coli (MG1655) to mid-log phase (OD600 ~0.3) in MHB.

- Treat cultures with AI-predicted compound at 5x MIC, DMSO (vehicle control), or known reference antibiotics (e.g., ciprofloxacin for DNA synthesis, chloramphenicol for protein synthesis).

- Incubate for 60-90 minutes at 37°C.

- Staining and Fixation:

- Fix cells with 2.8% formaldehyde + 0.04% glutaraldehyde for 15 min.

- Wash and stain with fluorescent dyes:

- Membrane: FM4-64FX (1 µg/mL)

- DNA: DAPI (1 µg/mL)

- Cell Wall: Wheat Germ Agglutinin (WGA), Alexa Fluor 488 conjugate (5 µg/mL)

- Imaging and Analysis:

- Image using a high-content fluorescence microscope with a 100x oil objective.

- Capture at least 10 fields of view per condition.

- Extract quantitative morphological features (cell length, width, staining intensity, nucleoid morphology) using image analysis software (e.g., CellProfiler).

- Use a pre-trained classifier (e.g., Random Forest) to compare the feature profile of the unknown compound to reference profiles, predicting its MoA (e.g., "cell wall synthesis inhibitor").
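As a dependency-free illustration of the final classification step, the sketch below z-scores each morphological feature against the DMSO vehicle-control cells and assigns the unknown compound to the nearest reference profile by Euclidean distance. This nearest-centroid rule is a deliberately simple substitute for the Random Forest mentioned above. The feature names are hypothetical, per-cell features are assumed to have been exported from CellProfiler already, and reference profiles would be built the same way from cells treated with the known antibiotics (e.g., ciprofloxacin, chloramphenicol).

```python
import math
from statistics import mean, stdev

# Hypothetical per-cell features exported from image analysis (e.g., CellProfiler).
FEATURES = ("cell_length", "cell_width", "dapi_intensity", "fm464_intensity")


def zscore_profile(cells, controls):
    """Per-feature mean z-score of treated cells relative to DMSO vehicle-control cells."""
    profile = []
    for f in FEATURES:
        ctrl = [c[f] for c in controls]
        mu = mean(ctrl)
        sd = stdev(ctrl) or 1.0  # guard against zero spread in the controls
        profile.append(mean([(c[f] - mu) / sd for c in cells]))
    return profile


def nearest_moa(profile, reference_profiles):
    """Return the MoA label whose reference profile is closest in Euclidean distance."""
    return min(reference_profiles,
               key=lambda moa: math.dist(profile, reference_profiles[moa]))
```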

Visualizations

AI-Driven Antibiotic Screening Workflow

AI Antibiotic Discovery Ecosystem Map

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for AI-Driven Antibiotic Validation

| Item | Function/Benefit | Example Product/Source |
|---|---|---|
| Curated Chemical Libraries for Screening | Provide structurally diverse, drug-like molecules for in silico screening and in vitro validation. | ZINC15, ChEMBL, Enamine REAL, Drug Repurposing Hub (Broad) |
| Ready-to-Use Bacterial Panels | Pre-assembled panels of clinically relevant, antibiotic-resistant pathogens for rapid MIC testing. | ATCC MP-10, NARSA Strains (BEI Resources) |
| Cytological Profiling Dye Kits | Optimized fluorescent dye cocktails for Bacterial Cytological Profiling (BCP) to predict Mechanism of Action. | BacLight RedoxSensor, FM dyes (Thermo Fisher), Live-or-Dye kits |
| High-Content Imaging-Compatible Plates | 96- or 384-well plates with optical bottoms for high-resolution, automated fluorescence microscopy. | CellCarrier-96 Ultra (PerkinElmer), µ-Plate 96 Well Black (ibidi) |
| Automated Liquid Handlers | Enable high-throughput, reproducible setup of MIC and synergy assays from nanoliter-scale compound stocks. | Echo Acoustic Liquid Handler (Beckman), D300e (Tecan) |
| Open-Source AI/Cheminformatics Platforms | Provide pre-built models and pipelines for molecular property prediction and virtual screening. | DeepChem, Chemprop, RDKit |

Inside the Algorithm: AI/ML Models and Workflows for Compound Prediction

Within the broader thesis on AI and machine learning for antimicrobial compound prediction, this application note charts the evolution of computational models used to predict biological activity from chemical structure. The journey from interpretable, feature-based traditional models to high-capacity, representation-learning deep neural networks represents a paradigm shift in computational drug discovery, offering unprecedented tools for tackling antimicrobial resistance (AMR).

Evolution of Predictive Modeling Approaches

Traditional Quantitative Structure-Activity Relationship (QSAR)

QSAR models establish a mathematical relationship between a set of predefined molecular descriptors (independent variables) and a quantitative biological activity (dependent variable).

Core Protocol: Developing a Classical 2D-QSAR Model

- Data Curation: Compile assay data for a congeneric series of antimicrobial compounds (e.g., minimum inhibitory concentration (MIC) against S. aureus).

- Descriptor Calculation: Use software like RDKit, PaDEL-Descriptor, or Dragon to compute molecular descriptors (e.g., logP, molar refractivity, topological indices, charge-based descriptors).

- Feature Selection: Apply methods like Genetic Algorithm, Stepwise Regression, or LASSO to select the most relevant, non-collinear descriptors to prevent overfitting.

- Model Building: Employ multivariate regression techniques (e.g., Multiple Linear Regression (MLR), Partial Least Squares (PLS)).

- Validation: Adhere to OECD principles. Use internal validation (e.g., Leave-One-Out Cross-Validation) and external validation with a hold-out test set. Report metrics: $R^2$, $Q^2_{\mathrm{cv}}$, $\mathrm{RMSE}$.
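Leave-one-out $Q^2_{\mathrm{cv}}$ is computed like $R^2$ but from held-out predictions: $Q^2_{\mathrm{cv}} = 1 - \mathrm{PRESS} / \sum_i (y_i - \bar{y})^2$, where PRESS is the sum of squared errors when each compound is predicted by a model fitted without it. The sketch below uses a single-descriptor least-squares line to stay short; an MLR or PLS model with several descriptors would follow the same loop (for example with scikit-learn's `LeaveOneOut` splitter), and the function names are illustrative.

```python
def fit_line(x, y):
    """Ordinary least squares for a one-descriptor model y = a + b * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b


def q2_loo(x, y):
    """Leave-one-out Q^2 = 1 - PRESS / SS_tot for the one-descriptor model."""
    press = 0.0
    for i in range(len(x)):
        a, b = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])  # refit without compound i
        press += (y[i] - (a + b * x[i])) ** 2
    my = sum(y) / len(y)
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - press / ss_tot
```

Because every compound is predicted by a model that never saw it, $Q^2_{\mathrm{cv}}$ is typically lower than the fitted $R^2$; a large gap between the two is a common sign of overfitting.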

Table 1: Comparison of Traditional QSAR Modeling Algorithms

| Algorithm | Key Principle | Advantages for Antimicrobial Research | Limitations |
|---|---|---|---|
| Multiple Linear Regression (MLR) | Fits a linear equation to descriptor data. | Highly interpretable; clear contribution of each descriptor. | Prone to overfitting with many descriptors; assumes linearity. |
| Partial Least Squares (PLS) | Projects variables into latent factors maximizing covariance with activity. | Handles correlated descriptors well; robust for small datasets. | Interpretation of latent factors can be less intuitive. |
| Support Vector Machine (SVM) | Finds a hyperplane that maximally separates active/inactive compounds. | Effective for non-linear relationships; good for classification tasks. | Black-box nature; performance sensitive to kernel and parameters. |

Machine Learning (ML) and Deep Neural Networks (DNN)

ML models automatically learn complex patterns from data. DNNs, a subset of ML, use multiple layers of artificial neurons to learn hierarchical representations directly from raw or minimally processed input (e.g., SMILES strings, molecular graphs).

Core Protocol: Training a Graph Neural Network (GNN) for Activity Prediction

- Graph Representation: Represent each molecule as a graph $G = (V, E)$, where atoms are nodes ($V$) with features (atom type, hybridization) and bonds are edges ($E$) with features (bond type).

- Model Architecture: Implement a Message Passing Neural Network (MPNN).

- Message Passing (Multiple Layers): Each node aggregates messages (feature vectors) from its neighbors.

- Readout/Global Pooling: After several message-passing steps, generate a fixed-size molecular graph representation by summing or averaging node features.

- Prediction Head: Pass the graph representation through fully connected layers to predict activity (e.g., pMIC).

- Training: Use a loss function (Mean Squared Error for regression) and optimizer (Adam). Employ techniques like dropout and early stopping to regularize the model.

- Evaluation: Assess on a temporal or scaffold-split test set to evaluate generalizability to novel chemotypes.
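To make the message-passing and readout steps concrete, here is a minimal forward pass in plain Python. It uses a simplified update rule (each atom's new state is a ReLU of a weighted sum of its own state and the mean of its neighbors' states, with scalar weights `w_self` and `w_msg`), which is an illustrative assumption rather than the exact MPNN formulation; bond features, learned weight matrices, and training, which libraries such as PyTorch Geometric or DGL provide, are omitted. The prediction-head weights are placeholders.

```python
def message_passing_step(h, neighbors, w_self=0.5, w_msg=0.5):
    """One layer: h_v' = ReLU(w_self * h_v + w_msg * mean of neighbor states)."""
    new_h = []
    for v, hv in enumerate(h):
        nbrs = neighbors[v]
        if nbrs:
            msg = [sum(h[u][k] for u in nbrs) / len(nbrs) for k in range(len(hv))]
        else:
            msg = [0.0] * len(hv)  # isolated atom: no incoming messages
        new_h.append([max(0.0, w_self * a + w_msg * m) for a, m in zip(hv, msg)])
    return new_h


def mean_readout(h):
    """Global mean pooling: average node states into one fixed-size graph vector."""
    n = len(h)
    return [sum(node[k] for node in h) / n for k in range(len(h[0]))]


def predict_pmic(h, neighbors, head_weights, head_bias, n_layers=3):
    """Message passing, mean readout, then a linear prediction head (placeholder weights)."""
    for _ in range(n_layers):
        h = message_passing_step(h, neighbors)
    graph_vec = mean_readout(h)
    return sum(w * x for w, x in zip(head_weights, graph_vec)) + head_bias
```

For ethanol's heavy atoms (C-C-O) with one-hot [C, O] features, `h = [[1, 0], [1, 0], [0, 1]]` and `neighbors = [[1], [0, 2], [1]]`; one step updates the central carbon to `[0.75, 0.25]` because it averages messages from a carbon and an oxygen.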

Table 2: Performance Metrics of Model Types on Public Antimicrobial Datasets

| Model Class | Example Model | Dataset (Example) | Task | Reported Metric (Typical Range) |

|---|---|---|---|---|

| Traditional QSAR | PLS | Staphylococcus aureus inhibitors (ChEMBL) | Regression (pMIC) | $R^2_{\text{test}}$: 0.60 - 0.75 |

| Classical ML | Random Forest | ESKAPE pathogen panel | Classification (Active/Inactive) | AUC-ROC: 0.75 - 0.85 |

| Deep Learning (Graph) | Attentive FP | FDA-approved drugs vs. Mycobacterium tuberculosis | Classification | AUC-ROC: 0.82 - 0.90 |

| Deep Learning (Sequence) | SMILES Transformer | Broad-spectrum antimicrobial peptides | Regression | $R^2_{\text{test}}$: 0.70 - 0.80 |

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Computational Tools for Antimicrobial Predictive Modeling

| Tool/Solution | Function | Application in Workflow |

|---|---|---|

| RDKit | Open-source cheminformatics library. | Molecule standardization, descriptor calculation, fingerprint generation, and substructure search. |

| PyTorch Geometric / DGL | Libraries for deep learning on graphs. | Building and training Graph Neural Network (GNN) models directly on molecular graph data. |

| TensorFlow/Keras | Deep learning frameworks. | Building sequential (SMILES-based) and dense neural network models. |

| scikit-learn | Machine learning library. | Data preprocessing, feature selection, traditional ML model implementation, and hyperparameter tuning. |

| ChEMBL / PubChem | Public bioactive compound databases. | Source of curated, experimental bioactivity data (e.g., MIC, IC50) for model training and validation. |

| MOE (Molecular Operating Environment) | Commercial software suite. | Integrated platform for molecular modeling, descriptor calculation, and QSAR model building. |

| Streamlit / Dash | Web application frameworks. | Creating interactive web interfaces for deploying trained models for internal team use. |

Visualized Workflows and Relationships

Title: Predictive Modeling Approach Evolution

Title: Graph Neural Network Training Protocol

Within the broader thesis on AI and machine learning for antimicrobial compound prediction, feature engineering stands as the critical bridge between raw molecular data and predictive model performance. The selection and construction of molecular descriptors and representations directly determine a model's ability to learn structure-activity relationships (SAR) for antimicrobial activity. This document provides detailed application notes and protocols for generating, evaluating, and utilizing these features.

Core Molecular Descriptor Categories & Data

Table 1: Quantitative Overview of Common Molecular Descriptor Categories for Antimicrobial Prediction

| Descriptor Category | Typical Number of Features | Computational Cost | Interpretability | Example Key Features for Antimicrobial Activity |

|---|---|---|---|---|

| 1D/2D: Constitutional & Topological | 50 - 300 | Low | High | Molecular weight, atom counts, bond counts, Wiener index, Zagreb indices, molecular connectivity indices. |

| 2D: Electronic & Charge-Based | 100 - 500 | Low-Medium | Medium | Partial charge descriptors, dipole moment, HOMO/LUMO energies (estimated), polar surface area. |

| 3D: Geometrical & Shape-Based | 50 - 200 | High | Low-Medium | Principal moments of inertia, radius of gyration, molecular volume, 3D-Wiener index. |

| 3D: Quantum Chemical | 20 - 100 | Very High | Medium-High | Accurate HOMO/LUMO energies, ionization potential, electron affinity, molecular electrostatic potential (MEP) maps. |

| Fingerprint-Based (Binary) | 166 - 4096+ bits | Low | Low | MACCS Keys (166 bits), ECFP4/FCFP4 (1024+ bits), path-based fingerprints. |

Table 2: Performance Comparison of Descriptor Types in Representative AMR Studies (2022-2024)

| Study Focus (Model Type) | Primary Descriptor Type | Dataset Size | Reported Metric (e.g., AUC-ROC) | Key Insight |

|---|---|---|---|---|

| Gram-negative vs. Gram-positive (RF) | ECFP4 + RDKit 2D Descriptors | ~5,000 compounds | 0.87 | Hybrid fingerprint-descriptor vectors outperformed either alone. |

| Anti-MRSA CNN | Graph Representation (Atom/Bond Adjacency) | ~10,000 compounds | 0.91 | Learned features from graphs surpassed pre-defined descriptors. |

| AMP Prediction (Transformer) | SMILES String (Sequence) | ~15,000 peptides | 0.93 | Contextual embeddings captured non-local sequence motifs critical for membrane interaction. |

| Broad-Spectrum Classifier (SVM) | MOE 2D Descriptors | ~3,000 compounds | 0.79 | LogP and polar surface area were top-ranked features. |

Protocols for Feature Generation & Evaluation

Protocol 3.1: Generating a Standardized 2D/3D Molecular Descriptor Set Using Open-Source Tools

Objective: To compute a comprehensive set of interpretable molecular descriptors for a library of small molecules.

Materials: See Scientist's Toolkit.

Procedure:

- Input Preparation: Compile the SMILES strings of the compounds in a .csv file. Ensure stereochemistry is specified where relevant.

- 2D Structure Generation: Using RDKit in a Python script, parse the SMILES and sanitize the molecules (Chem.MolFromSmiles sanitizes by default; explicit sanitization is available via rdkit.Chem.rdmolops.SanitizeMol).

- Descriptor Calculation:

  a. Calculate RDKit's 2D descriptors (rdkit.Chem.Descriptors module).

  b. For 3D descriptors, add explicit hydrogens (Chem.AddHs), generate a 3D conformer using rdkit.Chem.rdDistGeom.EmbedMolecule(), and optimize it with MMFF94 (rdkit.Chem.rdForceFieldHelpers.MMFFOptimizeMolecule()).

  c. Calculate 3D descriptors (e.g., using rdkit.Chem.Descriptors3D or the mordred library).

- Output: Save the descriptors as a .csv matrix (compounds x features).
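Protocol 3.1 can be sketched in a few lines of RDKit. This is a minimal illustration, not a validated pipeline: it assumes RDKit is installed, embeds a single conformer with a fixed random seed (real 3D work usually samples several conformers), and computes only two example 3D descriptors. The helper names `compute_descriptors` and `write_csv` are hypothetical.

```python
import csv

from rdkit import Chem
from rdkit.Chem import Descriptors, Descriptors3D, rdDistGeom, rdForceFieldHelpers


def compute_descriptors(smiles_list, seed=42):
    """Return one dict of 2D + selected 3D descriptors per parsable SMILES."""
    rows = []
    for smi in smiles_list:
        mol = Chem.MolFromSmiles(smi)  # parses and sanitizes by default
        if mol is None:
            continue  # skip unparsable SMILES
        row = {"SMILES": Chem.MolToSmiles(mol)}
        # (a) every 2D descriptor registered in rdkit.Chem.Descriptors
        for name, fn in Descriptors.descList:
            row[name] = fn(mol)
        # (b) 3D: add hydrogens, embed one conformer, MMFF94-optimize it
        mol_h = Chem.AddHs(mol)
        if rdDistGeom.EmbedMolecule(mol_h, randomSeed=seed) == 0:
            rdForceFieldHelpers.MMFFOptimizeMolecule(mol_h)
            # (c) two example shape descriptors from Descriptors3D
            row["PMI1"] = Descriptors3D.PMI1(mol_h)
            row["RadiusOfGyration"] = Descriptors3D.RadiusOfGyration(mol_h)
        rows.append(row)
    return rows


def write_csv(rows, path):
    """Write a compounds x features matrix; missing 3D values stay blank."""
    fields = sorted({key for row in rows for key in row})
    with open(path, "w", newline="") as handle:
        writer = csv.DictWriter(handle, fieldnames=fields)
        writer.writeheader()
        writer.writerows(rows)


rows = compute_descriptors(["CC(=O)Oc1ccccc1C(=O)O", "CCO"])  # aspirin, ethanol
```

Adding hydrogens before embedding matters: conformers generated from a hydrogen-suppressed graph give distorted geometries and therefore unreliable 3D descriptors.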

Protocol 3.2: Creating Extended-Connectivity Fingerprints (ECFPs)

Objective: To generate circular, topology-based fingerprints that capture functional groups and local molecular environments.

Procedure:

- Start with sanitized RDKit molecule objects.

- Use rdkit.Chem.AllChem.GetMorganFingerprintAsBitVect(mol, radius=2, nBits=1024). A radius of 2 bonds corresponds to an environment diameter of up to 4 bonds, hence the name ECFP4; nBits=1024 sets the length of the folded output vector.

- For sparse, count-based features, consider the integer variant (GetMorganFingerprint).

- Validation: Visually inspect the substructures behind a few bits using rdkit.Chem.Draw.DrawMorganBit() to confirm that they match chemical intuition.
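A sketch of Protocol 3.2 follows. It assumes a recent RDKit and uses the `rdFingerprintGenerator` interface, which newer RDKit releases recommend in place of `AllChem.GetMorganFingerprintAsBitVect`; with the same radius and vector length it is intended to produce the same ECFP4 bits. The helper name `ecfp4_with_bit_info` is hypothetical.

```python
from rdkit import Chem
from rdkit.Chem import rdFingerprintGenerator

# radius=2 -> environments up to 4 bonds across (the "4" in ECFP4); 1024-bit vector
morgan_gen = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=1024)


def ecfp4_with_bit_info(smiles):
    """Folded ECFP4 bit vector plus the atom environments behind each on-bit."""
    mol = Chem.MolFromSmiles(smiles)
    ao = rdFingerprintGenerator.AdditionalOutput()
    ao.AllocateBitInfoMap()
    fp = morgan_gen.GetFingerprint(mol, additionalOutput=ao)
    # bit -> tuple of (center_atom_index, radius) pairs
    return mol, fp, ao.GetBitInfoMap()


mol, fp, bit_info = ecfp4_with_bit_info("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
print(fp.GetNumBits(), fp.GetNumOnBits())

# Sparse, count-based variant (hashed environment ids -> counts)
counts = morgan_gen.GetSparseCountFingerprint(Chem.MolFromSmiles("CCO"))
print(len(counts.GetNonzeroElements()))

# For the visual check, rdkit.Chem.Draw.DrawMorganBit(mol, bit_id, bit_info)
# renders the environment that set a chosen bit.
```

Keeping the bit-info map alongside the vector is what makes the validation step possible: without it, a folded bit cannot be traced back to the substructure that set it.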

Protocol 3.3: Feature Selection for Antimicrobial Models

Objective: To reduce dimensionality and identify the most predictive features for antimicrobial activity.

Procedure:

- Pre-filtering: Remove features with near-zero variance or high pairwise correlation (|r| > 0.95).

- Univariate Selection: Apply an ANOVA F-test between the active and inactive classes and retain the top k features (e.g., k=100).

- Tree-Based Importance: Train a Random Forest classifier and rank features by Gini importance or permutation importance.

- Embedded Methods: Use LASSO (L1) regularization within a logistic regression model to drive the coefficients of uninformative features to zero.

- Final Set: Take the union or intersection of the top features from the univariate, tree-based, and embedded methods, guided by domain knowledge. Perform all selection steps on the training data only, and always evaluate final model performance on a held-out test set.
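A compact scikit-learn sketch of Protocol 3.3, run on synthetic data from `make_classification` as a stand-in for a real descriptor matrix. The thresholds (variance 1e-4, k=20, top 20 Random Forest features, C=0.1) are illustrative assumptions, the greedy correlation filter is one of several reasonable implementations, and `select_features` is a hypothetical helper. Selection is fitted on the training split only so that the held-out evaluation is not contaminated.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, VarianceThreshold, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler


def drop_correlated(X, threshold=0.95):
    """Greedy filter: keep a column only if |r| <= threshold with every kept column."""
    corr = np.abs(np.corrcoef(X, rowvar=False))
    keep = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= threshold for k in keep):
            keep.append(j)
    return np.array(keep, dtype=int)


def select_features(X_train, y_train, k=20, top_rf=20, l1_C=0.1):
    """Return the feature-index sets chosen by the three selection methods."""
    # Pre-filter: near-zero variance, then highly correlated columns
    idx = np.flatnonzero(VarianceThreshold(1e-4).fit(X_train).get_support())
    idx = idx[drop_correlated(X_train[:, idx])]
    Xf = X_train[:, idx]
    # Univariate ANOVA F-test between classes
    kbest = SelectKBest(f_classif, k=min(k, len(idx))).fit(Xf, y_train)
    anova = set(idx[kbest.get_support()].tolist())
    # Random Forest (Gini) importance ranking
    rf = RandomForestClassifier(n_estimators=200, random_state=0).fit(Xf, y_train)
    tree = set(idx[np.argsort(rf.feature_importances_)[::-1][:top_rf]].tolist())
    # L1-regularized logistic regression: features with non-zero coefficients survive
    Xs = StandardScaler().fit_transform(Xf)
    lasso = LogisticRegression(penalty="l1", solver="liblinear", C=l1_C).fit(Xs, y_train)
    l1 = set(idx[np.flatnonzero(lasso.coef_[0])].tolist())
    return anova, tree, l1


# Synthetic stand-in for a descriptor matrix (60 features, 8 informative)
X, y = make_classification(n_samples=300, n_features=60, n_informative=8, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.2, stratify=y, random_state=0)
anova, tree, l1 = select_features(X_tr, y_tr)  # fitted on training data only
union = sorted(anova | tree | l1)
intersection = sorted(anova & tree & l1)

# Held-out check of the final feature set
clf = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_tr[:, union], y_tr)
auc = roc_auc_score(y_te, clf.predict_proba(X_te[:, union])[:, 1])
```

The union favors recall of potentially useful features; the intersection gives a smaller, more conservative set that is easier to interpret.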

Visual Workflows & Pathways

Feature Engineering Workflow for AMR Models

GNN-Based Molecular Representation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Libraries for Molecular Feature Engineering

| Item (Software/Package) | Category | Primary Function in Protocol | Key Parameters/Notes |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics | Core molecule handling, 2D/3D descriptor calculation, fingerprint generation. | Use Chem.Descriptors, AllChem.GetMorganFingerprint. Critical for Protocols 3.1 & 3.2. |

| Mordred | Open-Source Descriptor Calculator | Calculates >1800 2D/3D molecular descriptors directly from SMILES. | Good for high-throughput batch calculation. Integrates with RDKit. |

| Open Babel / Pybel | Chemical File Conversion & Descriptors | File format interchange, calculation of basic descriptors, fingerprint options. | Useful for preprocessing diverse input formats. |

| Psi4 / Gaussian | Quantum Chemistry | Computing high-fidelity quantum chemical descriptors (HOMO/LUMO, MEP). | High computational cost. Used for specialized, high-accuracy features in Protocol 3.1. |

| DGL-LifeSci / PyTorch Geometric | Deep Learning Libraries | Building graph neural network (GNN) representations of molecules. | Essential for implementing state-of-the-art learned representations (see GNN diagram). |

| Scikit-learn | Machine Learning Library | Feature selection (ANOVA, LASSO), dimensionality reduction (PCA), model training. | Core for Protocol 3.3 (Feature Selection). |

| Pandas & NumPy | Data Manipulation | Handling feature matrices, data cleaning, and preprocessing. | Foundation for all data pipeline operations. |

Generative AI for de novo Molecular Design of Novel Antibiotics

This document serves as a detailed application note within a broader thesis investigating AI and machine learning for antimicrobial compound prediction. The accelerating crisis of antimicrobial resistance (AMR) necessitates novel approaches to antibiotic discovery. Traditional methods are costly and time-consuming. This protocol outlines the integration of generative AI models into a de novo molecular design pipeline to rapidly propose and prioritize novel, synthetically accessible antibiotic candidates with predicted activity against priority pathogens.

Core Generative AI Architectures & Quantitative Performance

Generative models learn the chemical space of known bioactive molecules and generate novel structures with optimized properties.

Table 1: Comparison of Generative AI Models for Molecular Design

| Model Architecture | Key Principle | Typical Output | Reported Performance (Novelty/Activity) | Key Advantage | Key Limitation |

|---|---|---|---|---|---|

| Variational Autoencoder (VAE) | Encodes molecules into a latent space and decodes latent vectors to generate new molecules. | SMILES strings, molecular graphs. | ~60-80% validity; >70% novelty in lead series. | Stable training, smooth latent space for optimization. | Can generate invalid strings; posterior collapse possible. |

| Generative Adversarial Network (GAN) | Generator & Discriminator compete. | Molecular graphs. | High novelty; activity rates vary (10-30% in vitro hit rates in studies). | Can produce highly novel, complex structures. | Training instability; synthetic accessibility not guaranteed. |

| Reinforcement Learning (RL) | Agent learns policy to build molecules rewarded by property scores. | Sequential atom/bond addition. | Optimized for specific property (e.g., >0.5 QED, >0.8 predicted activity). | Direct optimization of multi-property objectives. | Computationally intensive; can exploit reward function. |

| Transformer | Attention-based sequence modeling. | SMILES or SELFIES strings (SELFIES preferred). | Near-100% validity with SELFIES, since every SELFIES string decodes to a valid molecule; high scaffold diversity. | Captures long-range dependencies; state-of-the-art for sequences. | Large data requirements; black-box nature. |

| Flow-based Models | Invertible transformation between data and latent distributions. | 3D conformers, graphs. | High likelihood estimation; precise property control. | Exact latent-variable inference; high-quality samples. | Computationally expensive for 3D generation. |

Integrated Protocol: AI-Driven De Novo Antibiotic Design Workflow

Protocol 3.1: Data Curation & Preparation

Objective: Assemble a high-quality, chemically standardized dataset for model training and validation.

- Source Data: Collect SMILES strings and associated bioactivity data (e.g., MIC, IC50) from public repositories (ChEMBL, PubChem, DrugCentral). Focus on compounds tested against WHO priority pathogens (e.g., Acinetobacter baumannii, Pseudomonas aeruginosa).

- Standardization: Use RDKit to standardize molecules: read the structures (e.g., Chem.SmilesMolSupplier), remove salts and neutralize charges (e.g., with the rdkit.Chem.MolStandardize module), aromatize, and write canonical SMILES (Chem.MolToSmiles).

- Activity Thresholding: Label compounds as "active" (e.g., MIC ≤ 16 µg/mL) or "inactive" based on standardized microbiological criteria.

- Dataset Splits: Split into training (70%), validation (15%), and hold-out test (15%) sets. To keep structural analogues from leaking across splits, assign whole groups of related compounds to a single split, where groups are defined by Bemis-Murcko scaffolds or by fingerprint-similarity clustering (e.g., Butina clustering).
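The standardization and labeling steps might look like the following with RDKit's `rdMolStandardize` module. This is a sketch under assumptions: it applies only cleanup, largest-fragment selection, and uncharging (tautomer canonicalization is omitted), the helper names are hypothetical, and the 16 µg/mL cutoff is the example value from the text rather than a universal breakpoint.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize


def standardize_smiles(smiles):
    """Strip salts/solvents, neutralize charges, return canonical SMILES."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None
    mol = rdMolStandardize.Cleanup(mol)  # normalize functional groups
    mol = rdMolStandardize.LargestFragmentChooser().choose(mol)  # drop counter-ions
    mol = rdMolStandardize.Uncharger().uncharge(mol)  # neutralize where possible
    return Chem.MolToSmiles(mol)  # canonical, aromatic form


def label_activity(mic_ug_per_ml, cutoff=16.0):
    """Binary label using the example cutoff from the text (MIC <= 16 ug/mL)."""
    return "active" if mic_ug_per_ml <= cutoff else "inactive"


print(standardize_smiles("CC(=O)[O-].[Na+]"))  # sodium acetate -> acetic acid
print(label_activity(8.0), label_activity(64.0))
```

Standardizing before deduplication matters: the salt form and the free acid of the same compound would otherwise appear as two different training examples.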

Protocol 3.2: Conditional Molecular Generation with a VAE-Transformer Hybrid

Objective: Generate novel molecules conditioned on desired antimicrobial properties.

- Model Setup: Implement a conditional VAE where the encoder and decoder are Transformer blocks. The condition (e.g., "Active against Gram-negative bacteria," desired logP range) is concatenated to the input embedding.

- Training: Train for 100-200 epochs using Adam optimizer (lr=1e-4) on the training set. Loss is a weighted sum of reconstruction loss (cross-entropy for SMILES) and KL-divergence loss (weight β=0.01).

- Generation: Sample random vectors from the latent space and concatenate with the desired condition vector. Pass through the decoder to generate SMILES sequences. Use beam search for decoding.

- Post-processing: Filter generated SMILES for chemical validity (parseable by RDKit Chem.MolFromSmiles), uniqueness, and novelty (not present in the training set).
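The training objective and the conditioned sampling in Protocol 3.2 reduce to a few array operations. The NumPy sketch below assumes a diagonal-Gaussian encoder that outputs a mean and a log-variance (the usual VAE parameterization; the text does not specify it), uses the β=0.01 weight from the text, and stands in random logits for a real Transformer decoder. The function names and the two-element condition vector are hypothetical.

```python
import numpy as np


def log_softmax(logits):
    """Numerically stable log-softmax over the vocabulary axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


def cvae_loss(logits, target_ids, mu, logvar, beta=0.01):
    """Token cross-entropy plus beta * KL(q(z|x) || N(0, I)) for one SMILES.

    logits: (seq_len, vocab) decoder outputs
    target_ids: (seq_len,) integer token ids of the true SMILES
    mu, logvar: (latent_dim,) encoder outputs for the same molecule
    """
    log_probs = log_softmax(logits)
    recon = -log_probs[np.arange(len(target_ids)), target_ids].sum()
    # Closed-form KL divergence between a diagonal Gaussian and the unit prior
    kl = -0.5 * np.sum(1.0 + logvar - mu**2 - np.exp(logvar))
    return recon + beta * kl, recon, kl


def sample_conditioned_latents(n, latent_dim, condition, rng):
    """Draw z ~ N(0, I) from the prior and append the condition vector."""
    z = rng.standard_normal((n, latent_dim))
    cond = np.tile(np.asarray(condition, dtype=float), (n, 1))
    return np.concatenate([z, cond], axis=1)  # passed to the decoder


rng = np.random.default_rng(0)
loss, recon, kl = cvae_loss(rng.normal(size=(5, 30)), np.array([3, 7, 7, 1, 0]),
                            mu=np.zeros(16), logvar=np.zeros(16))
# Hypothetical condition: [Gram-negative activity flag, target logP]
z_cond = sample_conditioned_latents(4, 16, [1.0, 2.5], rng)
```

A small β keeps the reconstruction term dominant, which helps the decoder produce valid SMILES at the cost of a less regular latent space.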

Diagram: AI-Driven Antibiotic Design Workflow

Diagram Title: Generative AI Antibiotic Discovery Pipeline

Protocol 3.3: In-Silico Filtration and Multi-Property Scoring

Objective: Prioritize generated molecules using predictive models and computational filters.

- Activity Prediction: Use a pre-trained graph neural network (GNN) QSAR model (e.g., one built with DeepChem) to predict pMIC against the target pathogens. Keep predictions above a defined threshold (e.g., predicted pMIC > 1.5).

- ADMET & Toxicity Screening: Predict key properties using toolkits like ADMETLab 2.0 or OSIRIS Property Explorer. Apply filters:

  - Permeability: Predict Caco-2 permeability or P-gp substrate risk.

  - Toxicity: Exclude molecules with predicted mutagenicity, hepatotoxicity, or hERG inhibition.

  - PK: Favor molecules within defined logP (-1 to 5) and TPSA (<140 Ų) ranges.

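- Synthetic Accessibility (SA): Calculate the SAscore (Ertl-Schuffenhauer score, from 1 = easy to 10 = hard to synthesize), e.g., with the sascorer module distributed in RDKit's Contrib directory. Filter for SAscore < 6.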

- Diversity Selection: Cluster remaining candidates using ECFP4 fingerprints and Taylor-Butina clustering. Select top-ranked molecules from diverse clusters for downstream analysis.
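A sketch of the filtration and diversity steps in Protocol 3.3, assuming RDKit is installed. The predicted pMIC and SAscore are taken as inputs from upstream models (a GNN and the SAscore calculator), because neither is computed here; logP uses RDKit's Crippen estimate and TPSA uses `rdMolDescriptors.CalcTPSA`. The Butina distance threshold of 0.4 (Tanimoto similarity 0.6) and the helper names are assumptions.

```python
from rdkit import Chem, DataStructs
from rdkit.Chem import Crippen, rdFingerprintGenerator, rdMolDescriptors
from rdkit.ML.Cluster import Butina


def passes_filters(smiles, pred_pmic, sa_score, pmic_min=1.5,
                   logp_range=(-1.0, 5.0), tpsa_max=140.0, sa_max=6.0):
    """Apply Table 2-style cut-offs; pred_pmic and sa_score come from upstream models."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return False
    logp = Crippen.MolLogP(mol)
    tpsa = rdMolDescriptors.CalcTPSA(mol)
    return (pred_pmic > pmic_min and logp_range[0] <= logp <= logp_range[1]
            and tpsa < tpsa_max and sa_score < sa_max)


def butina_representatives(smiles_list, dist_thresh=0.4):
    """Cluster ECFP4 fingerprints (Taylor-Butina); return one SMILES per cluster."""
    gen = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)
    fps = [gen.GetFingerprint(Chem.MolFromSmiles(s)) for s in smiles_list]
    # Butina expects the flattened lower triangle of the distance matrix
    dists = []
    for i in range(1, len(fps)):
        sims = DataStructs.BulkTanimotoSimilarity(fps[i], fps[:i])
        dists.extend(1.0 - s for s in sims)
    clusters = Butina.ClusterData(dists, len(fps), dist_thresh, isDistData=True)
    # The first index in each cluster tuple is the cluster centroid
    return [smiles_list[c[0]] for c in clusters]


# Hypothetical candidates: (SMILES, predicted pMIC, SAscore)
candidates = [("CCO", 2.0, 1.5), ("C" * 30, 2.0, 1.5), ("c1ccccc1O", 1.0, 1.5)]
kept = [s for s, p, sa in candidates if passes_filters(s, p, sa)]
```

Picking one representative per cluster, rather than the top N scores overall, prevents the shortlist from being dominated by near-duplicates of a single scaffold.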

Table 2: Typical In-Silico Filtration Criteria for Antibiotic Candidates

| Property Category | Specific Metric | Target Range / Filter | Tool/Model |

|---|---|---|---|

| Predicted Potency | pMIC (vs. A. baumannii) | > 1.5 (pMIC = -log10 of MIC in mM; equiv. MIC < ~32 µM) | GNN QSAR Model |

| Lipophilicity | LogP (Octanol/Water) | -1.0 to 5.0 | RDKit (Crippen) |

| Polar Surface Area | TPSA | < 140 Ų | RDKit |

| Synthetic Accessibility | SAscore | < 6.0 | RDKit/SAscore |

| Toxicity Risk | hERG inhibition prediction | Low risk (Probability < 0.5) | ADMETLab 2.0 |

| Toxicity Risk | Ames mutagenicity | Negative | ADMETLab 2.0 |

Protocol 3.4: Experimental Validation – Microbiological Assay

Objective: Confirm the antibacterial activity of AI-generated compounds.

- Compound Procurement: Select top 20-50 candidates for synthesis via contract research organization (CRO) or in-house medicinal chemistry.

- Broth Microdilution MIC Assay (CLSI Guidelines M07):

  a. Bacterial Strains: Use reference strains (e.g., E. coli ATCC 25922, P. aeruginosa ATCC 27853) and clinically resistant isolates.

  b. Preparation: Prepare cation-adjusted Mueller-Hinton broth (CAMHB). Dissolve test compounds in DMSO (final concentration ≤1% v/v).

  c. Plate Setup: Perform serial 2-fold dilutions of the compounds in 96-well plates. Inoculate each well with mid-log phase bacteria to a final density of ~5 x 10⁵ CFU/mL. Include growth (no drug) and sterility (no inoculum) controls.

  d. Incubation & Reading: Incubate at 35°C for 16-20 hours. The MIC is the lowest concentration that completely inhibits visible growth.

- Cytotoxicity Assay (Counter-Screen): Perform parallel MTT or CellTiter-Glo assays on mammalian cell lines (e.g., HEK-293, HepG2) to determine the selectivity index (SI = CC₅₀ / MIC, with both values expressed in the same units, e.g., µM).
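Reading the MIC and computing the selectivity index are simple calculations, but the unit conversion is easy to overlook. The sketch below is illustrative: the helper names are hypothetical, and the rule used for rows with skipped wells (take the lowest concentration in the unbroken run of clear wells starting from the top) is an assumption. CLSI documents give specific rules for trailing endpoints and skipped wells.

```python
def read_mic(concentrations, growth):
    """MIC from one dilution row: lowest concentration in the unbroken run of
    no-growth wells starting at the highest concentration.
    Returns None if even the highest concentration shows growth."""
    mic = None
    for conc, grew in sorted(zip(concentrations, growth), reverse=True):
        if grew:
            break
        mic = conc
    return mic


def ug_per_ml_to_uM(value_ug_per_ml, mol_weight):
    """Convert ug/mL to uM: 1 ug/mL = 1000 / MW (g/mol) uM."""
    return value_ug_per_ml * 1000.0 / mol_weight


def selectivity_index(cc50_uM, mic_uM):
    """SI = CC50 / MIC, with both values in the same molar units."""
    return cc50_uM / mic_uM


# Hypothetical compound (MW 300 g/mol): MIC read in ug/mL, CC50 measured in uM
mic = read_mic([64, 32, 16, 8, 4, 2, 1], [False, False, False, False, True, True, True])
si = selectivity_index(200.0, ug_per_ml_to_uM(mic, 300.0))
```

Converting the MIC to µM before dividing matters because CC₅₀ values from cell assays are usually reported in molar units, while MICs are often reported in µg/mL.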

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Provider Examples | Function in Protocol |

|---|---|---|

| RDKit | Open-source (rdkit.org) | Core cheminformatics: molecule standardization, descriptor calculation, fingerprint generation, and chemical reaction handling. |

| DeepChem | Open-source (deepchem.io) | Provides out-of-the-box ML models (GraphConv, MPNN) for molecular property prediction and dataset management. |

| ChEMBL Database | EMBL-EBI | Curated bioactivity database essential for sourcing high-quality, annotated compound data for model training. |

| Cation-Adjusted Mueller Hinton Broth (CAMHB) | Thermo Fisher, Sigma-Aldrich, BD | Standardized medium for broth microdilution MIC assays, ensuring reproducibility. |

| CellTiter-Glo Luminescent Assay | Promega Corporation | Measures ATP as a marker for metabolically active cells, used for high-throughput cytotoxicity screening. |

| DMSO (Cell Culture Grade) | Sigma-Aldrich, HyClone | Universal solvent for reconstituting small molecule libraries for in vitro testing. |

| 96-Well Assay Plates (Tissue Culture Treated) | Corning, Greiner Bio-One | Standard vessel for performing high-throughput MIC and cytotoxicity assays. |

Diagram: Key AI Model Training & Validation Logic

Diagram Title: AI Model Training & Validation Cycle

This protocol demonstrates a viable, iterative pipeline integrating generative AI with computational filtration and experimental validation to accelerate the discovery of novel antibiotic leads. The continuous feedback of experimental results into model retraining, as framed within the larger thesis on AI for antimicrobial prediction, is critical for refining the generative process and improving the success rate of future design cycles.

Within the broader thesis on artificial intelligence and machine learning for antimicrobial compound prediction, this document presents detailed application notes and protocols for two pioneering case studies. These cases demonstrate the transition from in silico discovery to in vitro and in vivo validation, establishing a new paradigm in antibiotic development.

Case Study 1: Halicin – A Broad-Spectrum AI-Discovered Antibiotic

Discovery Workflow & Validation

Table 1: Halicin Discovery Pipeline and Key Validation Data

| Stage | Method / Assay | Key Quantitative Result | Significance |

|---|---|---|---|

| Training | Deep neural network (directed message-passing neural network, D-MPNN, implemented in Chemprop) | Trained on 2,335 molecules with known growth inhibition of E. coli (Stokes et al., Cell, 2020). | Model learned chemical structures linked to antibacterial activity. |

| Screening | In silico prediction on the Drug Repurposing Hub library (~6,000 compounds). | Halicin (SU-3327) ranked among the top candidates with predicted anti-E. coli activity. | Identified a compound previously investigated for diabetes (as a c-Jun N-terminal kinase inhibitor) with no known antibacterial activity. |

| MIC Determination | Broth microdilution (CLSI M07-A10) | MIC against E. coli BW25113: 2 µg/mL. | Confirmed potent in vitro antibacterial activity. |

| In Vivo Efficacy | Murine neutropenic thigh infection model (A. baumannii). | ~3 log10 CFU reduction compared to vehicle control after 24h treatment (10 mg/kg, IP). | Demonstrated efficacy in a mammalian infection model. |

Experimental Protocols

Protocol 2.2.1: Primary In Vitro MIC Determination for Halicin

Objective: Determine the minimum inhibitory concentration (MIC) against Gram-negative and Gram-positive bacteria using broth microdilution.

Materials:

- Cation-adjusted Mueller-Hinton Broth (CAMHB)

- Sterile 96-well polystyrene microtiter plates

- Bacterial overnight cultures (e.g., E. coli ATCC 25922)

- Dimethyl sulfoxide (DMSO)

- Halicin stock solution (10 mg/mL in DMSO)

Procedure:

- Prepare two-fold serial dilutions of Halicin in CAMHB across a 96-well plate (e.g., 64 µg/mL to 0.125 µg/mL). Include growth control (no drug) and sterility control (no inoculum).

- Dilute a log-phase bacterial culture to ~1 x 10^6 CFU/mL in CAMHB.

- Add 100 µL of the bacterial suspension to each well (except the sterility control) containing 100 µL of diluted drug, prepared at 2x the intended final concentration. The 1:1 mixing gives a final inoculum of ~5 x 10^5 CFU/mL.

- Incubate plate at 37°C for 16-20 hours without shaking.

- The MIC is the lowest concentration of Halicin that completely inhibits visible growth.
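Because drug and cells are mixed 1:1, both the drug dilutions and the inoculum must be prepared at twice their intended final values. The short sketch below makes that arithmetic explicit; the helper names are hypothetical, and it assumes the 64 to 0.125 µg/mL range in the protocol refers to final in-well concentrations.

```python
def two_fold_series(top_conc, n_wells):
    """Concentrations of a two-fold serial dilution, highest first."""
    return [top_conc / 2**i for i in range(n_wells)]


def final_density(cfu_per_ml, cell_volume_ul, drug_volume_ul):
    """Cell density after mixing the bacterial suspension with the drug solution."""
    return cfu_per_ml * cell_volume_ul / (cell_volume_ul + drug_volume_ul)


final = two_fold_series(64.0, 10)   # final in-well concentrations (ug/mL)
working = [2 * c for c in final]    # 2x working solutions for the 1:1 mix
inoculum = final_density(1e6, 100, 100)
```

Starting from a 5 x 10^5 CFU/mL suspension instead would halve the final inoculum to about 2.5 x 10^5 CFU/mL, which can lower the measured MIC.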

Protocol 2.2.2: Assessment of Membrane Depolarization

Objective: Evaluate Halicin's proposed mechanism of disrupting the bacterial proton motive force.

Materials:

- Bacterial cell suspension in 5 mM HEPES, pH 7.2, with 5 mM glucose

- DiSC3(5) fluorescent dye (3,3'-dipropylthiadicarbocyanine iodide)

- Microplate reader (fluorescence mode: Ex/Em 622/670 nm)

- Carbonyl cyanide m-chlorophenyl hydrazone (CCCP) as a positive control

Procedure:

- Harvest mid-log phase bacterial cells, wash, and resuspend in buffer to an OD600 of ~0.05.

- Load cells with 0.4 µM DiSC3(5) for 30 minutes at room temperature.

- Distribute cell suspension into a black-walled microplate.

- Establish a baseline fluorescence reading for 2-5 minutes.

- Inject Halicin (at 10x MIC) and continue monitoring fluorescence for 20 minutes. Include a CCCP control.

- A rapid increase in fluorescence indicates membrane depolarization.
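One common way to quantify the DiSC3(5) trace is to express each reading as a fold change over the pre-injection baseline and compare the peak with the CCCP control. The sketch below assumes evenly sampled readings with a known injection time; the sampling times, the injection time, and the helper names are hypothetical, and the threshold for calling depolarization is left to the analyst.

```python
import numpy as np


def normalize_to_baseline(times_s, fluorescence, injection_time_s):
    """Express each reading as a fold change over the pre-injection mean."""
    times_s = np.asarray(times_s, dtype=float)
    fluorescence = np.asarray(fluorescence, dtype=float)
    baseline = fluorescence[times_s < injection_time_s].mean()
    return fluorescence / baseline


def peak_fold_change(times_s, fluorescence, injection_time_s):
    """Largest post-injection fold change; values well above 1 indicate dye release."""
    norm = normalize_to_baseline(times_s, fluorescence, injection_time_s)
    return float(norm[np.asarray(times_s) >= injection_time_s].max())


# Hypothetical trace: readings every 30 s, compound injected at 150 s
times = np.arange(10) * 30
trace = [100, 100, 100, 100, 100, 100, 150, 300, 310, 305]
peak = peak_fold_change(times, trace, 150)
```

Normalizing each well to its own baseline removes well-to-well differences in cell density and dye loading before treatments are compared.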

Diagram 1: Proposed mechanism of Halicin action disrupting the proton motive force.

The Scientist's Toolkit: Key Reagents for Halicin Studies

Table 2: Essential Research Reagents

| Item | Function/Description |

|---|---|

| Halicin (SU-3327) | The AI-predicted, broad-spectrum antimicrobial compound; serves as the primary experimental agent. |

| Cation-Adjusted Mueller Hinton Broth (CAMHB) | Standardized growth medium for antimicrobial susceptibility testing (CLSI guidelines). |

| DiSC3(5) Dye | Carbocyanine dye used as a potentiometric fluorescent probe for measuring membrane potential. |

| Carbonyl cyanide m-chlorophenyl hydrazone (CCCP) | Chemical uncoupler serving as a positive control for membrane depolarization assays. |

Case Study 2: AB-569 – A Potentiated Dual-Mechanism Drug Candidate

Discovery & Synergistic Action

Table 3: AB-569 Components, Discovery, and Synergy Data

| Component / Aspect | Detail | Quantitative Data / Rationale |

|---|---|---|

| Composition | Ethylenediaminetetraacetic acid (EDTA) + Sodium nitrite (NaNO2). | Optimized molar ratio for delivery and activity. |

| AI/ML Role | Pattern recognition in chemical and transcriptomic data suggested synergy between metal chelation and nitrosative stress pathways. | Identified non-obvious synergistic pair from database of FDA-approved substances. |

| Primary Target | Pseudomonas aeruginosa and other drug-resistant Gram-negative pathogens. | MIC for AB-569 vs. P. aeruginosa PAO1: 32-64 µg/mL (EDTA) + 8-16 mM (NaNO2). |

| Checkerboard Assay (FIC Index) | Used to quantify synergy between EDTA and NaNO2. | Fractional Inhibitory Concentration (FIC) Index consistently < 0.5, confirming strong synergy. |

| In Vivo Wound Model | P. aeruginosa biofilm-infected mouse wound. | AB-569 treatment reduced bacterial load by >99.9% (3 log10 CFU) compared to vehicle. |

Experimental Protocols

Protocol 3.2.1: Checkerboard Assay for Synergy Determination (AB-569)

Objective: Determine the Fractional Inhibitory Concentration (FIC) index for the EDTA/NaNO2 combination.

Materials:

- Sterile 96-well microtiter plate

- CAMHB

- Stock solutions: 10 mg/mL EDTA (pH 8.0), 1 M NaNO2 (in water).

- Bacterial inoculum (e.g., P. aeruginosa PAO1) adjusted to ~1 x 10^6 CFU/mL, giving ~5 x 10^5 CFU/mL after the 2-fold dilution in the wells

Procedure:

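- Prepare a two-dimensional dilution series in which EDTA varies across the columns and NaNO2 varies down the rows. For example, place EDTA in doubling dilutions in columns 1-10 (256 to 0.5 µg/mL), leave column 11 without EDTA (NaNO2 alone), and reserve column 12 for growth and sterility controls; place NaNO2 in doubling dilutions in rows A-G (64 to 1 mM) and leave row H without NaNO2 (EDTA alone). Because each agent contributes 50 µL to a 200 µL final volume, prepare both dilution series at 4x the desired final concentration.

- Add 50 µL of each EDTA concentration to all wells in its respective column.

- Add 50 µL of each NaNO2 concentration to all wells in its respective row.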

- Add 100 µL of bacterial inoculum to each well. Final volume = 200 µL.

- Incubate 37°C, 18-24h. Determine the MIC of each agent alone and in combination.

- Calculate the FIC Index (FICI): FICI = (MIC of A in combination / MIC of A alone) + (MIC of B in combination / MIC of B alone). FICI ≤ 0.5 indicates synergy.
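The FICI calculation can be applied systematically across a read plate. In the sketch below, each row corresponds to one concentration of agent B and each column to one concentration of agent A. Reporting the minimum FICI across rows is one common convention, assumed here; the interpretation bands (≤ 0.5 synergy, > 4 antagonism) are widely used, and the helper names are hypothetical.

```python
def fic_index(mic_a_combo, mic_a_alone, mic_b_combo, mic_b_alone):
    """FICI = MIC_A(combo)/MIC_A(alone) + MIC_B(combo)/MIC_B(alone)."""
    return mic_a_combo / mic_a_alone + mic_b_combo / mic_b_alone


def min_fici(conc_a, conc_b, growth, mic_a_alone, mic_b_alone):
    """Lowest FICI across a checkerboard.

    conc_a: agent A concentration for each column
    conc_b: agent B concentration for each row
    growth[i][j]: True if the well with conc_b[i] and conc_a[j] shows growth
    """
    best = None
    for b, row in zip(conc_b, growth):
        inhibited = [a for a, grew in zip(conc_a, row) if not grew]
        if not inhibited:
            continue  # no inhibitory A concentration at this B level
        fici = fic_index(min(inhibited), mic_a_alone, b, mic_b_alone)
        best = fici if best is None else min(best, fici)
    return best


def interpret_fici(fici):
    """Common bands: <= 0.5 synergy, > 4 antagonism, otherwise no interaction."""
    if fici <= 0.5:
        return "synergy"
    if fici > 4.0:
        return "antagonism"
    return "no interaction"
```

For example, if A alone has an MIC of 64 and B alone an MIC of 16, a well that is clear at A = 16 and B = 4 gives FICI = 0.25 + 0.25 = 0.5, which sits exactly at the synergy cutoff.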

Protocol 3.2.2: Biofilm Disruption Assay

Objective: Quantify the effect of AB-569 on pre-established bacterial biofilms.

Materials:

- 96-well polystyrene tissue culture plate

- Tryptic Soy Broth (TSB) with 1% glucose (for robust biofilm formation)

- Crystal Violet (0.1% w/v in water)

- Acetic acid (33% v/v)

- Microplate reader for OD595 measurement

Procedure:

- Grow biofilms by inoculating wells with 200 µL of a 1:100 dilution of an overnight culture in TSB+glucose. Incubate statically for 24-48h at 37°C.

- Gently aspirate planktonic cells and rinse wells twice with sterile PBS.

- Add fresh medium containing AB-569 (at sub-MIC and MIC levels), EDTA alone, NaNO2 alone, or vehicle control to the established biofilms.

- Incubate for an additional 24h.

- Aspirate, rinse, air-dry, and stain biofilms with 150 µL 0.1% Crystal Violet for 15 minutes.

- Rinse extensively with water, solubilize bound dye with 150 µL 33% acetic acid, and measure OD595.
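A typical way to summarize the crystal violet readings is the percentage of biofilm biomass remaining relative to the vehicle control after subtracting the background. The sketch assumes that medium-only blank wells are stained alongside the samples (the protocol does not list them), and `percent_biomass` is a hypothetical helper.

```python
import statistics


def percent_biomass(treated_od, vehicle_od, blank_od):
    """Remaining biofilm biomass (%) relative to vehicle, after blank subtraction.

    Each argument is a list of OD595 replicate readings; blank wells contain
    medium only and are stained and solubilized like the sample wells.
    """
    blank = statistics.mean(blank_od)
    treated = statistics.mean(treated_od) - blank
    vehicle = statistics.mean(vehicle_od) - blank
    return 100.0 * treated / vehicle


# Hypothetical triplicate readings for one AB-569 concentration
remaining = percent_biomass([0.34, 0.35, 0.36], [1.05, 1.10, 1.15], [0.09, 0.10, 0.11])
```

Reporting treated wells relative to the vehicle control, rather than as raw OD595, makes results comparable across plates stained on different days.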

Diagram 2: Synergistic dual-mechanism of AB-569 against bacterial cells.

The Scientist's Toolkit: Key Reagents for AB-569 & Synergy Studies

Table 4: Essential Research Reagents

| Item | Function/Description |

|---|---|

| Ethylenediaminetetraacetic Acid (EDTA), Disodium Salt | Metal chelator component of AB-569; disrupts outer membrane integrity by removing stabilizing divalent cations. |

| Sodium Nitrite (NaNO2) | Source of nitrosative stress; generates antimicrobial nitric oxide and related reactive species under acidic or reducing conditions. |

| Crystal Violet Stain | Quantitative dye for assessing total biofilm biomass remaining after antimicrobial treatment. |

| 96-Well Polystyrene, Flat-Bottom Plates | Standard substrate for consistent, high-throughput static biofilm formation assays. |

Discussion and Protocol Integration

These case studies provide validated protocols for the critical in vitro and mechanistic evaluation of AI-discovered antimicrobials. The workflow progresses from primary susceptibility testing (Protocol 2.2.1) to mechanistic probing (Protocol 2.2.2) and specialized assays for synergy (Protocol 3.2.1) and biofilm eradication (Protocol 3.2.2). This structured experimental cascade is essential for translating AI-generated predictions into credible therapeutic candidates, forming a core methodological component of the thesis on machine learning-driven antibiotic discovery.

Navigating the Data Desert: Overcoming Challenges in AI/ML Model Development

1. Introduction & Context

Within the thesis "Integrative AI/ML Frameworks for Accelerated Antimicrobial Compound Prediction," the quality, quantity, and representativeness of training data constitute the primary bottleneck. This note details protocols to mitigate data scarcity, identify and correct bias, and standardize data for model generalization.

2. Quantitative Overview of Current Public Antimicrobial Data Landscapes

Table 1: Key Public Data Sources for Antimicrobial AI (Status: 2024)

| Data Repository | Primary Content | Total Compounds (Approx.) | Assay Types | Notable Bias/Risk |

|---|---|---|---|---|

| ChEMBL (Antibacterial subset) | Bioactivity data (IC50, MIC, etc.) | ~1.2M measurements for ~400k compounds | Biochemical, whole-cell phenotypic | Over-representation of synthetic, lipophilic compounds; inconsistent MIC protocols. |

| PubChem AID 1117321 (NIAID) | Phenotypic screening outcomes | ~300,000 compounds | Whole-cell anti-bacterial (MRSA, PA) | Binary active/inactive labels; limited mechanistic and pharmacokinetic data. |

| NDARO / CARD | Antimicrobial resistance gene sequences | N/A (Sequence database) | Genomic | Bias towards clinically prevalent pathogens; under-sampling of environmental resistome. |

| DeepARG Database | Predicted ARG sequences from metagenomics | ~30,000 protein sequences | Computational prediction | False positive risk from homology-based annotations. |

3. Experimental Protocols

Protocol 3.1: Curating a Standardized MIC Training Dataset from Heterogeneous Sources

Objective: To create a standardized, machine-readable dataset for model training from published literature and database entries.

Materials: See "Scientist's Toolkit" (Section 6).

Procedure:

- Data Retrieval: Query ChEMBL via its API (e.g., using the chembl_webresource_client Python package) for targets (e.g., "Penicillin-binding protein") and organisms (e.g., "Staphylococcus aureus").

- Harmonization: Convert all inhibitory values (IC50, Ki, MIC) to molar units (nM). For MIC values reported in µg/mL, convert using the compound's molecular weight.

- Strain Standardization: Map all reported bacterial strains to their standard ATCC or NCTC reference numbers using a curated lookup table.

- Quality Filtering: Remove entries where:

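- The ChEMBL assay confidence score is < 8 (target-based assays only; whole-cell MIC assays are mapped to organism-level targets and typically carry a confidence score of 1, so this filter should not be applied to phenotypic data).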

- The compound is flagged as "Pan-Assay Interference Compound (PAINS)" using a standard filter set (e.g., RDKit implementation).

- The reported MIC value is an outlier (>3 standard deviations) for that compound-strain pair across studies.

- Data Structuring: Output a standardized CSV/JSON file with the mandatory fields Compound_SMILES, Standard_Strain_ID, MIC_nM, pH, Assay_Medium, and Citation_PMID.
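The harmonization and outlier steps can be sketched in plain Python over records that use the field names listed above. Two design choices here are assumptions rather than part of the protocol: the 3-SD rule is applied to log10(MIC) because MIC values follow two-fold dilution steps, and groups with fewer than three values are skipped. Note that with a population SD, a single value can only exceed 3 SD in a group of at least 11 values, so with typical replicate counts a robust alternative, such as the median absolute deviation, may be more practical. The helper names are hypothetical.

```python
import math
import statistics
from collections import defaultdict


def mic_ug_per_ml_to_nM(mic_ug_per_ml, mol_weight):
    """ug/mL -> nM: 1 ug/mL = 1e-3 g/L; divide by MW (g/mol), times 1e9 nM/M."""
    return mic_ug_per_ml * 1e6 / mol_weight


def flag_outliers(records, n_sd=3.0):
    """Flag records whose log10(MIC_nM) lies > n_sd SDs from its group mean.

    Groups are Compound_SMILES / Standard_Strain_ID pairs.
    """
    groups = defaultdict(list)
    for i, rec in enumerate(records):
        groups[(rec["Compound_SMILES"], rec["Standard_Strain_ID"])].append(i)
    flags = [False] * len(records)
    for idx in groups.values():
        if len(idx) < 3:
            continue  # too few values to estimate a spread
        logs = [math.log10(records[i]["MIC_nM"]) for i in idx]
        mean, sd = statistics.fmean(logs), statistics.pstdev(logs)
        for i, value in zip(idx, logs):
            if sd > 0 and abs(value - mean) > n_sd * sd:
                flags[i] = True
    return flags
```

For example, an MIC of 2 µg/mL for a compound with a molecular weight of 250 g/mol converts to 8,000 nM.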

Protocol 3.2: Bias Detection via Chemical Space PCA and Clustering

Objective: To visually and quantitatively assess chemical diversity and potential bias in a compound library.

Procedure:

- Descriptor Calculation: For all SMILES strings in the dataset, compute 200-dimensional molecular fingerprints (e.g., Morgan fingerprints, radius=2) using RDKit.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on the fingerprint matrix to reduce dimensions to the top 3 principal components (PCs).

- Clustering: Apply k-means clustering (k=5-10) to the PCA-reduced data.

- Bias Analysis:

  - Plot PC1 vs. PC2, coloring points by cluster and data source (e.g., ChEMBL vs. in-house).

  - Calculate the percentage of compounds from each source residing in each cluster.

  - Bias Alert: If >70% of the compounds from a single source occupy <30% of the defined chemical-space clusters, flag the dataset as chemically biased.
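A sketch of Protocol 3.2 with scikit-learn, assuming a binary fingerprint matrix has already been computed (a random matrix stands in for real Morgan fingerprints here). The bias rule is interpreted as follows: count the fewest clusters that together hold more than 70% of a source's compounds, and flag the source if that count is below 30% of all clusters. This interpretation, k=5, and the helper names are assumptions.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA


def source_is_biased(cluster_labels, n_clusters, mass=0.70, space=0.30):
    """True if more than `mass` of a source's compounds fall in fewer than
    `space` of all clusters (fewest-clusters interpretation)."""
    counts = sorted(np.bincount(cluster_labels, minlength=n_clusters), reverse=True)
    total, covered, needed = sum(counts), 0, 0
    for c in counts:
        covered += c
        needed += 1
        if covered > mass * total:
            break
    return needed / n_clusters < space


def chemical_space_bias(fingerprints, sources, n_clusters=5, seed=0):
    """PCA to 3 components, k-means on the PCA scores, then the per-source check."""
    coords = PCA(n_components=3, random_state=seed).fit_transform(fingerprints)
    labels = KMeans(n_clusters=n_clusters, n_init=10,
                    random_state=seed).fit_predict(coords)
    sources = np.asarray(sources)
    report = {str(s): source_is_biased(labels[sources == s], n_clusters)
              for s in np.unique(sources)}
    return coords, labels, report


# Random binary matrix as a stand-in for real Morgan fingerprints
rng = np.random.default_rng(0)
fps = rng.integers(0, 2, size=(60, 64))
coords, labels, report = chemical_space_bias(fps, ["ChEMBL"] * 40 + ["in-house"] * 20)
```

The returned `coords` and `labels` can be passed directly to a plotting library to produce the PC1 vs. PC2 plot, colored by cluster or by source.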

4. Visualization: Data Curation and Bias Mitigation Workflow

Diagram Title: AI Antimicrobial Data Curation and Bias Mitigation Pipeline

5. Visualization: Antimicrobial Resistance Prediction Data Flow

Diagram Title: Feature Engineering for Antimicrobial Resistance Gene Prediction

6. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Data-Centric Antimicrobial AI Research

| Item / Reagent | Supplier Examples | Function in Protocol |

|---|---|---|

| ChEMBL WebResource Client | European Molecular Biology Laboratory | Python library for programmatic access to curated bioactivity data (Protocol 3.1). |

| RDKit | Open Source Cheminformatics | Calculates molecular descriptors, fingerprints, and performs PAINS filtering (Protocols 3.1, 3.2). |

| ATCC / NCTC Strains | ATCC, BEI Resources, NCTC | Provides standardized reference bacterial strains for assay harmonization and validation. |

| Cation-Adjusted Mueller Hinton Broth (CAMHB) | Sigma-Aldrich, BD Diagnostics | Standardized medium for performing Clinical & Laboratory Standards Institute (CLSI) compliant MIC assays. |

| Pan-Assay Interference Compounds (PAINS) Filters | RDKit Implementation | Computational filter to remove compounds with promiscuous, non-specific bioactivity patterns from training sets. |

| scikit-learn | Open Source ML Library | Performs PCA, clustering (k-means), and other data preprocessing/analysis steps (Protocol 3.2). |

Mitigating Overfitting and Improving Model Generalizability

1. Introduction: The Challenge in AI-Driven Antimicrobial Discovery

Within AI/ML research for antimicrobial compound prediction, a core challenge is developing models that perform well on novel, structurally diverse compounds not seen during training. Overfitting, in which a model learns spurious patterns from limited or biased training data, severely compromises generalizability. This document provides application notes and protocols to mitigate these issues, ensuring robust predictive performance in real-world drug discovery pipelines.

2. Key Quantitative Data on Regularization Techniques

Table 1: Efficacy of Regularization Methods on AMR Compound Prediction Performance

| Method | Test Set Accuracy (%) | Test Set AUC-ROC | Generalization Gap (Train-Test AUC Drop) | Key Hyperparameter(s) |
|---|---|---|---|---|
| Baseline (No Regularization) | 92.5 ± 1.2 | 0.945 ± 0.015 | 0.121 | N/A |
| L1/L2 Weight Decay | 90.8 ± 0.9 | 0.932 ± 0.010 | 0.065 | λ=0.001 |
| Dropout | 91.5 ± 1.1 | 0.938 ± 0.012 | 0.045 | Dropout rate p=0.5 |
| Early Stopping | 91.0 ± 1.3 | 0.935 ± 0.014 | 0.035 | Patience=20 epochs |
| Data Augmentation (SMILES) | 93.2 ± 0.8 | 0.958 ± 0.008 | 0.025 | N/A |
| Label Smoothing | 90.9 ± 0.7 | 0.934 ± 0.009 | 0.055 | α=0.1 |
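Two of the regularizers in Table 1 are simple enough to sketch without committing to a framework. The Python below is a minimal illustration that assumes binary Active/Inactive labels and a validation score where higher is better (such as AUC-ROC). Label smoothing with α=0.1 pulls the hard 0/1 targets toward 0.5. The early-stopping tracker halts training once the validation score has gone `patience` epochs without improving. The function and class names are hypothetical. In practice, PyTorch's `CrossEntropyLoss(label_smoothing=...)` or a Keras `EarlyStopping(patience=..., restore_best_weights=True)` callback covers the same ground.

```python
# Illustrative sketch; smooth_binary_labels and EarlyStopping are hypothetical names.
from dataclasses import dataclass
from typing import List, Optional


def smooth_binary_labels(labels: List[int], alpha: float = 0.1) -> List[float]:
    """Binary label smoothing: y_smooth = y * (1 - alpha) + alpha / 2."""
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must lie in [0, 1)")
    return [y * (1.0 - alpha) + alpha / 2.0 for y in labels]


@dataclass
class EarlyStopping:
    """Patience-based early stopping on a higher-is-better validation score.

    Training should stop after `patience` consecutive epochs without an
    improvement larger than `min_delta`.
    """

    patience: int = 20
    min_delta: float = 0.0
    best_score: Optional[float] = None
    best_epoch: int = -1
    epochs_without_improvement: int = 0

    def step(self, epoch: int, val_score: float) -> bool:
        """Record one epoch's validation score; return True when training should stop."""
        if self.best_score is None or val_score > self.best_score + self.min_delta:
            self.best_score = val_score
            self.best_epoch = epoch  # a real loop would checkpoint the weights here
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience
```

In a training loop, call `stopper.step(epoch, val_auc)` after each validation pass, break as soon as it returns True, and restore the weights saved at `best_epoch`.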

Table 2: Impact of Dataset Curation on Model Generalizability

| Dataset Characteristic | Model (GNN) AUC on External Validation Set | Notes |
|---|---|---|
| Small, Homogeneous (n=2,000) | 0.62 ± 0.05 | High variance, poor generalization |
| Large, But Biased (Source: Single Pharma Library) | 0.75 ± 0.03 | Fails on natural product scaffolds |
| Curated with Cluster Splitting* | 0.88 ± 0.02 | Robust scaffold generalization |
| Curated with Temporal Splitting** | 0.85 ± 0.03 | Simulates real-world temporal drift |

*Splitting such that structurally similar compounds are not in both train and test sets.

**Training on compounds discovered before a cutoff date and testing on those discovered after it.
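The temporal split in Table 2 can be sketched as follows. The sketch assumes each record carries a date, for example a ChEMBL or PubChem deposition date, and a cutoff chosen by the user. Records dated before the cutoff go to training and the rest to testing. One optional guard is not part of the description above: test records whose identifier (here a canonical SMILES string) already appears in training are dropped. `temporal_split` and the record keys are hypothetical.

```python
# Illustrative sketch; temporal_split and the "date"/"smiles" keys are hypothetical.
from datetime import date
from typing import Dict, List, Tuple


def temporal_split(
    records: List[Dict],
    cutoff: date,
    date_key: str = "date",
    id_key: str = "smiles",
) -> Tuple[List[Dict], List[Dict]]:
    """Train on records dated before `cutoff`, test on records dated on or after it.

    Optional guard (an addition, not part of the protocol text): test records whose
    identifier, e.g. a canonical SMILES, already occurs in training are dropped, so a
    compound re-deposited after the cutoff cannot leak into the test set.
    """
    train = [r for r in records if r[date_key] < cutoff]
    seen = {r[id_key] for r in train}
    test = [r for r in records if r[date_key] >= cutoff and r[id_key] not in seen]
    return train, test
```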

3. Experimental Protocols

Protocol 3.1: Implementing a Scaffold Split for Rigorous Evaluation

Objective: To evaluate model performance on novel molecular scaffolds, preventing over-optimistic estimates from random splits.

Materials: Compound dataset (e.g., from PubChem), RDKit (Python library), scikit-learn.

Procedure:

- Data Preprocessing: Standardize molecules using RDKit (neutralize charges, remove salts, generate canonical SMILES).

- Scaffold Generation: For each compound, extract the Bemis-Murcko scaffold (its ring systems plus the linker atoms that connect them, with side chains removed).

- Cluster by Scaffold: Group all compounds that share an identical scaffold.

- Stratified Split: Sort scaffold clusters by size. Using an iterative algorithm, assign clusters to training (70-80%), validation (10-15%), and test (10-15%) sets, aiming to balance the distribution of bioactivity classes across splits while ensuring no scaffold is present in more than one split.

- Model Training & Evaluation: Train the model on the training set and use the validation set for hyperparameter tuning. Report final performance only on the test set, which contains entirely novel scaffolds.
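A minimal sketch of the Scaffold Generation, Cluster by Scaffold, and Stratified Split steps, assuming RDKit is installed. RDKit's `MurckoScaffold.MurckoScaffoldSmiles` computes the Bemis-Murcko scaffold, and the split itself is plain Python. For brevity it uses a simple greedy assignment: the largest scaffold groups fill the training set first, then validation, then test. This is similar in spirit to DeepChem's `ScaffoldSplitter`. It does not attempt the activity-class balancing described above. The function names are illustrative.

```python
# Illustrative sketch; murcko_scaffold and scaffold_split are hypothetical names.
from collections import defaultdict
from typing import Callable, Dict, List, Sequence, Tuple


def murcko_scaffold(smiles: str) -> str:
    """Bemis-Murcko scaffold SMILES via RDKit (imported lazily).

    Acyclic molecules have no ring system and map to the empty scaffold "".
    """
    from rdkit.Chem.Scaffolds import MurckoScaffold

    return MurckoScaffold.MurckoScaffoldSmiles(smiles=smiles, includeChirality=False)


def scaffold_split(
    smiles_list: Sequence[str],
    frac_train: float = 0.8,
    frac_valid: float = 0.1,
    scaffold_fn: Callable[[str], str] = murcko_scaffold,
) -> Tuple[List[int], List[int], List[int]]:
    """Return (train, valid, test) index lists; no scaffold appears in two splits."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, smi in enumerate(smiles_list):
        groups[scaffold_fn(smi)].append(idx)

    # Largest scaffold groups first; ties broken by first index for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(smiles_list)
    train_cut, valid_cut = frac_train * n, (frac_train + frac_valid) * n
    train: List[int] = []
    valid: List[int] = []
    test: List[int] = []
    for group in ordered:
        if len(train) + len(group) <= train_cut:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cut:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test
```

Passing `scaffold_fn` as a parameter lets the same splitting logic run on precomputed or alternative scaffold definitions, such as generic carbon-only frameworks.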

Protocol 3.2: SMILES-Based Data Augmentation for Molecular Datasets

Objective: To artificially increase the size and diversity of training data for SMILES- or string-based models (e.g., LSTMs, Transformers).

Materials: SMILES strings of training set compounds, Python.

Procedure:

- Canonicalization: Generate a canonical SMILES representation for each training compound using a tool like RDKit.

- Randomization: For each epoch during training, generate randomized SMILES representations of the same molecule. RDKit can typically produce many valid, different SMILES strings for the same structure.

- Augmentation: For each molecule in a training batch, replace its SMILES with a randomly generated variant. This teaches the model that molecular identity is invariant to the atom ordering used to write the SMILES.

- Model-Specific Integration: For sequence models, this is applied directly. For graph-based models (GNNs), the augmented SMILES must first be converted back to a molecular graph for featurization. Because this only permutes node order, it adds little for permutation-invariant message-passing GNNs, so the technique mainly benefits sequence models.
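The Randomization and Augmentation steps could look like the sketch below, assuming RDKit. It shuffles the atom order with `Chem.RenumberAtoms` and writes a non-canonical SMILES with `Chem.MolToSmiles(..., canonical=False)`, which makes the output reproducible for a seeded random generator. RDKit's `doRandom=True` option of `MolToSmiles` is an alternative. The batch helper takes the randomizer as an argument, a design choice of this sketch, so it can be reused or tested without RDKit. `randomized_smiles` and `augment_batch` are hypothetical names.

```python
# Illustrative sketch; randomized_smiles and augment_batch are hypothetical names.
import random
from typing import Callable, List, Optional


def randomized_smiles(smiles: str, rng: random.Random) -> str:
    """Return one randomized (non-canonical) SMILES for the same molecule."""
    from rdkit import Chem  # imported lazily; only needed when augmenting real data

    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return smiles  # leave unparsable entries untouched
    order = list(range(mol.GetNumAtoms()))
    rng.shuffle(order)  # random atom ordering drives a different SMILES traversal
    shuffled = Chem.RenumberAtoms(mol, order)
    return Chem.MolToSmiles(shuffled, canonical=False)


def augment_batch(
    batch: List[str],
    randomizer: Callable[[str, random.Random], str] = randomized_smiles,
    rng: Optional[random.Random] = None,
) -> List[str]:
    """Replace every SMILES in a batch with a freshly randomized variant."""
    if rng is None:
        rng = random.Random()
    return [randomizer(smi, rng) for smi in batch]
```

Calling `augment_batch` on each batch inside the training loop gives every molecule a new variant each epoch.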

Protocol 3.3: Cross-Validation with Nested Scaffold Splits

Objective: To obtain a reliable and generalizable estimate of model performance while tuning hyperparameters.

Materials: As in Protocol 3.1.

Procedure:

- Outer Loop (Performance Estimation): Perform k-fold (e.g., k=5) scaffold splitting on the entire dataset (as per Protocol 3.1). This creates k pairs of (train+validation, test) splits with distinct test scaffolds.

- Inner Loop (Hyperparameter Tuning): For each outer fold, take the combined train+validation portion and perform another j-fold (e.g., j=3) scaffold split on this subset to create (inner-train, inner-validation) sets.

- Tuning: Train models with each hyperparameter configuration on every inner-train set and evaluate them on the corresponding inner-validation set. Select the configuration with the best average inner-validation performance.

- Final Evaluation: Train a final model on the entire outer fold's train+validation set using the best hyperparameters. Evaluate it on the held-out outer test fold.

- Reporting: The final performance is the average metric across all k outer test folds.
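The nested loop can be sketched in plain Python. The sketch assumes a scaffold string has already been computed for every compound, for example with RDKit as in Protocol 3.1. A hypothetical user-supplied callable, `fit_and_score(train_idx, eval_idx, params)`, stands in for model training plus metric computation. Folds are built from whole scaffold groups, each assigned greedily to the currently smallest fold. This is one simple choice, comparable in spirit to scikit-learn's `GroupKFold`. The function names are illustrative.

```python
# Illustrative sketch; scaffold_kfold, nested_scaffold_cv and fit_and_score are hypothetical.
from collections import defaultdict
from statistics import mean
from typing import Callable, Dict, List, Sequence, Tuple


def scaffold_kfold(scaffolds: Sequence[str], k: int, subset: Sequence[int]) -> List[List[int]]:
    """Split the indices in `subset` into k folds without splitting any scaffold group."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for i in subset:
        groups[scaffolds[i]].append(i)
    if len(groups) < k:
        raise ValueError(f"need at least {k} distinct scaffolds, found {len(groups)}")
    folds: List[List[int]] = [[] for _ in range(k)]
    # Largest groups first, each placed in the currently smallest fold.
    for group in sorted(groups.values(), key=lambda g: (-len(g), g[0])):
        min(folds, key=len).extend(group)
    return folds


def nested_scaffold_cv(
    scaffolds: Sequence[str],
    param_grid: Sequence,
    fit_and_score: Callable[[List[int], List[int], object], float],
    k: int = 5,
    j: int = 3,
) -> Tuple[float, list]:
    """Outer k-fold scaffold CV estimates performance; inner j-fold CV tunes params."""
    all_idx = list(range(len(scaffolds)))
    outer_scores, chosen_params = [], []
    for test_idx in scaffold_kfold(scaffolds, k, all_idx):
        held_out = set(test_idx)
        dev_idx = [i for i in all_idx if i not in held_out]  # outer train+validation
        inner_folds = scaffold_kfold(scaffolds, j, dev_idx)

        def inner_score(params) -> float:
            scores = []
            for val_idx in inner_folds:
                val_set = set(val_idx)
                inner_train = [i for i in dev_idx if i not in val_set]
                scores.append(fit_and_score(inner_train, val_idx, params))
            return mean(scores)

        best = max(param_grid, key=inner_score)  # best average inner-validation score
        chosen_params.append(best)
        outer_scores.append(fit_and_score(dev_idx, test_idx, best))  # refit on all dev data
    return mean(outer_scores), chosen_params
```

The returned mean corresponds to the Reporting step. The list of chosen parameters shows how stable the tuning is across outer folds.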

4. Visualizations

Diagram 1: Protocol for Robust Model Evaluation

Diagram 2: Nested Cross-Validation Workflow

5. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust AI/ML in Antimicrobial Discovery

| Item / Solution | Function & Rationale |
|---|---|
| RDKit (Open-source) | Core cheminformatics toolkit for molecule standardization, scaffold generation, fingerprint calculation, and SMILES manipulation. Critical for data curation and featurization. |
| DeepChem Library | Open-source ML library specifically for drug discovery. Provides scaffold split functions, graph neural network models, and hyperparameter tuning frameworks. |
| TensorFlow/PyTorch with Weight & Activation Monitoring (e.g., TensorBoard, Weights & Biases) | ML frameworks with visualization tools to monitor for signs of overfitting (e.g., diverging train/test loss curves, exploding weights). |
| Chemical Checker or MoleculeNet | Benchmarks and pre-processed molecular datasets with standardized splits. Provides a baseline for comparing generalizability techniques. |
| Scikit-learn | Provides essential utilities for metrics, standard data splits, and simple models for baseline comparisons. |
| DOCK or AutoDock Vina (Optional) | Molecular docking software. Used to generate physics-based features (e.g., binding energy, pose) as complementary inputs to ML models, potentially improving generalization. |
| PubChem BioAssay & ChEMBL Databases | Primary sources for experimental bioactivity data. Essential for building diverse, high-quality training datasets. Temporal splitting can be performed using deposition dates. |

Within AI-driven antimicrobial compound prediction research, the reliance on complex "black box" models like deep neural networks poses a significant barrier to scientific trust and clinical adoption. This document provides application notes and protocols for implementing interpretability and explainability (I&E) techniques. The goal is to make model decisions transparent, actionable, and biologically plausible, thereby advancing the broader thesis that explainable AI is critical for accelerating the discovery of novel antimicrobial agents.

The following techniques, when applied to antimicrobial prediction models, offer varying insights. Performance data is synthesized from recent literature (2023-2024) benchmarking these methods on tasks like Minimum Inhibitory Concentration (MIC) prediction and compound mechanism-of-action classification.

Table 1: Comparative Performance of I&E Techniques in Antimicrobial Prediction Tasks

| Technique Category | Specific Method | Primary Insight Generated | Quantitative Fidelity Metric (Avg.) | Computational Cost | Biological Plausibility |
|---|---|---|---|---|---|
| Feature Importance | SHAP (TreeExplainer) | Per-prediction contribution of molecular features/descriptors | Prediction Score Delta: 0.85 (AUC) | Medium | High |
| Feature Importance | Integrated Gradients | Attribution for neural net models using molecular graphs | Area Under Convergence Curve: 0.78 | High | Medium |
| Surrogate Models | LIME (Local) | Local linear approximation of model decision boundary | Local Fidelity: 0.82 (R²) | Low | Medium |
| Intrinsic | Attention Weights (GNNs) | Atom/bond importance in graph-based models | Attention Weight Entropy: 1.4 (nats) | Low | Medium-High |
| Example-Based | Counterfactual Explanations | Minimal change to lead compound that flips prediction | Validity Rate: 91% | High | High |
| Rule Extraction | Skope-Rules | Human-readable IF-THEN rules from tree ensembles | Rule Precision: 88% | Medium | High |

Detailed Experimental Protocols

Protocol 3.1: SHAP Analysis for Tree-Based Antimicrobial Susceptibility Predictors

Objective: To explain predictions of a Random Forest model classifying compounds as "Active" or "Inactive" against a target pathogen.

Materials: Trained Random Forest model, test set of molecular fingerprints (e.g., ECFP4), shap Python library.

Procedure:

- Initialization: Load the trained model and a representative sample of the training data (background dataset, n=100).

- Explainer Instantiation:

explainer = shap.TreeExplainer(model, data=background_data)

- SHAP Value Calculation: For a specific compound of interest (or the whole test set, X_test), compute SHAP values:

shap_values = explainer.shap_values(X_test)

For a binary classifier, depending on the installed shap version, this is either a list with one array per class or a single array with a trailing class axis. In either case, keep the values for the "Active" class (index 1), referred to below as shap_active.

- Visualization & Interpretation:

- Generate a force plot for a single prediction (row i of X_test):

shap.force_plot(explainer.expected_value[1], shap_active[i], X_test[i], feature_names=fingerprint_bit_names)

- Generate a summary plot for global feature importance:

shap.summary_plot(shap_active, X_test, feature_names=fingerprint_bit_names)

- Biological Validation: Map high-importance fingerprint bits to specific chemical substructures (e.g., beta-lactam ring, quinolone core). Cross-reference these substructures with known pharmacophores in antimicrobial databases.
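To make concrete what TreeExplainer computes efficiently for tree models, the sketch below computes interventional Shapley values by brute force for a tiny model. The value of a feature coalition is the model output averaged over the background samples, with the coalition's features fixed to the query compound's values. It enumerates every coalition, so it is only practical for a handful of features. `shapley_values`, the toy model, and its three-bit "fingerprint" are illustrative assumptions, not part of the protocol. A force plot visualizes the additivity property: base value plus attributions equals the prediction.

```python
# Illustrative sketch; shapley_values and toy_model are hypothetical, not the shap API.
from itertools import combinations
from math import factorial
from typing import Callable, FrozenSet, List, Sequence


def shapley_values(
    model: Callable[[Sequence[float]], float],
    x: Sequence[float],
    background: List[Sequence[float]],
) -> List[float]:
    """Exact interventional Shapley values of `model` at `x` by enumerating coalitions.

    Cost grows as 2**len(x), so this is a teaching sketch for a few features only.
    """
    m = len(x)

    def value(coalition: FrozenSet[int]) -> float:
        # Coalition features take the query's values; the others take each
        # background sample's values in turn, and the outputs are averaged.
        total = 0.0
        for z in background:
            total += model([x[f] if f in coalition else z[f] for f in range(m)])
        return total / len(background)

    phi = [0.0] * m
    for i in range(m):
        others = [f for f in range(m) if f != i]
        for size in range(m):
            weight = factorial(size) * factorial(m - size - 1) / factorial(m)
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(bits: Sequence[float]) -> float:
    """Hypothetical activity score over three fingerprint bits, with one interaction."""
    return 0.5 * bits[0] + 0.3 * bits[0] * bits[1] + 0.1 * bits[2]
```

Take x=[1, 1, 1] with an all-zero background. The attributions are 0.65, 0.15 and 0.1, up to floating-point rounding. Bit 0 receives its own effect plus half of the interaction term, and the three values sum to the prediction of 0.9 minus the base value of 0.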

Protocol 3.2: Counterfactual Explanation Generation for Lead Optimization

Objective: Identify minimal, synthetically feasible modifications to an active compound that would cause the model to predict loss of activity, thereby hypothesizing critical functional groups.

Materials: Trained deep learning model (e.g., Graph Neural Network), starting active compound (SMILES string), DiCE or CLEAR Python library.

Procedure:

- Define Constraints: Specify molecular constraints (e.g., maximum molecular weight change = 50 g/mol, allowed atom types, preserve scaffold core).

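- Initialize Generator: Use the DiCE interface to initialize a counterfactual generator with the trained model and constraints. DiCE operates on tabular inputs, so the starting compound must first be encoded as a feature vector (e.g., a fingerprint or descriptor row) rather than passed as a raw SMILES string.

- Generate Counterfactuals: Request a set of diverse counterfactuals (e.g., n=5):

counterfactuals = generator.generate_counterfactuals(query_df, total_CFs=5, desired_class="opposite")

Here query_df is a one-row table holding the encoded starting compound. For a binary Active/Inactive model, desired_class="opposite" requests inactive counterfactuals.

- Analysis: Analyze the structural differences between the original active compound and each generated inactive counterfactual. Common changes (e.g., removal of a hydroxyl group, addition of a bulky substituent) highlight the features the model deems critical.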

- Synthetic & Experimental Planning: Propose the synthesis and testing of the counterfactual compounds to validate the model's learned structure-activity relationship.
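For the Analysis step, tallying which features change across the generated counterfactuals highlights the ones the model treats as critical. The sketch assumes each compound is represented as the set of its "on" fingerprint bits or named substructure features. That would be the case if the counterfactuals were generated on a fingerprint encoding. The function names, the 0.5 threshold, and the example feature labels are hypothetical.

```python
# Illustrative sketch; function names and the min_fraction default are hypothetical.
from collections import Counter
from typing import Iterable, List, Set, Tuple


def tally_counterfactual_edits(
    original: Set[str], counterfactuals: Iterable[Set[str]]
) -> Tuple[Counter, Counter, int]:
    """Count how often each feature is removed from, or added to, the original."""
    removed, added, n = Counter(), Counter(), 0
    for cf in counterfactuals:
        removed.update(original - cf)  # present in the active compound, absent in the CF
        added.update(cf - original)  # introduced by the CF
        n += 1
    return removed, added, n


def candidate_critical_features(
    original: Set[str], counterfactuals: Iterable[Set[str]], min_fraction: float = 0.5
) -> List[str]:
    """Features removed in at least `min_fraction` of counterfactuals, most frequent first."""
    removed, _, n = tally_counterfactual_edits(original, counterfactuals)
    if n == 0:
        return []
    return [feat for feat, count in removed.most_common() if count / n >= min_fraction]
```

Features that most counterfactuals remove are natural first candidates for the synthesis and testing proposed in the final step.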

Protocol 3.3: Attention Mechanism Analysis in Graph Neural Networks

Objective: To interpret a GNN's prediction of a compound's Mechanism of Action (MoA) by visualizing atom- and bond-level attention.

Materials: Trained GNN with attention layers (e.g., GAT, Attentive FP), molecular graph data.

Procedure:

- Forward Pass with Attention Capture: Perform a forward pass of a test compound through the GNN while storing the attention weights from all layers and heads.

- Attention Weight Aggregation: Aggregate attention weights across layers and heads using a method like mean or sum.

- Graph Coloration: Map the aggregated attention scores for atoms and bonds onto the original molecular graph. Use a continuous color scale (e.g., blue=low attention, red=high attention).

- Pattern Recognition: Visually inspect multiple correctly predicted examples for a given MoA class (e.g., DNA gyrase inhibition). Identify if the model consistently attends to known critical regions of the molecule (e.g., the core binding motif).

- Quantitative Validation: Calculate the overlap between top-attended atoms and known essential substructures from co-crystallized ligand-protein structures in the PDB.
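Once attention has been reduced to per-atom scores, the aggregation and quantitative-validation steps take only a few lines. The sketch assumes a hypothetical layout, `attention[layer][head][atom]`. How per-atom scores are obtained from edge-level attention depends on the GNN library; one common choice for GAT-style layers is to sum each atom's incoming attention coefficients. Scores are averaged (or summed) over layers and heads. Overlap is reported in two ways: the fraction of the top-k attended atoms that fall in a reference substructure, and the Jaccard index. The reference atom indices could come from RDKit's `mol.GetSubstructMatch(pattern)` applied to the PDB-derived binding motif. The function names are illustrative.

```python
# Illustrative sketch; the attention[layer][head][atom] layout and names are hypothetical.
from typing import List, Optional, Sequence, Set, Tuple


def aggregate_attention(
    attention: Sequence[Sequence[Sequence[float]]], how: str = "mean"
) -> List[float]:
    """Collapse per-atom scores laid out as attention[layer][head][atom]."""
    n_atoms = len(attention[0][0])
    totals = [0.0] * n_atoms
    n_maps = 0
    for layer in attention:
        for head in layer:
            if len(head) != n_atoms:
                raise ValueError("every head must score the same atoms")
            for atom, score in enumerate(head):
                totals[atom] += score
            n_maps += 1
    if how == "sum":
        return totals
    if how == "mean":
        return [t / n_maps for t in totals]
    raise ValueError("how must be 'mean' or 'sum'")


def top_k_atoms(scores: Sequence[float], k: int) -> Set[int]:
    """Indices of the k highest-scoring atoms (ties broken by lower index)."""
    ranked = sorted(range(len(scores)), key=lambda a: (-scores[a], a))
    return set(ranked[:k])


def attention_overlap(
    scores: Sequence[float], reference_atoms: Set[int], k: Optional[int] = None
) -> Tuple[float, float]:
    """Precision of the top-k attended atoms against a reference substructure,
    plus the Jaccard index; k defaults to the size of the reference set."""
    k = len(reference_atoms) if k is None else k
    top, ref = top_k_atoms(scores, k), set(reference_atoms)
    hits = len(top & ref)
    precision = hits / k if k else 0.0
    union = len(top | ref)
    jaccard = hits / union if union else 0.0
    return precision, jaccard
```

Averaging these two numbers over many correctly predicted compounds of one MoA class turns the visual inspection in the Pattern Recognition step into a comparable metric.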

Visualization of I&E Workflows

Title: Interpretability Workflows for Antimicrobial AI Models

Title: Attention-Based Explainability in a GNN

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for I&E in Antimicrobial AI Research

| Category | Item / Software / Database | Function in I&E Experiments | Key Considerations |

|---|---|---|---|